When you begin preparing for modern System Design interviews, you quickly realize that traditional backend systems are no longer the only focus. With the rise of AI-powered applications, LLM inference optimization has become a critical topic that interviewers expect you to understand deeply. It is no longer enough to design scalable APIs, because you are now expected to design intelligent systems that operate efficiently under real-world constraints.

The Shift From Training To Inference

Most discussions around large language models initially focus on training, but in production systems, inference is where the real challenges begin. Training happens offline and infrequently, while inference happens in real time for every user request, which means it directly impacts latency, cost, and user experience.

When you design systems that rely on LLMs, you are essentially building around inference workloads. This makes optimization not just a performance concern but a fundamental System Design requirement.

Why Interviewers Care About Inference Optimization

In System Design interviews, you are often asked to design systems like chatbots, copilots, or AI-powered search engines. These systems rely heavily on LLM inference, and interviewers want to see whether you can balance performance with cost and scalability.

If you propose a design that uses a large model without considering latency or cost implications, it signals that you are thinking at a surface level. On the other hand, when you incorporate optimization strategies naturally into your design, it shows that you understand how real systems operate in production.

The Trade-Off Mindset You Need To Develop

LLM inference optimization is fundamentally about trade-offs, and this is where many candidates struggle. Improving latency may increase cost, while reducing cost may impact model quality, which means every decision requires careful evaluation.

| Optimization Goal | What You Improve | What You Risk |
|---|---|---|
| Lower Latency | Faster responses | Higher infrastructure cost |
| Lower Cost | Reduced compute usage | Slower or lower-quality output |
| Higher Throughput | More requests handled | Increased system complexity |

When you start thinking in terms of these trade-offs, your answers become more aligned with how experienced engineers approach System Design problems.

From Functional Systems To Efficient Systems

Early in your preparation, it feels sufficient to design systems that simply work. However, as you progress, you realize that efficiency is what differentiates strong designs from average ones.

LLM inference optimization forces you to think beyond functionality and consider how your system behaves under load, how much it costs to run, and how it scales over time. This shift in thinking is exactly what interviewers are looking for.

Understanding The LLM Inference Pipeline End-To-End

Before you can optimize anything, you need to understand how LLM inference actually works. Many candidates jump straight into optimization techniques without understanding the pipeline, which leads to shallow or incomplete answers.

What Happens During Inference

At a high level, inference involves taking an input prompt, processing it through a model, and generating an output. While this sounds simple, each step involves multiple stages that contribute to latency and cost.

Understanding these stages helps you identify where bottlenecks occur and where optimization efforts should be focused.

Breaking Down The Inference Pipeline

The LLM inference pipeline can be divided into distinct stages, each with its own performance characteristics and optimization opportunities.

| Stage | What Happens | Optimization Opportunity |
|---|---|---|
| Tokenization | Input text is converted into tokens | Reduce input size |
| Model Execution | Tokens pass through the model | Use better hardware or smaller models |
| Decoding | Tokens are generated step by step | Optimize sampling strategies |
| Post-Processing | Output is formatted or filtered | Minimize unnecessary work |

When you understand this breakdown, you begin to see that optimization is not a single step but a combination of improvements across the pipeline.

Why Tokenization And Decoding Matter

Many candidates focus only on model execution, but tokenization and decoding also play a significant role in performance. Long prompts produce more input tokens, which increases processing time and memory usage, while inefficient decoding strategies can slow down response generation.

When you mention these stages in interviews, it shows that you have a deeper understanding of the system rather than just surface-level knowledge.

Identifying Bottlenecks In Real Systems

In real-world systems, bottlenecks often occur in unexpected places. For example, a system might be limited by GPU memory rather than compute, or by decoding latency rather than model size.

By analyzing each stage of the pipeline, you can pinpoint these bottlenecks and apply targeted optimizations. This structured approach is what interviewers expect to see when you discuss inference systems.
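As a rough illustration of stage-by-stage analysis, the sketch below times each pipeline stage and reports which one dominates. The `StageTimer` class is a hypothetical helper, and the `time.sleep` calls are stand-ins for real work with made-up durations, so the numbers say nothing about any real model.

```python
import time
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named pipeline stage (hypothetical helper)."""

    def __init__(self):
        self.durations = {}

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed = time.perf_counter() - start
            self.durations[name] = self.durations.get(name, 0.0) + elapsed

    def bottleneck(self):
        # The stage with the largest accumulated time.
        return max(self.durations, key=self.durations.get)


timer = StageTimer()
# The sleeps below are placeholders for real stage work, not measurements.
with timer.stage("tokenization"):
    time.sleep(0.001)
with timer.stage("model_execution"):
    time.sleep(0.005)
with timer.stage("decoding"):
    time.sleep(0.05)
with timer.stage("post_processing"):
    time.sleep(0.001)

total = sum(timer.durations.values())
for name, seconds in timer.durations.items():
    print(f"{name}: {seconds / total:.0%} of request time")
print("bottleneck:", timer.bottleneck())
```

Measuring first and optimizing second keeps the discussion grounded: whichever stage holds the largest share of request time is the one worth targeting.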

Key Metrics: Latency, Throughput, And Cost

Once you understand the inference pipeline, the next step is defining what you are optimizing for. LLM inference optimization revolves around a few key metrics, and your ability to reason about these metrics is critical in interviews.

Why Metrics Drive Optimization Decisions

Without clear metrics, optimization becomes guesswork. Metrics provide a way to measure performance and evaluate whether your changes are actually improving the system.

When you design systems, you should always start by identifying which metrics matter most for your use case. This ensures that your optimization efforts are aligned with real requirements.

Understanding Latency In LLM Systems

Latency refers to how long it takes for a system to respond to a request, and in LLM applications, it directly affects user experience. High latency can make applications feel slow and unresponsive, which is unacceptable for interactive systems.

Latency is often measured using percentiles such as p50, p95, and p99, which provide a more accurate picture of performance under different conditions.

| Latency Metric | What It Represents | Why It Matters |
|---|---|---|
| p50 | Median response time | Typical user experience |
| p95 | 95th percentile latency | Performance under load |
| p99 | 99th percentile (tail) latency | Reliability at scale |

Understanding these metrics helps you design systems that perform consistently, not just on average.
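To make the percentiles concrete, here is a minimal sketch that computes them with the nearest-rank method. The `percentile` function and the latency samples are assumptions for illustration, and monitoring tools may use slightly different interpolation rules.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(p / 100 * len(ordered))  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical request latencies in milliseconds, including one slow outlier.
latencies_ms = [120, 130, 125, 140, 135, 150, 145, 160, 155, 2000]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
p99 = percentile(latencies_ms, 99)
mean = sum(latencies_ms) / len(latencies_ms)

print(f"p50={p50} ms, p95={p95} ms, p99={p99} ms, mean={mean} ms")
```

With these samples the median is 140 ms while the mean is 326 ms, because a single 2-second request drags the average up. The mean describes neither the typical request nor the slow one, which is why percentiles are reported separately.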

Throughput And System Capacity

Throughput measures how many requests your system can handle within a given time frame. In LLM systems, increasing throughput often involves batching requests or optimizing resource usage.

However, increasing throughput can sometimes increase latency, which creates a trade-off that you need to manage carefully. This is why balancing metrics is a key part of System Design.

Cost As A First-Class Metric

Unlike most traditional backend requests, each LLM inference call consumes significant compute, especially when using large models. This makes cost a first-class metric that must be considered alongside latency and throughput.

| Metric | What It Measures | Impact |
|---|---|---|
| Latency | Response time | User experience |
| Throughput | Requests per second | System scalability |
| Cost | Compute per request | Business viability |

When you treat cost as a core metric, your designs become more realistic and aligned with business constraints.
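A back-of-the-envelope cost estimate is often enough in an interview. The sketch below assumes token-based pricing with separate input and output rates. The prices, token counts, and traffic figures are invented for illustration and do not reflect any provider's actual rates.

```python
def cost_per_request(input_tokens, output_tokens, price_in_per_1k, price_out_per_1k):
    """Estimated cost of one request under per-1,000-token pricing."""
    return (input_tokens / 1000) * price_in_per_1k + (output_tokens / 1000) * price_out_per_1k


def monthly_cost(requests_per_day, per_request_cost, days=30):
    """Scale a per-request cost up to a monthly bill."""
    return requests_per_day * per_request_cost * days


# Hypothetical prices (USD per 1,000 tokens) and a hypothetical workload.
per_request = cost_per_request(
    input_tokens=1500,
    output_tokens=500,
    price_in_per_1k=0.001,
    price_out_per_1k=0.002,
)
per_month = monthly_cost(requests_per_day=100_000, per_request_cost=per_request)

print(f"per request: ${per_request:.4f}, per month: ${per_month:,.0f}")
```

Under these assumed numbers a quarter of a cent per request becomes roughly $7,500 per month at 100,000 requests per day, which shows how quickly small per-request costs compound at scale.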

The Interplay Between Metrics

One of the most important things to understand is that these metrics are interconnected. Improving one metric often affects the others, which means optimization is always about finding the right balance.

In interviews, being able to explain these trade-offs clearly is often more important than proposing a perfect solution.

Model Selection And Size Trade-Offs

One of the earliest and most impactful decisions you make in an LLM system is choosing the model. This decision often determines your baseline performance, cost, and scalability, which makes it a critical part of inference optimization.

Why Model Choice Is The First Optimization Step

Many candidates focus on optimizing infrastructure or pipelines, but the most effective optimization often comes from choosing the right model. A smaller or more efficient model can reduce latency and cost significantly without requiring complex optimizations.

This is why experienced engineers treat model selection as a foundational decision rather than an afterthought.

Comparing Different Model Sizes

LLMs come in various sizes, each with its own trade-offs in terms of performance, cost, and resource requirements.

| Model Type | Advantage | Trade-Off |
|---|---|---|
| Large Models | High accuracy and quality | High latency and cost |
| Medium Models | Balanced performance | Moderate cost |
| Small Models | Fast and efficient | Lower quality |

Understanding these differences helps you choose a model that aligns with your system requirements.

Distilled And Quantized Models

In addition to model size, techniques like distillation and quantization can significantly impact performance. Distilled models are smaller versions of larger models that retain much of their functionality, while quantized models use lower precision to reduce resource usage.

These techniques allow you to achieve better efficiency without drastically sacrificing quality, which is often a key consideration in production systems.

Matching Models To Use Cases

Different applications have different requirements, which means there is no one-size-fits-all model. For example, a real-time chatbot may prioritize low latency, while a research tool may prioritize accuracy.

When you align your model choice with your use case, you create a strong foundation for further optimization. This approach also demonstrates to interviewers that you are making thoughtful, context-driven decisions.
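One way to express this matching in code is to filter candidate models by the use case's latency and cost budgets, then pick the highest-quality option that remains. The catalog below is entirely hypothetical: the model names, latencies, prices, and quality scores are placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    p95_latency_ms: int
    cost_per_1k_tokens: float
    quality_score: float  # hypothetical score from an offline evaluation, 0 to 1


# Placeholder catalog; real values would come from your own measurements.
CATALOG = [
    ModelOption("small", 150, 0.0002, 0.70),
    ModelOption("medium", 400, 0.0010, 0.82),
    ModelOption("large", 1200, 0.0100, 0.93),
]


def choose_model(catalog, max_latency_ms, max_cost_per_1k):
    """Return the highest-quality model that fits both budgets, or None if none fits."""
    candidates = [
        m for m in catalog
        if m.p95_latency_ms <= max_latency_ms and m.cost_per_1k_tokens <= max_cost_per_1k
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda m: m.quality_score)


chatbot_choice = choose_model(CATALOG, max_latency_ms=500, max_cost_per_1k=0.005)
research_choice = choose_model(CATALOG, max_latency_ms=5000, max_cost_per_1k=0.05)

print("real-time chatbot:", chatbot_choice.name)
print("research tool:", research_choice.name)
```

The same catalog yields different answers for different budgets, which is the point: the constraints of the use case drive the choice, not the model's raw quality alone.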

Interview Insight: Choosing The Right Model

In interviews, starting with model selection shows that you understand where the biggest gains come from. Instead of jumping into complex optimizations, you demonstrate that you can solve problems at the right level.

This ability to prioritize decisions is what separates strong candidates from those who rely on memorized techniques.

Hardware Acceleration And Infrastructure Choices

As you move deeper into LLM inference optimization, you begin to realize that software-level improvements are only part of the story. The underlying hardware and infrastructure you choose play a massive role in determining performance, cost, and scalability. This is why strong System Design answers always include thoughtful infrastructure decisions.

Why Hardware Matters For LLM Inference

LLMs are compute-intensive by nature, and their performance depends heavily on how efficiently you can execute matrix operations. CPUs can handle inference workloads, but they are often not optimized for the parallel computations required by large models.

This is where specialized hardware comes into play, allowing you to significantly reduce latency and improve throughput. When you understand these differences, you start making infrastructure decisions that align with your system’s requirements.

Comparing Infrastructure Options

Different hardware options offer different trade-offs in terms of cost, performance, and flexibility. Choosing the right one depends on your workload characteristics and budget constraints.

| Hardware Type | Strength | Trade-Off |
|---|---|---|
| CPU | Low cost and flexible | Slower for large models |
| GPU | High parallel performance | Expensive and limited availability |
| TPU | Optimized for ML workloads | Less flexible ecosystem |
| AWS Inferentia | Cost-efficient inference | Requires specific integration |

When you explain these trade-offs in interviews, it shows that you are thinking beyond code and considering real deployment environments.

Single-Node Vs Distributed Inference

Another important decision is whether to run inference on a single machine or distribute it across multiple nodes. Single-node setups are simpler and easier to manage, but they may not scale for large workloads.

Distributed inference allows you to handle larger models and higher traffic, but it introduces complexity in coordination and communication. This is a classic System Design trade-off where simplicity competes with scalability.

Memory And Bandwidth Constraints

In many cases, inference performance is limited not by compute but by memory and data movement. Large models require significant memory, and inefficient data transfer can become a bottleneck.

When you mention memory constraints in interviews, it signals that you understand low-level system behavior. This level of detail often distinguishes strong candidates from those who focus only on high-level design.
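One common source of this memory pressure in transformer models, not named above, is the key-value (KV) cache that stores attention keys and values for every token in flight. The sketch below applies the standard size estimate to a hypothetical model shape. The layer count, head count, and head dimension are assumptions chosen only to make the arithmetic concrete.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size, bytes_per_value=2):
    """Estimate KV cache size; the factor of 2 covers keys and values at every layer."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_value


# Hypothetical model shape with 16-bit (2-byte) cache entries.
per_sequence = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=1)
per_batch = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=8)

per_sequence_gib = per_sequence / 2**30
per_batch_gib = per_batch / 2**30

print(f"one 4096-token sequence: {per_sequence_gib} GiB")
print(f"batch of 8 sequences:    {per_batch_gib} GiB")
```

Because the estimate grows linearly with both sequence length and batch size, memory can cap how many concurrent requests a device serves well before compute is saturated.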

Batching And Dynamic Batching Strategies

Batching is one of the most powerful techniques for improving LLM inference efficiency. It allows you to process multiple requests together, which increases hardware utilization and improves throughput. However, batching also introduces trade-offs that you need to manage carefully.

What Batching Actually Does

At its core, batching combines multiple input requests into a single computation. Instead of running inference separately for each request, you process them together, which reduces overhead and improves efficiency.

This approach is especially effective when using GPUs, as they are designed to handle parallel workloads. By batching requests, you make better use of available resources.

Static Vs Dynamic Batching

There are different ways to implement batching, and each comes with its own trade-offs. Static batching groups requests into fixed-size batches, while dynamic batching adjusts batch sizes based on incoming traffic.

| Batching Type | Advantage | Trade-Off |
|---|---|---|
| Static Batching | Simple and predictable | May waste capacity |
| Dynamic Batching | Better utilization | Increased latency variability |

Dynamic batching is often used in production systems because it adapts to real-time conditions, but it requires more sophisticated implementation.

The Latency Vs Throughput Trade-Off

Batching improves throughput but can increase latency because requests may need to wait until a batch is formed. This creates a trade-off that you need to balance based on your application’s requirements.

For example, a real-time chatbot may prioritize low latency and use smaller batches, while a background processing system may prioritize throughput and use larger batches. Being able to articulate this trade-off is critical in interviews.
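The trade-off can be sketched with a small simulation of dynamic batching. A batch is flushed either when it is full or when its oldest request has waited for a maximum time. The function `form_batches`, its parameters, and the arrival times are assumptions for illustration; production serving frameworks implement this with queues and schedulers rather than a single loop.

```python
def form_batches(arrival_times_ms, max_batch_size, max_wait_ms):
    """Group request arrival times into (flush_time_ms, [arrival_times]) batches.

    A batch is flushed when it reaches max_batch_size, or when its oldest
    request has waited max_wait_ms, whichever comes first.
    """
    batches = []
    current = []
    for t in sorted(arrival_times_ms):
        # If the pending batch timed out before this request arrived, flush it first.
        if current and t > current[0] + max_wait_ms:
            batches.append((current[0] + max_wait_ms, current))
            current = []
        current.append(t)
        if len(current) == max_batch_size:
            batches.append((t, current))
            current = []
    if current:
        # The final partial batch is flushed when its timeout expires.
        batches.append((current[0] + max_wait_ms, current))
    return batches


# Hypothetical traffic: a trickle, then a burst, then a lone request.
arrivals = [0, 2, 3, 50, 51, 52, 53, 200]
batches = form_batches(arrivals, max_batch_size=4, max_wait_ms=10)

for flush_time, members in batches:
    worst_wait = flush_time - members[0]
    print(f"flush at {flush_time} ms, size {len(members)}, longest wait {worst_wait} ms")
```

With these hypothetical arrivals, the quiet periods produce small batches released by the timeout, while the burst fills a batch almost immediately. Lowering `max_wait_ms` bounds the added latency more tightly, and raising `max_batch_size` favors throughput.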

Real-World Insight: Production Systems

Many large-scale AI systems use dynamic batching to optimize performance. By grouping requests intelligently, they achieve high throughput while keeping latency within acceptable limits.

When you reference this approach in interviews, it demonstrates that you understand how modern AI systems operate in production environments.

Caching Strategies For LLM Systems

Caching is one of the most effective ways to reduce both latency and cost in LLM systems. Instead of recomputing results for every request, you store and reuse previous outputs, which can significantly improve performance.

Why Caching Is So Powerful

LLM inference is expensive because it involves running complex computations for each request. If similar requests occur frequently, recomputing results becomes inefficient.

Caching allows you to avoid this redundancy by storing results and serving them directly when the same or similar request is received. This reduces both compute usage and response time.

Types Of Caching In LLM Systems

There are multiple caching strategies that you can apply depending on the nature of your application. Each type addresses a different aspect of redundancy in LLM inference.

| Cache Type | What It Stores | Benefit |
|---|---|---|
| Prompt Cache | Repeated input prompts | Avoids recomputation |
| Response Cache | Generated outputs | Faster responses |
| Embedding Cache | Vector representations | Reduces preprocessing cost |

Understanding these different types helps you design more efficient systems.

When Caching Works Best

Caching is most effective when there is a high degree of repetition in requests. For example, frequently asked questions in a chatbot or repeated queries in a search system are ideal candidates for caching.

However, caching becomes less effective when requests are highly unique or dynamic. Recognizing these scenarios helps you decide when to invest in caching strategies.
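A minimal sketch of an exact-match response cache might look like the following. It normalizes whitespace and case before hashing the prompt and evicts the least recently used entry when full. The `ResponseCache` class and the `fake_model` function are hypothetical, and matching "similar" rather than identical prompts would need a different technique, such as embedding similarity, which is not shown here.

```python
import hashlib
from collections import OrderedDict


class ResponseCache:
    """Exact-match LRU cache keyed by a hash of the normalized prompt (illustrative only)."""

    def __init__(self, max_entries=1000):
        self.max_entries = max_entries
        self._store = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt):
        # Collapse whitespace and lowercase so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_compute(self, prompt, generate):
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        self.misses += 1
        response = generate(prompt)
        self._store[key] = response
        if len(self._store) > self.max_entries:
            self._store.popitem(last=False)  # evict the least recently used entry
        return response


calls = []


def fake_model(prompt):
    # Stand-in for an expensive inference call.
    calls.append(prompt)
    return f"answer to: {prompt}"


cache = ResponseCache(max_entries=2)
first = cache.get_or_compute("What is batching?", fake_model)
second = cache.get_or_compute("  what is   BATCHING? ", fake_model)

print(f"model calls: {len(calls)}, hits: {cache.hits}, misses: {cache.misses}")
```

The second request differs only in spacing and capitalization, so it is served from the cache without invoking the model. Whether lowercasing is safe depends on the application, since some prompts are case-sensitive.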

Interview Insight: Reducing Inference Cost

In interviews, caching is often discussed as a way to reduce both cost and latency. When you mention caching strategies, you show that you are thinking about efficiency at a system level.

This also demonstrates that you understand how to optimize systems without relying solely on hardware or model changes.

Token Optimization And Prompt Engineering

One of the most overlooked aspects of LLM inference optimization is the role of tokens. Since LLMs process text as tokens and most hosted APIs bill by token count, optimizing how you structure prompts can have a direct impact on both cost and performance.

Why Tokens Matter In LLM Systems

Every request to an LLM involves processing tokens, which represent pieces of text. The number of tokens directly affects both latency and cost, as more tokens require more computation.

When you reduce the number of tokens, you reduce the amount of work the model needs to perform. This makes token optimization one of the simplest yet most effective strategies.

Reducing Prompt Length

One way to optimize tokens is by shortening prompts without losing essential context. This involves removing unnecessary information and focusing only on what the model needs to generate accurate responses.

Shorter prompts not only reduce cost but also improve latency, making your system more efficient overall.

Prompt Compression Techniques

In more advanced systems, you can apply techniques to compress prompts while preserving their meaning. This might involve summarizing previous context or using structured formats to reduce redundancy.

| Technique | Purpose | Impact |
|---|---|---|
| Prompt Trimming | Remove unnecessary text | Lower cost and latency |
| Context Summarization | Compress conversation history | Efficient long sessions |
| Template Prompts | Standardize inputs | Consistent performance |

These techniques allow you to manage token usage more effectively in complex applications.
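Prompt trimming can be sketched as keeping the system prompt plus as many of the most recent messages as fit a token budget. The `estimate_tokens` function below is a crude assumption (one token per whitespace-separated word); a real system would use the tokenizer that matches its model.

```python
def estimate_tokens(text):
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def trim_history(system_prompt, messages, max_tokens):
    """Keep the system prompt and the newest messages that fit within max_tokens."""
    budget = max_tokens - estimate_tokens(system_prompt)
    kept = []
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return [system_prompt] + list(reversed(kept))


system_prompt = "You are a helpful assistant."
history = [
    "Hi there",
    "Hello, how can I help you today?",
    "Explain dynamic batching briefly",
    "It groups requests that arrive close together",
]

trimmed = trim_history(system_prompt, history, max_tokens=18)
used = sum(estimate_tokens(part) for part in trimmed)

print(trimmed)
print(f"estimated tokens used: {used}")
```

Dropping the oldest turns first is the simplest policy. Context summarization would instead replace the dropped turns with a short summary, trading an extra model call for better recall of earlier context.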

System Tokens Vs User Tokens

Another important distinction is between system-level tokens and user-generated tokens. System tokens often include instructions or context that guide the model’s behavior, while user tokens represent the actual input.

Balancing these two types of tokens is important because excessive system prompts can increase cost without adding significant value. Understanding this balance helps you design more efficient interactions.

Interview Insight: Optimizing At The Input Level

In interviews, discussing token optimization shows that you are thinking about efficiency at every stage of the system. Instead of focusing only on infrastructure or models, you are optimizing the inputs themselves.

This holistic approach demonstrates a deeper understanding of LLM systems and sets you apart as a candidate who can design truly efficient solutions.

Quantization And Model Compression Techniques

As you move into more advanced optimization strategies, you begin to explore ways to make models themselves more efficient. Instead of only optimizing infrastructure or requests, you reduce the computational complexity of the model, which can lead to significant improvements in both cost and latency. This is where quantization and model compression techniques become highly relevant.

What Quantization Does To A Model

Quantization reduces the precision of the numbers used in a model, which lowers memory usage and speeds up computation. Instead of using high-precision formats like FP32, you can use formats like INT8 or even INT4, which require fewer resources.

This change allows models to run faster and consume less memory, making them more suitable for production environments. However, this comes with a trade-off: reducing precision can lower model accuracy, and the impact tends to grow as precision drops.
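The core idea can be shown with a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. This is one simple scheme among several, the weights are made-up values, and real toolchains typically quantize per channel or per group using optimized kernels.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Made-up weights for illustration.
weights = [0.50, -1.27, 0.03, 0.9991, -0.42]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

Each weight is now stored as a single byte plus one shared scale, and the round-trip error is bounded by half the scale. That bounded rounding error is the source of the accuracy trade-off.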

Comparing Quantization Levels

Different levels of quantization offer varying degrees of efficiency and accuracy. Choosing the right level depends on how much performance you are willing to trade for efficiency.

| Quantization Type | Benefit | Trade-Off |
|---|---|---|
| FP16 | Faster computation | Minor accuracy loss |
| INT8 | Significant speedup and memory savings | Moderate accuracy impact |
| INT4 | Maximum efficiency | Higher accuracy degradation |

Understanding these differences helps you make informed decisions based on your system’s requirements.
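The memory side of this comparison is simple arithmetic: parameter count times bytes per parameter. The sketch below applies it to a hypothetical 7-billion-parameter model. It counts weights only and ignores quantization metadata, activations, and any cache, so treat the results as lower bounds.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"FP32": 4.0, "FP16": 2.0, "INT8": 1.0, "INT4": 0.5}


def weight_memory_gib(num_params, precision):
    """Approximate memory for model weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


num_params = 7_000_000_000  # hypothetical model size

footprints = {
    precision: round(weight_memory_gib(num_params, precision), 2)
    for precision in BYTES_PER_PARAM
}

for precision, gib in footprints.items():
    print(f"{precision}: {gib} GiB")
```

Halving the precision halves the weight footprint, which can decide whether a model fits on a single accelerator or has to be split across several.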

Model Compression Techniques Beyond Quantization

In addition to quantization, techniques like pruning and distillation can further reduce model size and complexity. Pruning removes unnecessary parameters, while distillation transfers knowledge from a large model to a smaller one.

These approaches allow you to maintain acceptable performance while significantly reducing resource requirements. This makes them particularly useful for large-scale deployments where efficiency is critical.

When To Use Compression Techniques

Compression techniques are most effective when you are deploying models at scale or operating under strict cost constraints. For example, mobile or edge deployments often require smaller models due to limited resources.

In interviews, discussing these techniques shows that you understand how to optimize systems at a deeper level. It also demonstrates that you can balance performance with practical constraints.

Parallelism And Scaling Strategies

As your system grows, optimizing a single instance is no longer sufficient. You need to scale your inference system to handle higher traffic and larger models, which introduces new challenges and opportunities.

Why Scaling Is Essential For LLM Systems

LLM-powered applications often experience high demand, especially in consumer-facing products. Without proper scaling strategies, your system can become a bottleneck, leading to increased latency and poor user experience.

Scaling ensures that your system can handle growing workloads while maintaining performance. This is a core requirement in System Design interviews.

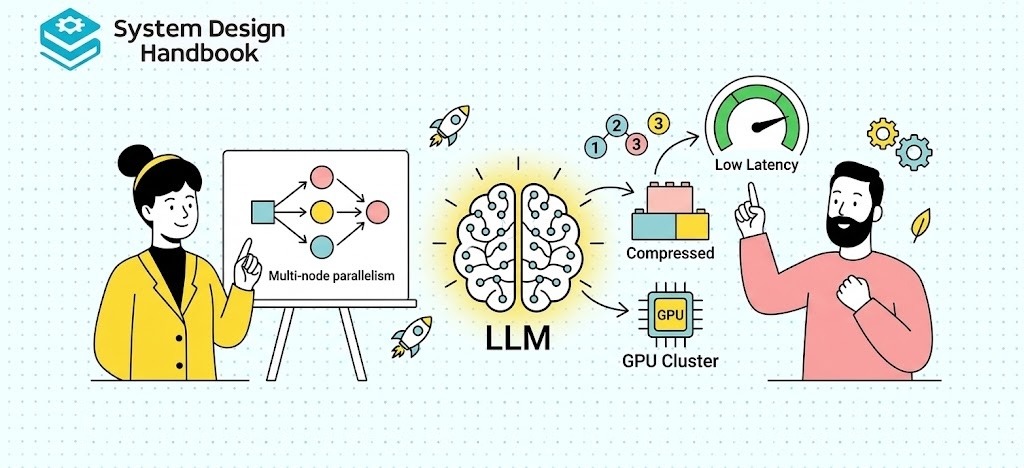

Types Of Parallelism In LLM Systems

There are multiple ways to distribute workloads across resources, each with its own benefits and complexities.

| Parallelism Type | What It Does | Use Case |
|---|---|---|
| Data Parallelism | Splits requests across multiple instances | High traffic systems |
| Model Parallelism | Splits model across devices | Very large models |
| Pipeline Parallelism | Splits the model's layers into stages that run in sequence across devices | Complex architectures |

Each approach addresses a different scaling challenge, and understanding when to use each is critical.
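Of the three, data parallelism is the easiest to sketch: each replica holds a full copy of the model, and a router spreads requests across them. The example below routes each request to the replica with the fewest in-flight requests. The `ReplicaPool` class and replica names are hypothetical, and a real router would also handle health checks and failures.

```python
class ReplicaPool:
    """Least-loaded routing across identical model replicas (illustrative only)."""

    def __init__(self, replica_names):
        self.in_flight = {name: 0 for name in replica_names}

    def acquire(self):
        # Choose the replica with the fewest in-flight requests;
        # ties go to the replica listed first.
        name = min(self.in_flight, key=self.in_flight.get)
        self.in_flight[name] += 1
        return name

    def release(self, name):
        # Call when a request finishes so the replica's load drops.
        self.in_flight[name] -= 1


pool = ReplicaPool(["gpu-0", "gpu-1", "gpu-2"])

assigned = [pool.acquire() for _ in range(4)]
pool.release("gpu-1")          # the request on gpu-1 completes
next_choice = pool.acquire()   # the freed replica is now the least loaded

print("first four assignments:", assigned)
print("next assignment:", next_choice)
```

Least-loaded routing tends to suit LLM traffic better than strict round-robin because request durations vary widely with output length.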

Trade-Offs In Scaling Strategies

Scaling introduces trade-offs related to complexity, cost, and coordination. While adding more machines can improve throughput, it also increases infrastructure costs and operational overhead.

For example, model parallelism allows you to run large models but requires careful synchronization between devices. Being able to explain these trade-offs clearly is a key part of strong interview answers.

Designing For Multi-Tenant Systems

In real-world applications, your system may need to serve multiple users or clients simultaneously. This introduces additional challenges, such as resource isolation and fair usage.

When you design multi-tenant systems, you need to ensure that no single user negatively impacts others. This often involves implementing rate limiting, resource allocation strategies, and efficient scheduling.
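Rate limiting per tenant is commonly implemented with a token bucket. The sketch below is one such implementation under stated assumptions: the class names are hypothetical, and time is passed in explicitly so the behavior is deterministic, whereas a real service would read a monotonic clock.

```python
class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity, refill_per_second, now=0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)
        self.last_refill = now

    def allow(self, now, cost=1.0):
        # Add tokens earned since the last call, capped at capacity.
        elapsed = now - self.last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class TenantLimiter:
    """Keeps one independent bucket per tenant so tenants cannot starve each other."""

    def __init__(self, capacity, refill_per_second):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.buckets = {}

    def allow(self, tenant_id, now, cost=1.0):
        if tenant_id not in self.buckets:
            self.buckets[tenant_id] = TokenBucket(self.capacity, self.refill_per_second, now)
        return self.buckets[tenant_id].allow(now, cost)


limiter = TenantLimiter(capacity=2, refill_per_second=1.0)

# Tenant "a" sends three requests at t=0; tenant "b" sends one.
burst = [limiter.allow("a", now=0.0) for _ in range(3)]
other_tenant = limiter.allow("b", now=0.0)
after_refill = limiter.allow("a", now=1.0)

print("tenant a burst:", burst)
print("tenant b unaffected:", other_tenant)
print("tenant a after 1 second:", after_refill)
```

The `cost` parameter lets you charge requests by estimated token count instead of a flat one per call, which matches LLM workloads better, since a long generation consumes far more capacity than a short one.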

Interview Insight: Thinking At Scale

In interviews, scaling discussions are where candidates often stand out. When you can explain how your system evolves from a single instance to a distributed architecture, you demonstrate a deep understanding of System Design principles.

This ability to think at scale is essential for designing modern AI systems.

Handling Real-World Constraints And Trade-Offs

At this stage, you have learned multiple optimization techniques, but real-world systems rarely allow you to optimize everything perfectly. You need to make decisions based on constraints, which is where engineering judgment becomes critical.

Balancing Latency, Cost, And Quality

One of the most important challenges in LLM inference optimization is balancing latency, cost, and output quality. Improving one aspect often impacts the others, which means you need to prioritize based on your application’s needs.

| Constraint | What You Gain | What You Sacrifice |
|---|---|---|
| Lower Latency | Faster responses | Higher compute cost |
| Lower Cost | Reduced spending | Potential latency increase |
| Higher Quality | Better outputs | Increased resource usage |

Understanding these trade-offs helps you design systems that align with both technical and business goals.

Adapting To Different Use Cases

Different applications require different optimization strategies. For example, a real-time assistant prioritizes low latency, while a batch processing system prioritizes cost efficiency.

When you tailor your approach to the specific use case, your design becomes more practical and effective. This adaptability is something interviewers value highly.

Dealing With Unpredictable Workloads

Real-world systems often experience unpredictable traffic patterns, which makes optimization more challenging. You need to design systems that can handle spikes in demand without significantly increasing costs.

This often involves combining multiple strategies such as batching, scaling, and caching. By doing so, you create a system that is both resilient and efficient.

Operational Constraints And Monitoring

Optimization does not stop after deployment, as systems need to be continuously monitored and adjusted. Metrics such as latency, throughput, and cost must be tracked to ensure that your system remains efficient over time.

When you mention monitoring and iteration in interviews, it shows that you understand the full lifecycle of a system rather than just its initial design.

How To Answer LLM Inference Optimization Questions In Interviews

Understanding concepts is important, but being able to communicate them effectively is what ultimately determines your success in interviews. This section focuses on how to structure your answers in a way that demonstrates clarity, depth, and practical thinking.

A Structured Approach To Answering Questions

When faced with an inference optimization question, you should begin by understanding the problem and defining the requirements. This includes identifying the expected traffic, latency constraints, and cost considerations.

Once you have this context, you can propose a solution and explain how different optimization techniques address specific challenges. This structured approach makes your answer more coherent and convincing.

Identifying Bottlenecks Before Optimizing

A common mistake is jumping straight into optimization techniques without identifying the bottlenecks. Instead, you should analyze the system to determine where the main inefficiencies lie.

For example, the bottleneck might be in model execution, memory usage, or request handling. By addressing the root cause, you demonstrate a thoughtful and systematic approach.

Combining Multiple Optimization Techniques

In real systems, no single technique is sufficient to achieve optimal performance. You need to combine multiple strategies, such as batching, caching, and hardware acceleration, to achieve the desired results.

When you explain how these techniques work together, it shows that you understand the system as a whole rather than focusing on isolated components.

Communicating Trade-Offs Clearly

One of the most important aspects of your answer is how well you communicate trade-offs. Interviewers are less interested in perfect solutions and more interested in how you think about compromises.

When you clearly explain why you chose a particular approach and what trade-offs it involves, you demonstrate engineering maturity and decision-making ability.

Using Structured Prep Resources Effectively

Use Grokking the System Design Interview on Educative to learn curated patterns and practice full System Design problems step by step. It’s one of the most effective resources for building repeatable System Design intuition.


Final Thoughts

LLM inference optimization is not just a technical topic but a reflection of how you think as an engineer. It requires you to balance performance, cost, and scalability while making decisions that align with real-world constraints.

As you continue practicing System Design, you will find that these concepts become second nature. Instead of focusing on individual techniques, you begin to think in terms of systems, trade-offs, and long-term efficiency.

If you approach interviews with this mindset, your answers will naturally stand out. You will not only demonstrate technical knowledge but also show that you can design systems that are practical, efficient, and ready for production.