If you have been around software engineering long enough, you know that System Design trends change faster than programming languages. What does not change is the need for strong fundamentals. In 2026, distributed systems are more cloud-native, AI-assisted, and globally scaled than ever, yet every successful architecture is still built on the same System Design building blocks.

Whether you are preparing for System Design interviews, building production-grade systems, or reviewing an existing architecture, understanding these building blocks helps you reason clearly under constraints. Tools evolve, frameworks come and go, but the principles behind scalability, reliability, and maintainability remain consistent.

This guide breaks down System Design building blocks in a structured, modern way. Instead of quick definitions, you will see how each component fits into real systems, what trade-offs matter today, and how these blocks interact when systems scale.

What Are System Design Building Blocks?

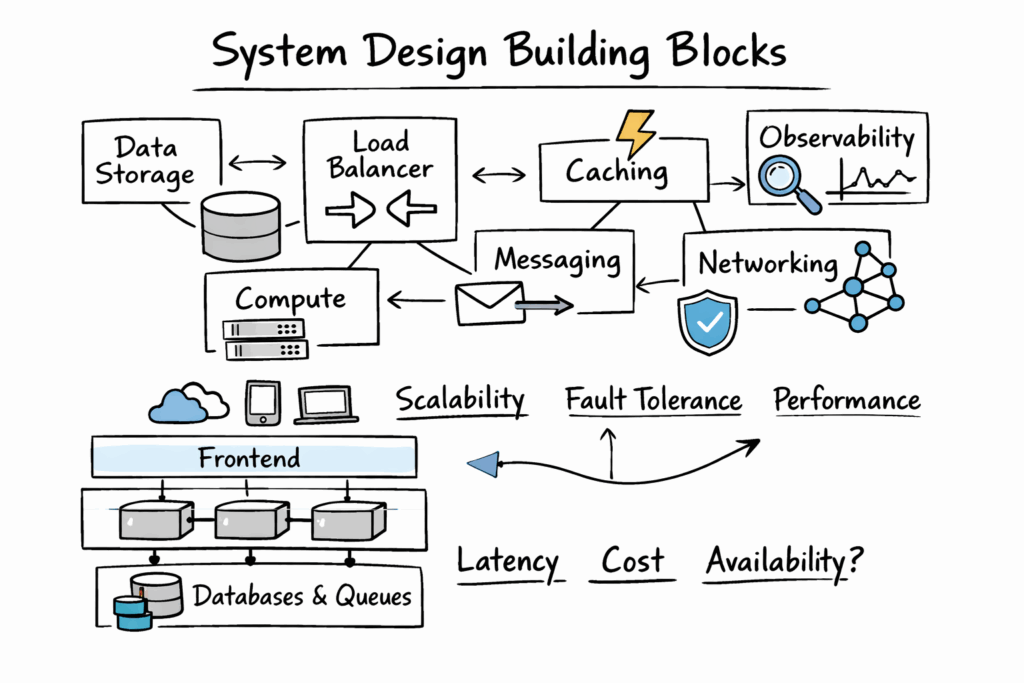

System Design building blocks are the foundational components and concepts used to construct large-scale software systems. They are worth learning early, especially if you are approaching System Design from scratch. Each block solves a specific class of problems, such as data storage, communication, scalability, fault tolerance, or performance optimization.

In modern architectures, these blocks are rarely used in isolation. A production system is usually a composition of multiple building blocks, layered together based on functional requirements and non-functional constraints like latency, cost, and availability.

To ground the discussion, the table below summarizes the most common System Design building blocks you will encounter in 2026.

| Building Block Category | Primary Purpose | Typical Examples |
| --- | --- | --- |
| Load Distribution | Traffic management | Load balancers, API gateways |
| Compute | Business logic execution | Microservices, serverless functions |
| Data Storage | Persistent data management | SQL, NoSQL, object storage |
| Caching | Performance optimization | Redis, CDN caches |
| Messaging | Asynchronous communication | Queues, event streams |
| Networking | Service communication | REST, gRPC, service mesh |
| Reliability | Fault tolerance | Replication, retries, circuit breakers |
| Observability | System visibility | Logging, metrics, tracing |

Each of these categories plays a distinct role in System Design fundamentals, and understanding when and how to use them is what separates solid designs from fragile ones.

Load Balancing As A Foundational Block

At scale, no system can rely on a single server. Load balancing is one of the earliest System Design building blocks you encounter when traffic grows beyond a single instance.

A load balancer distributes incoming requests across multiple backend services. In 2026, load balancing is rarely just round-robin. Modern systems use intelligent routing based on latency, geographic location, and service health.
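As a rough illustration of health- and latency-aware routing, the sketch below picks the healthy backend with the lowest smoothed latency. The names `Backend` and `LatencyAwareBalancer`, and the smoothing factor `alpha`, are hypothetical choices for this example and not the API of any real load balancer. Geographic routing is left out to keep it short.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool = True
    avg_latency_ms: float = 0.0  # smoothed latency (illustrative field)


class LatencyAwareBalancer:
    """Hypothetical balancer: route to the healthy backend with the lowest latency."""

    def __init__(self, backends, alpha=0.2):
        self.backends = {b.name: b for b in backends}
        self.alpha = alpha  # weight of the newest latency sample (assumed value)

    def record(self, name, latency_ms):
        # Exponentially weighted moving average of observed latency.
        b = self.backends[name]
        b.avg_latency_ms = (1 - self.alpha) * b.avg_latency_ms + self.alpha * latency_ms

    def mark(self, name, healthy):
        # Called by whatever health-check mechanism the system uses.
        self.backends[name].healthy = healthy

    def pick(self):
        candidates = [b for b in self.backends.values() if b.healthy]
        if not candidates:
            raise RuntimeError("no healthy backends available")
        return min(candidates, key=lambda b: b.avg_latency_ms)
```

A caller would invoke `pick()` per request, then feed the observed latency back through `record()`. Real balancers usually add connection counts and slow-start logic on top of this kind of signal.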

Load balancers today often live at multiple layers. Edge-level load balancing happens at CDNs or cloud front doors, while internal load balancing routes traffic between microservices. API gateways often combine request routing, authentication, and throttling, making them an extension of the load balancing layer.
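Throttling at a gateway is commonly explained with a token bucket, so a minimal sketch of that idea follows. The class name `TokenBucket` and its parameters are assumptions made for illustration. Production gateways typically keep this state in shared storage rather than in process memory.

```python
import time


class TokenBucket:
    """Illustrative token-bucket limiter; not tied to any specific gateway product."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec      # tokens added per second
        self.capacity = capacity      # maximum burst size
        self.clock = clock            # injectable clock, handy for tests
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self, cost=1):
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller would typically respond with HTTP 429
```

The capacity controls how large a burst is tolerated, while the rate sets the sustained throughput. Tuning the two separately is the main reason this shape is popular.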

From a design perspective, load balancing directly impacts availability and scalability. Poor load-balancing strategies can lead to hot spots, cascading failures, or uneven resource utilization. That is why it remains a critical System Design building block even as infrastructure becomes more abstracted.

Compute Layer: Where Business Logic Lives

The compute layer is where your system actually does work. This includes services, functions, background workers, and scheduled jobs. In 2026, this layer is more diverse than ever, ranging from containerized microservices to fully serverless architectures.

Microservices remain popular because they allow independent scaling and deployment. However, they introduce complexity around service communication and data consistency. Serverless compute simplifies infrastructure management but shifts complexity into execution limits, cold starts, and observability challenges.

From a System Design building blocks perspective, compute is never evaluated alone. It is always considered in relation to data access patterns, traffic spikes, and operational constraints. Choosing the wrong compute model can create hidden bottlenecks even if everything looks fine on paper.

A useful way to compare compute approaches is shown below.

| Compute Model | Strengths | Trade-Offs |
| --- | --- | --- |
| Monolith | Simple deployment, low latency | Limited scalability, tight coupling |
| Microservices | Independent scaling, flexibility | Operational complexity |
| Serverless | Minimal ops overhead, auto-scaling | Cold starts, execution limits |

Understanding these trade-offs is essential when assembling System Design building blocks into a coherent architecture.

Data Storage As A Core Building Block

Data storage is one of the most impactful System Design building blocks because it influences performance, scalability, and consistency across the entire system.

In 2026, data storage is rarely a single database. Systems often use polyglot persistence, combining relational databases for transactional data, NoSQL stores for scale, and object storage for large blobs.

Choosing a data store is not about popularity. It is about access patterns, consistency requirements, and failure tolerance. A relational database excels at complex queries and strong consistency. Many NoSQL databases are built for high write throughput and horizontal scaling, often in exchange for weaker consistency guarantees or less flexible queries. Object storage optimizes cost and durability for unstructured data.

The table below highlights common storage options and when they fit best.

| Storage Type | Best Use Case | Key Characteristics |
| --- | --- | --- |
| Relational DB | Transactions, joins | ACID, structured schema |
| NoSQL DB | High-scale workloads | Flexible schema, horizontal scaling |
| Object Storage | Media, backups | High durability, low cost |

As a System Design building block, storage decisions are often hard to reverse without a major migration effort. That is why experienced engineers spend disproportionate time validating data assumptions early.

Caching For Performance And Cost Control

Caching is one of the most misunderstood System Design building blocks. It is often introduced as a performance optimization, but in modern systems, it is equally important for cost control.

Caches reduce load on databases and downstream services. In 2026, caching happens at multiple layers, including browser caches, CDNs, edge caches, and in-memory data stores like Redis.

However, caching introduces complexity around data freshness and invalidation. Designing an effective caching strategy requires understanding which data can tolerate staleness and which cannot. Over-caching sensitive data can lead to correctness issues, while under-caching leads to unnecessary infrastructure costs.

Caching is most effective when paired with clear data ownership and expiration strategies. That is why it remains a key System Design building block rather than a last-minute optimization.
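To make expiration and invalidation concrete, here is a small cache-aside sketch with a time-to-live. `TTLCache`, `get_or_load`, and the `loader` callback are hypothetical names for this example. A real deployment would more likely delegate storage to something like Redis, but the control flow is similar.

```python
import time


class TTLCache:
    """Illustrative in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable clock so expiry can be tested
        self._store = {}    # key -> (value, expires_at)

    def get_or_load(self, key, loader):
        entry = self._store.get(key)
        now = self.clock()
        if entry is not None and entry[1] > now:
            return entry[0]  # fresh hit: the backing store is not touched
        value = loader(key)  # miss or expired: read from the source of truth
        self._store[key] = (value, now + self.ttl)
        return value

    def invalidate(self, key):
        # Call on writes so readers do not wait for the TTL to see new data.
        self._store.pop(key, None)
```

The TTL bounds how stale a value can get, and `invalidate` covers data that cannot tolerate even that much staleness. Choosing between the two per key is the ownership decision described above.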

Messaging And Asynchronous Communication

As systems scale, synchronous communication becomes a liability. Messaging is a System Design building block that enables decoupling between producers and consumers.

Queues, pub-sub systems, and event streams allow systems to handle spikes gracefully and recover from partial failures. In 2026, event-driven architectures are increasingly common, especially in systems that integrate AI workflows, analytics, and third-party services.

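Messaging systems introduce trade-offs around delivery guarantees, ordering, and duplication. Engineers must choose between at-most-once, at-least-once, or exactly-once semantics depending on the use case. In practice, exactly-once behavior is often approximated by combining at-least-once delivery with idempotent consumers.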
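With at-least-once delivery, the same message can arrive more than once, so consumers usually need to be idempotent. The sketch below assumes each message carries a unique `id` field. That field, the `amount` field, and the class name `IdempotentConsumer` are all hypothetical.

```python
class IdempotentConsumer:
    """Illustrative consumer that applies each message's effect only once."""

    def __init__(self):
        # Assumption: in production this set would live in durable storage,
        # updated atomically with the side effect.
        self.processed_ids = set()
        self.balance = 0

    def handle(self, message):
        msg_id = message["id"]
        if msg_id in self.processed_ids:
            return False  # duplicate delivery: skip the side effect
        self.balance += message["amount"]
        self.processed_ids.add(msg_id)
        return True


consumer = IdempotentConsumer()
payment = {"id": "msg-1", "amount": 25}
consumer.handle(payment)
consumer.handle(payment)  # redelivery after a broker retry
print(consumer.balance)   # 25
```

The in-memory set is the weakest part of this sketch. If the process crashes between applying the effect and recording the ID, the guarantee is lost, which is why the two are normally committed together.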

The table below shows how messaging patterns differ in practice.

| Pattern | Typical Use | Key Trade-Off |
| --- | --- | --- |
| Queue | Task processing | Ordering, retries |
| Pub-Sub | Fan-out events | Event duplication |
| Stream | Real-time analytics | Storage and replay costs |

Messaging as a System Design building block enables scalability, but only when its operational costs are well understood.

Networking And Communication Protocols

Networking is often invisible until it breaks. As a System Design building block, it defines how services talk to each other and how failures propagate.

REST remains widely used, but gRPC and asynchronous protocols are increasingly preferred for internal service communication due to performance and schema enforcement. Service meshes add observability and traffic control but increase operational complexity.

In 2026, zero-trust networking and encrypted service-to-service communication are standard expectations rather than advanced features. Network design decisions directly influence latency, security, and debuggability.

Reliability And Fault Tolerance

No system is perfect, so resilience is a mandatory System Design building block. Reliability mechanisms include replication, retries, timeouts, circuit breakers, and graceful degradation.
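Retries are the simplest of these mechanisms to sketch. The helper below retries a failing call with capped exponential backoff and full jitter. The jitter strategy, the default values, and the name `retry_with_backoff` are assumptions chosen for illustration rather than anything prescribed here.

```python
import random
import time


def retry_with_backoff(operation, max_attempts=4, base_delay=0.1, max_delay=2.0,
                       sleep=time.sleep, rng=random.random):
    """Call operation(), retrying on any exception with capped exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Delay ceiling doubles each attempt, up to max_delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter (assumed strategy): sleep a random fraction of the
            # ceiling so many clients do not retry in lockstep.
            sleep(rng() * cap)
```

Retrying is only safe when the operation is idempotent or the failure is known to have happened before any side effect. A real version would also catch a narrower set of exceptions than `Exception`.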

The goal is not to prevent failure, but to control how failure impacts users. In large systems, partial failure is normal. A well-designed system isolates failures instead of amplifying them.
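A circuit breaker is one common way to isolate a failing dependency instead of amplifying its failure. The following is a simplified sketch with hypothetical names (`CircuitBreaker`, `CircuitOpenError`) and assumed defaults. It is not the behavior of any particular resilience library.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_timeout = reset_timeout          # seconds to wait before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, operation):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("failing fast; dependency presumed down")
            # Timeout elapsed: half-open, so one trial call is allowed through.
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

While the circuit is open, callers fail in microseconds instead of waiting on timeouts, which frees threads and connections for requests that can still succeed. This version is not thread-safe, and a production one would need a lock around the state changes.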

Modern architectures often rely on redundancy across regions and availability zones. While cloud platforms simplify this, engineers must still design for data consistency, failover behavior, and recovery time objectives.

Observability As A First-Class Building Block

In 2026, observability is no longer optional. Logging, metrics, and tracing form a System Design building block that enables engineers to understand system behavior in real time.

Without observability, scaling systems becomes guesswork. Distributed tracing helps identify bottlenecks across services, while metrics provide early warning signals for saturation or failure.
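As a minimal sketch of what metrics collection involves, the class below records per-operation latency and error counts and reports a nearest-rank percentile. `Metrics`, `timed`, and `percentile` are hypothetical names. Real systems would normally export to a metrics backend and use histograms rather than keeping raw samples.

```python
import math
import time
from collections import defaultdict


class Metrics:
    """Illustrative in-process recorder for latency samples and error counts."""

    def __init__(self, clock=time.perf_counter):
        self.clock = clock
        self.latencies = defaultdict(list)  # operation name -> list of seconds
        self.errors = defaultdict(int)      # operation name -> failure count

    def timed(self, name, fn, *args, **kwargs):
        start = self.clock()
        try:
            return fn(*args, **kwargs)
        except Exception:
            self.errors[name] += 1
            raise
        finally:
            # Record latency for successes and failures alike.
            self.latencies[name].append(self.clock() - start)

    def percentile(self, name, pct):
        # Nearest-rank percentile over the recorded samples.
        samples = sorted(self.latencies[name])
        if not samples:
            return None
        rank = max(1, math.ceil(pct * len(samples) / 100))
        return samples[rank - 1]
```

Tail percentiles such as p95 or p99 tend to be more useful early warning signals than averages, since saturation often shows up in the slowest requests first.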

Well-designed observability systems balance detail with cost. Over-instrumentation can be as harmful as under-instrumentation, especially at scale.

How System Design Building Blocks Work Together

The real challenge in System Design is not understanding individual building blocks, but composing them effectively. A caching layer without observability hides failures. At-least-once messaging without idempotent consumers risks duplicated side effects and corrupted data. Load balancing without health checks routes traffic to dead instances.

Strong System Design emerges when building blocks reinforce each other. Compute scales behind load balancers. Caches protect databases. Messaging absorbs spikes. Observability exposes weak points before users notice.

This holistic view is what interviewers and real-world systems reward.

System Design Building Blocks In Interviews Versus Production

In interviews, System Design building blocks are evaluated for clarity and reasoning. You are expected to articulate trade-offs and justify choices under constraints.

In production, the same building blocks are judged by their operational impact. Poor choices increase on-call load, inflate costs, and slow development velocity.

The difference is not the blocks themselves, but how deeply you understand their interactions.

Conclusion

System Design building blocks remain the backbone of scalable software systems in 2026. While tooling evolves, the underlying concepts of load distribution, compute, storage, caching, messaging, networking, reliability, and observability remain unchanged.

Engineers who master these building blocks gain a durable skill set that applies across industries, cloud providers, and architectures. Whether you are designing a startup MVP or a global platform, these fundamentals guide every meaningful technical decision.

If you focus on understanding how these building blocks interact, rather than memorizing patterns, you will design systems that scale not just technically, but operationally and organizationally as well.