Artificial intelligence has shifted from being a niche specialization to becoming a core part of modern software systems, which means System Design interviews increasingly include AI-driven scenarios. If you are preparing for senior backend, ML engineer, or platform roles, you will likely encounter AI System Design interview questions that test not only your architectural thinking but also your understanding of data, modeling, scalability, and production constraints.

Preparing for these interviews requires more than revisiting traditional distributed systems concepts because AI introduces new layers of complexity, such as model training pipelines, inference optimization, and continuous learning workflows.

Candidates often struggle not because they lack AI knowledge but because they fail to structure their answers effectively. Interviewers are not only assessing whether you understand machine learning but also whether you can design reliable, scalable, cost-efficient, and maintainable AI systems.

This blog walks you through the most common AI System Design interview questions, explains what interviewers expect, and provides architectural frameworks to help you answer with clarity and confidence.

Why AI Changes The Nature Of System Design Interviews

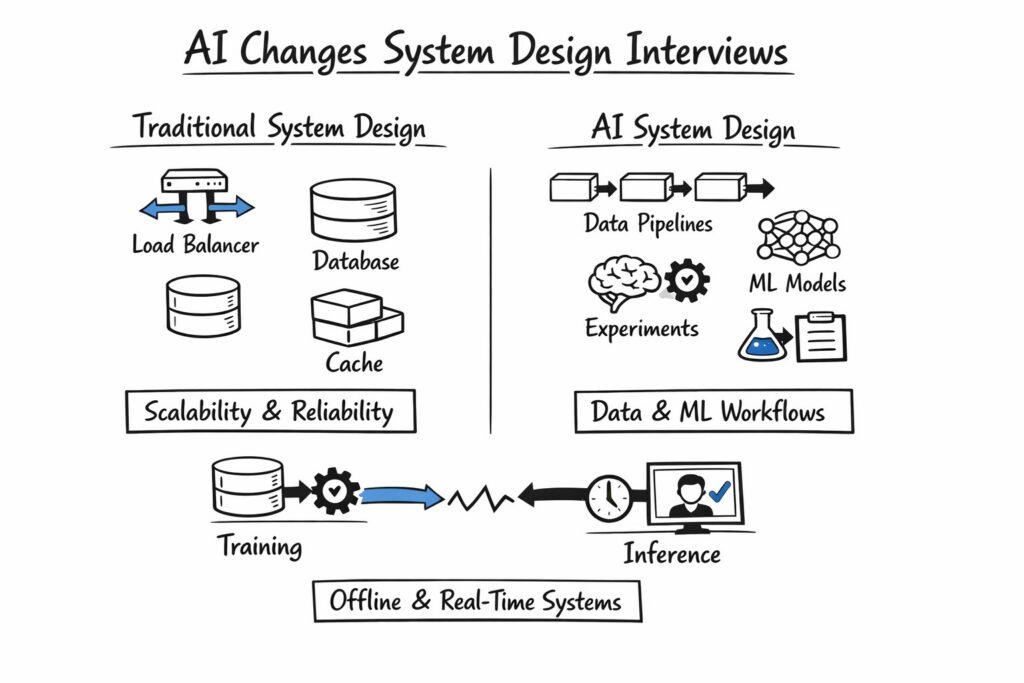

Traditional System Design interviews focus on scalability, reliability, and trade-offs across components such as load balancers, databases, and caching layers.

When AI enters the picture, the system gains additional dimensions, including data pipelines, model lifecycle management, and experimentation frameworks. As a result, AI System Design interview questions often require candidates to reason across both data infrastructure and machine learning workflows.

An AI-powered system typically includes offline and online components that interact in subtle ways. For example, training pipelines might operate asynchronously on massive datasets, while inference systems must deliver low-latency predictions to end users in real time. Interviewers expect you to understand how these components interact and how failures in one layer can cascade across the system.

The table below summarizes the key differences between traditional System Design and AI-centric System Design, which often form the foundation of AI System Design interview questions.

| Dimension | Traditional System Design | AI-Centric System Design |
| --- | --- | --- |
| Core Focus | Scalability And Reliability | Data, Models, And Scalability |
| Data Usage | Transactional Data | Training And Inference Data |
| Deployment | Stateless Or Stateful Services | Model Versioning And Rollouts |
| Evaluation | Latency And Throughput | Accuracy, Drift, And Latency |
| Feedback Loop | Optional | Continuous Learning And Retraining |

Understanding these differences helps you structure your answer in a way that demonstrates awareness of AI-specific trade-offs rather than treating the problem as a standard distributed system.

How To Structure Answers To AI System Design Interview Questions

Before diving into example scenarios, it is essential to understand how to structure your response. Interviewers evaluate clarity of thought, prioritization, and your ability to navigate ambiguity. AI System Design interview questions are rarely about one correct answer; they are about how you reason.

Start by clarifying the problem scope, target users, and success metrics because AI systems often optimize for metrics such as precision, recall, or engagement. Once the requirements are clear, divide the architecture into high-level components such as data ingestion, data storage, feature engineering, model training, model serving, and monitoring. This layered approach demonstrates systematic thinking and reduces the risk of overlooking critical elements.

Finally, discuss trade-offs in latency, cost, scalability, and model performance, because AI systems are rarely optimized across all dimensions simultaneously. Interviewers are particularly impressed when candidates proactively mention concerns such as data drift, model versioning, and A/B testing strategies without being prompted.

Designing A Recommendation System

One of the most common AI System Design interview questions revolves around building a recommendation engine for an e-commerce or streaming platform. This question tests your ability to design both data pipelines and real-time serving infrastructure.

Start by clarifying whether the system is expected to provide batch recommendations generated offline or real-time personalized recommendations based on user behavior. Batch recommendations are easier to scale because they are precomputed, typically by nightly training and scoring jobs, while real-time recommendations require feature stores and low-latency inference services. The complexity of the design depends heavily on latency constraints and personalization depth.

You should describe the architecture across two primary workflows: training and inference. The training workflow collects user interaction data, processes it through feature engineering pipelines, trains collaborative filtering or deep learning models, and stores model artifacts in a registry. The inference workflow retrieves features from a feature store, loads the appropriate model version, and serves recommendations via a low-latency API.

The table below outlines a simplified architecture for a recommendation system.

| Component | Role In Training | Role In Inference |
| --- | --- | --- |
| Data Pipeline | Collects And Cleans User Events | Streams Real-Time Events |
| Feature Store | Stores Engineered Features | Serves Features For Prediction |
| Model Training Service | Trains And Validates Models | Not Used |
| Model Registry | Stores Versioned Models | Provides The Latest Stable Model |
| Inference Service | Not Used | Generates Recommendations |

When answering AI System Design interview questions about recommendation systems, you should also address cold start problems, scaling strategies, and monitoring metrics such as click-through rate and model drift.
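
To make the cold start point concrete, here is a minimal sketch of one common mitigation: falling back to popular items when a user has little or no history. The function name `recommend`, the `user_scores` mapping, and the `popular_items` list are all hypothetical names chosen for illustration, and a real system would pull these from a model and a feature store rather than in-memory dictionaries.

```python
def recommend(user_id, user_scores, popular_items, k=3):
    """Return up to k item ids, personalized first, popularity as a fallback.

    user_scores: dict of user_id -> {item_id: score} (hypothetical model output).
    popular_items: item ids ordered from most to least popular.
    """
    personalized = user_scores.get(user_id, {})
    # Rank whatever personalized scores exist, highest score first.
    ranked = sorted(personalized, key=personalized.get, reverse=True)
    # Cold start: fill the remaining slots with popular items not already chosen.
    for item in popular_items:
        if len(ranked) >= k:
            break
        if item not in ranked:
            ranked.append(item)
    return ranked[:k]


scores = {"u1": {"a": 0.9, "b": 0.2}}
popular = ["c", "a", "d"]
known_user = recommend("u1", scores, popular)
new_user = recommend("brand-new-user", scores, popular)
```

A known user gets their own ranking padded with popular items, while a brand-new user gets the popularity list unchanged. In an interview you can then discuss richer alternatives, such as content-based features or onboarding questions.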

Designing A Real-Time Fraud Detection System

Fraud detection is another frequently asked scenario in AI System Design interview questions because it combines strict latency requirements with continuous model updates. The system must score transactions within milliseconds while keeping both false positives (legitimate payments that get blocked) and false negatives (fraud that slips through) low.

Begin by clarifying throughput and latency expectations, as these directly influence infrastructure choices. A real-time fraud detection system typically requires streaming ingestion pipelines using technologies like Kafka or Pub/Sub, feature extraction layers that operate within tight latency budgets, and an inference engine optimized for low response times. You should also discuss fallback mechanisms in case the AI model service becomes unavailable.

Fraud detection systems often involve ensemble models and rule-based heuristics working together. Mentioning hybrid architectures demonstrates maturity because most production systems rely on deterministic rules alongside machine learning to manage risk. Additionally, discuss how feedback loops from confirmed fraud cases are incorporated into retraining cycles.
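
The hybrid approach and the fallback mechanism described above can be sketched in a few lines. Everything here is an assumption made for illustration: the `Transaction` fields, the rule thresholds, the `0.8` score cutoff, and the choice to send transactions to manual review when the model is unavailable. A real system would tune these against its own risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    amount: float
    country: str
    home_country: str


def rule_verdict(txn):
    """Deterministic rules that run before the model (hypothetical thresholds)."""
    if txn.amount > 10_000:
        return "block"
    if txn.country != txn.home_country and txn.amount > 1_000:
        return "review"
    return None  # no rule fired, defer to the model


def decide(txn, model, threshold=0.8):
    """Combine rules with a model score; degrade safely if the model fails."""
    verdict = rule_verdict(txn)
    if verdict is not None:
        return verdict
    try:
        fraud_probability = model(txn)
    except Exception:
        # Fallback assumption: with no model score, route to manual review
        # instead of silently allowing or blocking the transaction.
        return "review"
    return "block" if fraud_probability >= threshold else "allow"
```

Here `model` is any callable that returns a fraud probability, which keeps the sketch independent of a specific serving framework. Whether the fallback should be "review", "allow", or rules-only is a product decision worth stating out loud in the interview.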

Designing A Large Language Model-Powered Chat System

With the rise of generative AI, many AI System Design interview questions now involve building chat systems powered by large language models. These systems introduce concerns around prompt management, cost control, latency optimization, and safety moderation.

You should clarify whether the system uses third-party APIs or self-hosted models because the architecture differs significantly. When using external APIs, the system focuses on prompt orchestration, caching, and rate limiting. When self-hosting, you must address GPU allocation, model sharding, and autoscaling strategies.

A robust LLM-based chat architecture typically includes user session management, prompt engineering services, retrieval augmentation pipelines, inference APIs, and content moderation layers. In interviews, it is beneficial to explain how you would integrate retrieval-augmented generation to improve factual accuracy and reduce hallucinations.

The table below summarizes the major architectural components of an LLM chat system.

| Layer | Purpose | Key Considerations |
| --- | --- | --- |
| API Gateway | Handles User Requests | Rate Limiting And Authentication |
| Session Manager | Tracks Conversation State | Context Window Management |
| Retrieval Service | Fetches Relevant Documents | Vector Search And Latency |
| LLM Inference Service | Generates Responses | GPU Utilization And Cost |
| Moderation Service | Filters Unsafe Content | Compliance And Safety |

Candidates who explicitly mention token limits, caching strategies, and cost monitoring stand out in AI System Design interview questions related to generative AI systems.
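
Token limits and caching are easier to discuss with a small example in hand. The sketch below trims conversation history to a token budget and caches responses for repeated prompts. It rests on several assumptions: `estimate_tokens` is a crude whitespace count standing in for a real tokenizer, `ResponseCache` is a hypothetical in-memory class, and `generate` is any callable you supply that calls the model.

```python
import hashlib


def estimate_tokens(text):
    """Rough token estimate by whitespace split (a real tokenizer will differ)."""
    return len(text.split())


def trim_history(messages, max_tokens):
    """Keep the most recent messages whose estimated tokens fit the budget."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


class ResponseCache:
    """In-memory cache keyed by a hash of the normalized prompt."""

    def __init__(self):
        self._store = {}

    def _key(self, prompt):
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_generate(self, prompt, generate):
        key = self._key(prompt)
        if key not in self._store:
            self._store[key] = generate(prompt)  # only pay for a cache miss
        return self._store[key]
```

Dropping the oldest messages is the simplest policy. Summarizing older turns or always pinning the system prompt are common refinements you can mention as follow-ups.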

Data Engineering Considerations In AI System Design

Many candidates focus heavily on models but underestimate the importance of data engineering, which is often the backbone of AI systems. AI System Design interview questions frequently probe your understanding of how data is collected, cleaned, transformed, and stored at scale.

You should discuss batch versus streaming pipelines and the role of data validation frameworks to ensure data quality. Interviewers appreciate when candidates proactively mention schema validation, anomaly detection, and version-controlled datasets. Without reliable data pipelines, even the most sophisticated model becomes unreliable.

In production AI systems, maintaining consistency between training and inference features is critical. Feature stores help enforce this consistency, and mentioning them signals practical experience with real-world ML infrastructure.
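
A small sketch can show both ideas from this section: validating incoming records against a schema, and computing features through one shared function so that training and inference cannot drift apart. The schema fields, the `build_features` function, and the `100.0` threshold are hypothetical examples, not a reference to any particular feature store product.

```python
# Hypothetical schema: field name -> expected Python type.
EXPECTED_SCHEMA = {"user_id": str, "amount": float, "num_items": int}


def validate(record):
    """Return a list of schema violations (an empty list means the record is valid)."""
    errors = []
    for field, expected_type in EXPECTED_SCHEMA.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(f"bad type for {field}: expected {expected_type.__name__}")
    return errors


def build_features(record):
    """Single source of truth for features, called by both training and serving."""
    return {
        "avg_item_price": record["amount"] / max(record["num_items"], 1),
        "is_large_order": record["amount"] > 100.0,
    }


order = {"user_id": "u1", "amount": 150.0, "num_items": 3}
problems = validate(order)
features = build_features(order)
```

Because the batch training job and the online service both import `build_features`, a change to the feature logic reaches both paths at once. That is the same guarantee a feature store provides at larger scale.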

Model Lifecycle Management And Deployment Strategies

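Another critical dimension of AI System Design interview questions involves managing models in production. Unlike traditional services, whose behavior changes only when the code changes, AI models can degrade over time with no deployment at all, because user behavior or external conditions shift away from the data the model was trained on.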

You should explain model versioning practices, such as storing artifacts in registries and enabling rollback mechanisms. Canary deployments and shadow testing strategies are also valuable discussion points because they reduce risk when releasing new model versions. Monitoring metrics such as prediction confidence, accuracy over time, and drift detection mechanisms shows that you understand the operational challenges of AI systems.
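
As a rough illustration of versioning and rollback, the sketch below keeps registered artifacts by version and tracks the order in which versions were promoted. `ModelRegistry` and its methods are hypothetical names for this example. Production registries add metadata, access control, and durable storage.

```python
class ModelRegistry:
    """Toy in-memory registry with promotion history for rollback."""

    def __init__(self):
        self._artifacts = {}  # version -> artifact (any object)
        self._promoted = []   # promoted versions, newest last

    def register(self, version, artifact):
        if version in self._artifacts:
            raise ValueError(f"version already registered: {version}")
        self._artifacts[version] = artifact

    def promote(self, version):
        if version not in self._artifacts:
            raise KeyError(version)
        self._promoted.append(version)

    def rollback(self):
        """Drop the current stable version and return the previous one."""
        if len(self._promoted) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._promoted.pop()
        return self._promoted[-1]

    @property
    def stable(self):
        return self._promoted[-1] if self._promoted else None

    def get(self, version):
        return self._artifacts[version]
```

Artifacts are immutable once registered, so a rollback only moves a pointer. This is what makes reverting a bad model fast compared with retraining.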

The following table summarizes common deployment strategies for AI models.

| Deployment Strategy | Description | Risk Level |
| --- | --- | --- |
| Blue-Green Deployment | Switch Entire Traffic To New Model | Medium |
| Canary Release | Gradually Increase Traffic To New Model | Low |
| Shadow Deployment | Run New Model In Parallel Without Affecting Users | Very Low |

Discussing these strategies demonstrates that you think beyond model training and consider production reliability, which is essential in AI System Design interview questions.
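
One way to implement a canary release is to hash each user into a stable bucket and send only the lowest buckets to the new model. The sketch below assumes string user ids and two hypothetical version labels, `v1` and `v2`. The function name `route_model` is also just an illustration.

```python
import hashlib


def route_model(user_id, canary_percent, stable="v1", canary="v2"):
    """Pick a model version for a user based on a deterministic hash bucket."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return canary if bucket < canary_percent else stable
```

Because the bucket depends only on the user id, a user who sees the canary at 10 percent keeps seeing it as the rollout grows to 50 percent. That keeps the experience consistent and makes metrics easier to compare. Setting `canary_percent` back to 0 acts as an immediate rollback.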

Scalability And Cost Optimization In AI Systems

AI workloads can be significantly more resource-intensive than traditional backend systems, especially when training deep learning models or serving large-scale inference traffic. AI System Design interview questions often include follow-up prompts asking how your system scales and how you would control infrastructure costs.

You should describe horizontal scaling for stateless inference services and vertical scaling or distributed training strategies for compute-heavy tasks. Additionally, mention batching requests to optimize GPU utilization and implementing caching for repeated queries. Cost-awareness is particularly important in LLM-based systems where token usage directly impacts expenses.

Demonstrating awareness of trade-offs between latency and cost signals a mature understanding of production AI systems and distinguishes strong candidates in AI System Design interview questions.
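
A back-of-the-envelope cost model helps ground this discussion. The sketch below estimates monthly LLM spend from traffic and token counts, and shows simple request batching. All prices and traffic numbers are made-up placeholders for illustration, not real vendor pricing, and the function names are hypothetical.

```python
def monthly_llm_cost(requests_per_day, input_tokens, output_tokens,
                     price_in_per_1k, price_out_per_1k,
                     cache_hit_rate=0.0, days=30):
    """Estimate monthly spend, assuming cache hits cost nothing."""
    billable_requests = requests_per_day * days * (1 - cache_hit_rate)
    cost_per_request = (input_tokens / 1000 * price_in_per_1k
                        + output_tokens / 1000 * price_out_per_1k)
    return billable_requests * cost_per_request


def make_batches(requests, max_batch_size):
    """Group requests into fixed-size batches for one accelerator call each."""
    return [requests[i:i + max_batch_size]
            for i in range(0, len(requests), max_batch_size)]


# Placeholder numbers: 100k requests/day, 500 input and 200 output tokens each.
baseline = monthly_llm_cost(100_000, 500, 200, 0.001, 0.002)
with_cache = monthly_llm_cost(100_000, 500, 200, 0.001, 0.002, cache_hit_rate=0.3)
```

With these placeholder inputs the baseline comes to about 2,700 per month and a 30 percent cache hit rate brings it to about 1,890. Larger batches improve throughput per GPU but add queueing delay, which is the latency versus cost trade-off in miniature.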

Monitoring, Evaluation, And Continuous Improvement

AI systems require monitoring beyond traditional system health metrics. AI System Design interview questions frequently test whether you understand evaluation metrics, bias detection, and drift monitoring.

You should explain how to monitor both system-level metrics, such as latency and throughput, and model-level metrics, such as precision, recall, and F1 score. Additionally, discuss how user feedback loops feed into retraining pipelines to improve performance over time. Continuous evaluation pipelines are often automated and triggered by data drift detection thresholds.
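
The model-level metrics and the drift trigger mentioned above can be sketched with the standard library alone. The example computes precision, recall, and F1 for binary labels, plus the Population Stability Index (PSI), one common drift score. The bin count and the `eps` floor are assumptions, and alert thresholds for PSI are rule-of-thumb conventions that vary by team.

```python
import math


def precision_recall_f1(y_true, y_pred):
    """Binary classification metrics, where label 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]
drift = psi(baseline_scores, shifted_scores)
```

A retraining pipeline could compare `drift` against a configured threshold and start a new training run when it is exceeded. Identical distributions give a PSI of zero, and larger values mean a bigger shift.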

Mentioning experiment tracking tools and A/B testing frameworks further strengthens your response because it demonstrates familiarity with iterative improvement cycles common in AI products.
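
If the interviewer asks how you would decide that a new model wins an A/B test, a two-proportion z-test on click-through rate is one standard answer. The sketch below assumes independent users in each arm and sample sizes large enough for the normal approximation. The click and view counts are invented for illustration.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Return (z, two-sided p-value) for the difference in click-through rate."""
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented counts: control model A versus candidate model B.
z, p_value = two_proportion_z_test(1000, 10_000, 1100, 10_000)
```

A small p-value suggests the difference is unlikely to be noise, but you should still decide the sample size and the significance level before the experiment starts rather than after looking at the results.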

Common Mistakes Candidates Make

From mentoring dozens of engineers, I have noticed recurring mistakes when answering AI System Design interview questions. Many candidates dive deep into model architecture details without establishing system requirements or high-level architecture. Others neglect data governance, privacy considerations, or monitoring strategies.

Another common issue is ignoring fallback mechanisms in case the model fails or produces low-confidence predictions. Interviewers value resilience and user safety, especially in domains such as finance and healthcare. Addressing edge cases and failure modes makes your answer more complete and professional.

Final Thoughts On Preparing For AI System Design Interview Questions

Preparing for AI System Design interview questions requires integrating knowledge across distributed systems, data engineering, and machine learning operations. You must demonstrate not only that you understand how models work but also how they behave within large-scale, production-grade architectures.

Approach each question methodically by clarifying requirements, designing modular components, and discussing trade-offs across performance, cost, and reliability. If you consistently structure your answers, anticipate operational concerns, and articulate thoughtful trade-offs, you will stand out in interviews that increasingly emphasize AI-driven System Design expertise.

With deliberate practice and structured preparation, AI System Design interview questions become opportunities to showcase depth rather than obstacles to overcome.