At some point in your career, you stop being evaluated just on how well you can train a model or tune hyperparameters. Instead, interviews begin to probe how you think about systems, trade-offs, and real-world impact. This is where many experienced engineers feel a gap. You may have years of hands-on experience, but when you sit down for interviews, it is not always clear what “senior-level” expectations actually look like.

This confusion is common because the jump from mid-level to senior roles is not just about harder questions. It is about a shift in perspective. You are no longer expected to just solve problems; you are expected to define them, prioritize them, and explain your decisions clearly. That is why preparing for senior ML engineer interview questions requires a different approach than earlier stages of your career.

In this guide, you will walk through realistic interview questions along with how to approach them, what interviewers are evaluating, and how to frame your thinking like a senior engineer. The goal is not to memorize answers, but to understand how to respond in a way that reflects real-world experience and system-level thinking.

What makes senior ML interviews different

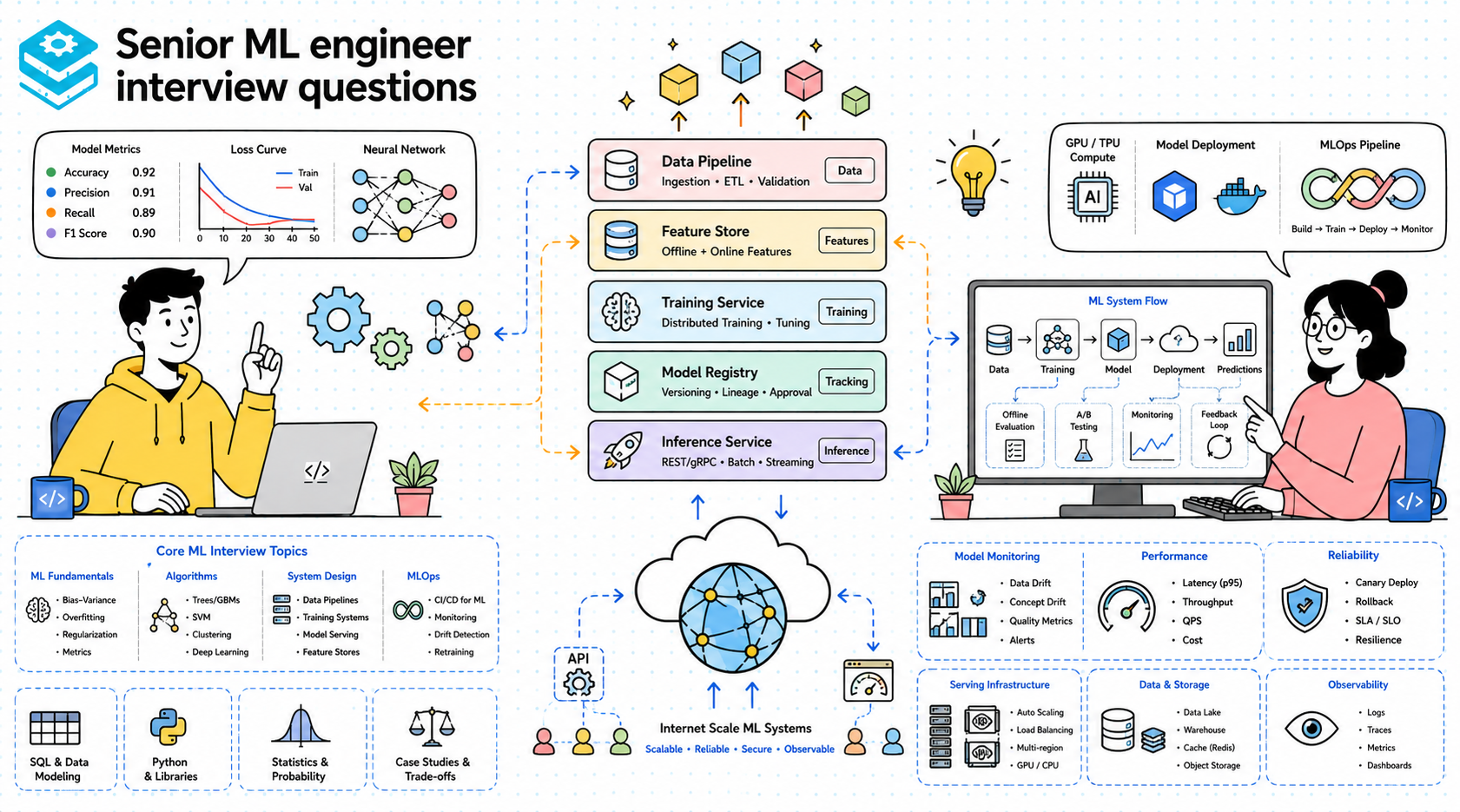

Senior ML interviews are less about isolated knowledge and more about integration. You are expected to combine machine learning with system design, data engineering, and production constraints. A junior candidate might be asked to implement logistic regression, but a senior candidate is asked how to deploy and monitor that model in a system handling millions of users.

Another key difference is the emphasis on trade-offs. At senior levels, there is rarely a single correct answer. Instead, interviewers want to see how you balance competing priorities such as latency versus accuracy, model complexity versus maintainability, and experimentation speed versus reliability. Your ability to articulate these trade-offs clearly often matters more than the specific solution you choose.

Communication also becomes central. You are expected to guide the discussion, clarify assumptions, and structure your answers logically. The interview becomes a collaborative conversation rather than a test. Strong candidates make their thinking visible, which allows interviewers to evaluate how they approach real engineering problems.

Machine learning fundamentals (applied, not theoretical)

Let’s start with foundational questions, but framed the way senior interviews expect them.

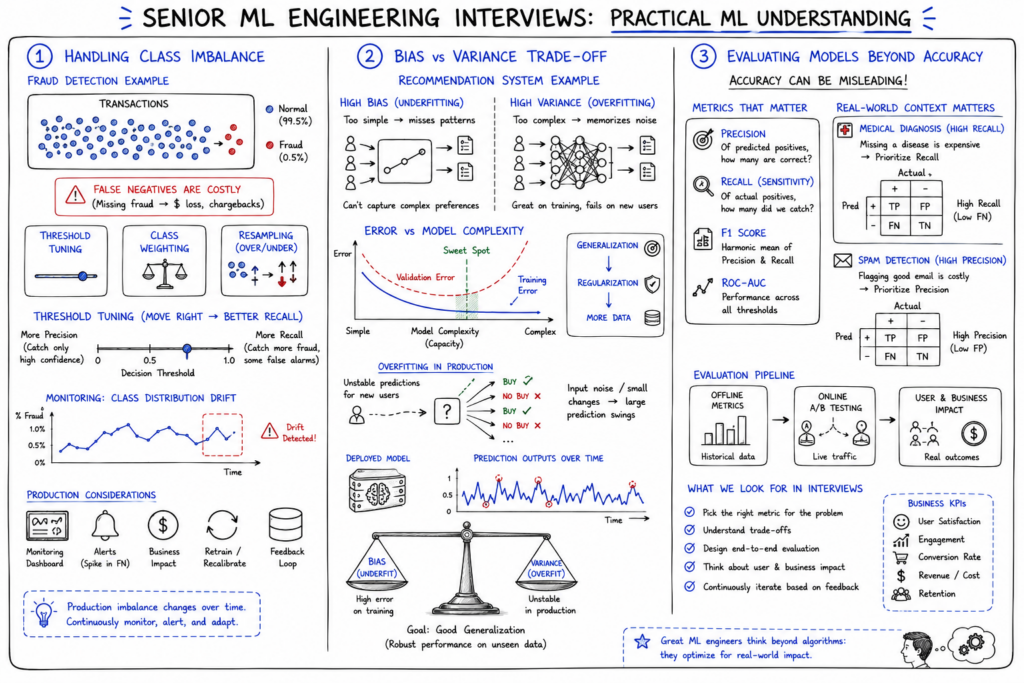

Question: How would you handle class imbalance in a production system?

A strong answer goes beyond listing techniques like oversampling or class weighting. You should start by understanding the business impact of imbalance. For example, in fraud detection, false negatives are often more costly than false positives. From there, you can discuss strategies such as resampling, cost-sensitive learning, and threshold tuning. In production, you should also mention monitoring class distribution over time, since imbalance can shift and degrade model performance.

Interviewers are evaluating whether you connect modeling decisions to real-world consequences. They want to see that you understand how imbalance affects metrics, user experience, and system behavior. Simply listing techniques without context signals a lack of practical experience.
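The threshold tuning and cost-sensitive framing described in the answer above can be sketched in a few lines. This is a minimal illustration, not a production recipe: the function names `expected_cost` and `pick_threshold`, the toy scores, and the cost values are all assumptions made up for the example.

```python
def expected_cost(y_true, scores, threshold, fn_cost, fp_cost):
    """Total error cost when every score >= threshold is flagged as positive."""
    cost = 0.0
    for label, score in zip(y_true, scores):
        predicted = 1 if score >= threshold else 0
        if label == 1 and predicted == 0:
            cost += fn_cost  # missed positive (e.g. undetected fraud)
        elif label == 0 and predicted == 1:
            cost += fp_cost  # false alarm
    return cost


def pick_threshold(y_true, scores, fn_cost, fp_cost):
    """Return the candidate threshold with the lowest total cost."""
    # Every observed score is a candidate; infinity means "flag nothing".
    candidates = sorted(set(scores)) + [float("inf")]
    return min(
        candidates,
        key=lambda t: expected_cost(y_true, scores, t, fn_cost, fp_cost),
    )


# Hypothetical toy data: 2 positives among 10 examples.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
scores = [0.05, 0.10, 0.15, 0.20, 0.30, 0.35, 0.45, 0.60, 0.40, 0.90]

# Assumed costs: a missed fraud case is 50x worse than a false alarm.
costly_misses = pick_threshold(y_true, scores, fn_cost=50.0, fp_cost=1.0)
# Symmetric costs, for comparison.
symmetric = pick_threshold(y_true, scores, fn_cost=1.0, fp_cost=1.0)
```

With these toy numbers, the asymmetric costs push the chosen threshold lower than the symmetric case does, which is the point to make in an interview: the threshold is a business decision, not a property of the model.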

Question: Explain the bias-variance trade-off in a real-world scenario.

At a senior level, you should frame this in terms of system behavior rather than definitions. For example, a recommendation system with high variance may perform well on training data but fail to generalize to new users. A high-bias model, by contrast, underfits and misses patterns even in the training data. You might discuss how regularization, model complexity, and data size influence this balance. You can also connect this to deployment by explaining how overfitting leads to unstable performance in production.

Interviewers are looking for your ability to translate theory into practical implications. They expect you to explain how this trade-off affects decisions like model selection, feature engineering, and retraining frequency.

Question: How do you evaluate model performance beyond accuracy?

A strong answer includes metrics such as precision, recall, F1 score, ROC-AUC, and business-specific metrics. More importantly, you should explain when each metric matters. For example, recall is critical in medical diagnosis, while precision may matter more in spam detection. You should also discuss offline versus online evaluation, A/B testing, and user impact.

This question tests whether you understand that model evaluation is context-dependent. Interviewers want to see that you can align metrics with business goals and system behavior.
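To make the point about accuracy concrete, here is a small sketch that computes accuracy, precision, recall, and F1 from scratch on an imbalanced toy dataset. The data and the two "models" are invented for illustration; in practice you would likely use a library implementation.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels encoded as 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / (tp + fp + fn + tn)


def precision_recall_f1(y_true, y_pred):
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical dataset: 5 positives out of 100 examples.
y_true = [1] * 5 + [0] * 95

# Model A predicts "negative" for everything.
always_negative = [0] * 100
# Model B catches 4 of the 5 positives but raises 6 false alarms.
useful_model = [1, 1, 1, 1, 0] + [1] * 6 + [0] * 89

acc_a = accuracy(y_true, always_negative)
acc_b = accuracy(y_true, useful_model)
prf_a = precision_recall_f1(y_true, always_negative)
prf_b = precision_recall_f1(y_true, useful_model)
```

Model A has the higher accuracy but a recall of zero, while Model B trades a little accuracy for catching most positives. Which one is better depends on the cost of a miss versus a false alarm.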

ML system design questions

Now we move into system-level thinking, which is central to senior ML engineer interview questions.

Question: Design a recommendation system for an e-commerce platform.

Start by clarifying requirements such as personalization, latency, and scale. Then outline components like data collection, feature engineering, model training, and serving infrastructure. You might discuss collaborative filtering, content-based methods, or hybrid approaches. Trade-offs include real-time versus batch recommendations and model complexity versus latency.

Interviewers evaluate your ability to structure the system and reason about trade-offs. They expect you to think about data pipelines, scalability, and user experience, not just algorithms.
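As one possible starting point for the modeling component, the sketch below shows item-based collaborative filtering with cosine similarity. It is deliberately tiny and in-memory; the `ratings` data, user names, and the `recommend` function are hypothetical, and a real system would add candidate generation, ranking, and a serving layer around it.

```python
import math


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(a[key] * b[key] for key in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend(ratings, user, k=2):
    """Rank unseen items by similarity to the items the user already rated."""
    # Invert {user: {item: rating}} into {item: {user: rating}}.
    item_vectors = {}
    for u, items in ratings.items():
        for item, rating in items.items():
            item_vectors.setdefault(item, {})[u] = rating

    seen = ratings[user]
    scores = {}
    for candidate, vector in item_vectors.items():
        if candidate in seen:
            continue
        # Weight each similarity by how much the user liked the seen item.
        scores[candidate] = sum(
            cosine(vector, item_vectors[item]) * rating
            for item, rating in seen.items()
        )
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Hypothetical interaction data.
ratings = {
    "ana": {"laptop": 5, "mouse": 4},
    "ben": {"laptop": 5, "mouse": 5, "keyboard": 4},
    "cy": {"novel": 5, "bookmark": 4},
    "dee": {"laptop": 4},
}

top_items = recommend(ratings, "dee", k=2)
```

Item similarities change slowly, so they can be precomputed in a batch job and looked up at request time, which is one way to frame the real-time versus batch trade-off mentioned above.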

Question: Design a fraud detection system.

This requires balancing accuracy and latency. You might propose a two-stage system with a fast rule-based filter followed by a more complex ML model. You should discuss feature engineering, real-time inference, and handling imbalanced data. Monitoring and retraining are also critical because fraud patterns evolve.

This question tests your ability to design systems under constraints. Interviewers look for awareness of real-world challenges such as concept drift and adversarial behavior.
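The two-stage idea can be sketched as follows. Everything here is an assumption for illustration: the `Transaction` fields, the rule thresholds, the blocked-country set, and especially `model_score`, which is a hand-written stand-in for a call to a trained model.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    amount: float
    country: str
    account_age_days: int


# Hypothetical blocklist; "XX" is a placeholder, not a real country code.
BLOCKED_COUNTRIES = {"XX"}


def rule_filter(tx):
    """Stage 1: cheap deterministic rules. Returns 'block', 'allow', or 'review'."""
    if tx.country in BLOCKED_COUNTRIES:
        return "block"
    if tx.amount < 20 and tx.account_age_days > 365:
        return "allow"  # small purchase from a long-standing account
    return "review"  # undecided: hand over to the model


def model_score(tx):
    """Stage 2 stand-in: a real system would call a trained model here."""
    score = 0.0
    if tx.amount > 1000:
        score += 0.5
    if tx.account_age_days < 30:
        score += 0.4
    return min(score, 1.0)


def decide(tx, threshold=0.7):
    """Run the fast rules first and score only the undecided traffic."""
    verdict = rule_filter(tx)
    if verdict != "review":
        return verdict
    return "block" if model_score(tx) >= threshold else "allow"


small_trusted = decide(Transaction(5.0, "US", 800))
large_new_account = decide(Transaction(5000.0, "US", 3))
```

The design choice worth explaining is that the rule stage keeps latency low for the bulk of traffic, so the slower model runs only on transactions the rules cannot settle.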

Question: Design a real-time prediction pipeline.

You should describe data ingestion, feature processing, model serving, and monitoring. Technologies might include streaming systems, feature stores, and low-latency inference services. Trade-offs include consistency versus latency and batch versus streaming processing.

The focus here is on architecture and integration. Interviewers want to see that you understand how ML systems operate end-to-end.

Data engineering and pipeline questions

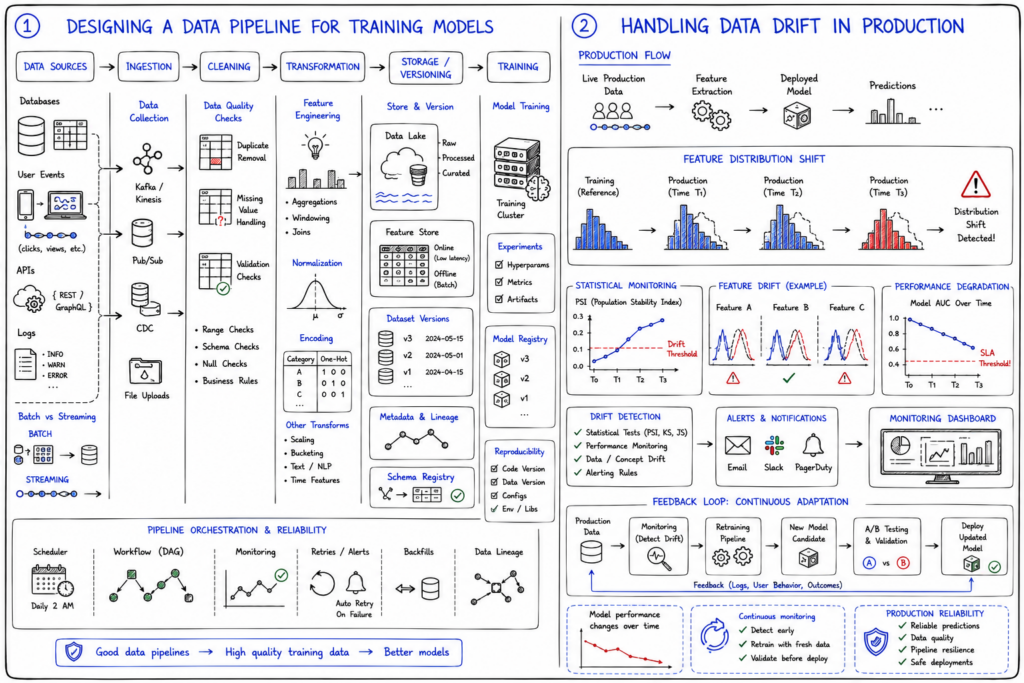

Question: How would you design a data pipeline for training models?

A strong answer includes data ingestion, cleaning, transformation, storage, and versioning. You should discuss batch versus streaming pipelines and tools for orchestration. Data quality checks and reproducibility are also important considerations.

Interviewers evaluate your understanding of data flow and reliability. They want to see that you can build pipelines that support consistent and scalable model training.
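Two of the considerations above, data quality checks and reproducibility, can be illustrated with a short sketch. The row schema and the helper names `validate_rows` and `dataset_fingerprint` are assumptions for this example; real pipelines usually rely on dedicated validation and data versioning tools.

```python
import hashlib
import json


def validate_rows(rows, required_fields):
    """Split rows into valid and rejected based on missing required fields."""
    valid, rejected = [], []
    for row in rows:
        missing = [f for f in required_fields if row.get(f) is None]
        if missing:
            rejected.append(row)
        else:
            valid.append(row)
    return valid, rejected


def dataset_fingerprint(rows):
    """Order-independent hash of the data, usable as a dataset version tag."""
    # Serialize each row canonically, then sort so row order does not matter.
    serialized = sorted(json.dumps(row, sort_keys=True) for row in rows)
    payload = "\n".join(serialized).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


# Hypothetical raw input with one incomplete record.
raw_rows = [
    {"user_id": 1, "amount": 20.0, "label": 0},
    {"user_id": 2, "amount": None, "label": 1},
    {"user_id": 3, "amount": 75.5, "label": 1},
]

clean_rows, rejected_rows = validate_rows(raw_rows, ["user_id", "amount", "label"])
version = dataset_fingerprint(clean_rows)
```

Storing the fingerprint next to each trained model makes it possible to tell later exactly which cleaned dataset produced it.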

Question: How do you handle data drift in production?

You should explain monitoring techniques such as statistical tests (for example, the Kolmogorov-Smirnov test) and feature distribution tracking with measures such as the population stability index. When drift is detected, you might retrain the model or adjust features. You should also discuss alerting systems and feedback loops.

This question tests your ability to maintain model performance over time. Interviewers look for awareness of real-world challenges beyond initial deployment.
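One way to track a feature's distribution over time is the population stability index (PSI), sketched below in plain Python. The binning scheme, the `1e-6` floor for empty bins, and the toy data are assumptions of this example; alert thresholds for PSI are conventions that vary by team rather than fixed rules.

```python
import math


def psi(expected, actual, bins=10):
    """Population stability index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp values outside the range
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time reference vs. shifted live data.
reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

no_drift = psi(reference, reference)
drift = psi(reference, shifted)
```

A check like this would typically run on a schedule per feature, with the result feeding the alerting and retraining decisions described above.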

Model deployment and production questions

Question: How do you deploy ML models at scale?

You should discuss containerization, model serving frameworks, and scaling strategies. Options include batch inference, real-time APIs, and edge deployment. You should also consider rollback strategies and version control.

Interviewers evaluate your understanding of infrastructure and scalability. They want to see that you can deploy models reliably in production environments.
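Rollback and versioning are easier to discuss with a concrete picture. The sketch below shows a canary-style router that sends a fixed share of users to a new model version and can roll back in one step. The `ModelRouter` class and version names are hypothetical, and in practice this logic usually lives in a serving platform or load balancer rather than application code.

```python
import hashlib


def bucket(user_id, buckets=100):
    """Map a user ID to a stable bucket in [0, buckets)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets


class ModelRouter:
    """Routes each user to the stable or the canary model version."""

    def __init__(self, stable_version, canary_version=None, canary_percent=0):
        self.stable_version = stable_version
        self.canary_version = canary_version
        self.canary_percent = canary_percent

    def route(self, user_id):
        # Hashing keeps a given user on the same version across requests.
        if self.canary_version is not None and bucket(user_id) < self.canary_percent:
            return self.canary_version
        return self.stable_version

    def rollback(self):
        """Send all traffic back to the stable version."""
        self.canary_version = None
        self.canary_percent = 0

    def promote(self):
        """Make the canary the new stable version."""
        if self.canary_version is not None:
            self.stable_version = self.canary_version
        self.rollback()


router = ModelRouter("v1", canary_version="v2", canary_percent=10)
first = router.route("user-42")
second = router.route("user-42")  # same user, same answer

router.rollback()
after_rollback = {router.route(f"user-{i}") for i in range(200)}
```

Keeping the routing decision deterministic per user makes canary metrics easier to interpret, because each user sees a consistent model version during the rollout.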

Question: How do you monitor model performance in production?

A strong answer includes tracking prediction accuracy, latency, and system health. You should discuss logging, dashboards, and alerting. Monitoring user impact and business metrics is also critical.

This question tests your ability to maintain systems after deployment. Interviewers expect you to think about long-term reliability and performance.
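A rolling-window monitor is one simple way to turn the tracking described above into alerts. The `RollingMonitor` class, its default thresholds, and the simulated traffic are assumptions for this sketch. Note that ground-truth labels often arrive late in production, which is why `correct` is optional here.

```python
from collections import deque


class RollingMonitor:
    """Tracks p95 latency and accuracy over the most recent predictions."""

    def __init__(self, window=100, max_p95_latency_ms=200.0, min_accuracy=0.9):
        self.latencies = deque(maxlen=window)
        self.outcomes = deque(maxlen=window)
        self.max_p95_latency_ms = max_p95_latency_ms
        self.min_accuracy = min_accuracy

    def record(self, latency_ms, correct=None):
        """Log one prediction; pass `correct` only once the label is known."""
        self.latencies.append(latency_ms)
        if correct is not None:
            self.outcomes.append(1 if correct else 0)

    def p95_latency(self):
        if not self.latencies:
            return None
        ordered = sorted(self.latencies)
        rank = (95 * len(ordered) + 99) // 100  # nearest-rank 95th percentile
        return ordered[rank - 1]

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def alerts(self):
        """Return the names of the checks that currently fail."""
        problems = []
        p95 = self.p95_latency()
        if p95 is not None and p95 > self.max_p95_latency_ms:
            problems.append("latency")
        acc = self.accuracy()
        if acc is not None and acc < self.min_accuracy:
            problems.append("accuracy")
        return problems


monitor = RollingMonitor(window=100)
for _ in range(100):
    monitor.record(50.0, correct=True)  # simulated healthy traffic
healthy = monitor.alerts()

for _ in range(100):
    monitor.record(500.0, correct=False)  # simulated degradation
degraded = monitor.alerts()
```

In a real deployment the output of `alerts()` would feed a dashboard or paging system, alongside the business metrics mentioned above.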

Comparison of interview levels

| Level | Focus areas | Depth of questions | System design involvement | Decision-making expectations |
|---|---|---|---|---|
| Junior | Algorithms, basics | Low | Minimal | Limited |
| Mid-level | Applied ML, some systems | Moderate | Partial | Some trade-offs |
| Senior | Systems, production, trade-offs | High | Extensive | Strong ownership |

Senior candidates stand out because they connect all aspects of ML systems. They do not treat modeling, data, and infrastructure as separate topics. Instead, they approach problems holistically, which is what interviewers are looking for.

Behavioral and decision-making questions

Question: Describe a time you improved a model in production.

You should explain the problem, your approach, and the impact. Focus on measurable improvements and trade-offs. Discuss challenges and how you addressed them.

Interviewers evaluate ownership and impact. They want to see that you can drive meaningful improvements in real systems.

Question: How do you decide between two competing models?

You should compare metrics, complexity, and operational cost. Consider factors like interpretability, latency, and maintainability. Explain how you align decisions with business goals.

This question tests decision-making and communication. Interviewers look for structured reasoning and clarity.

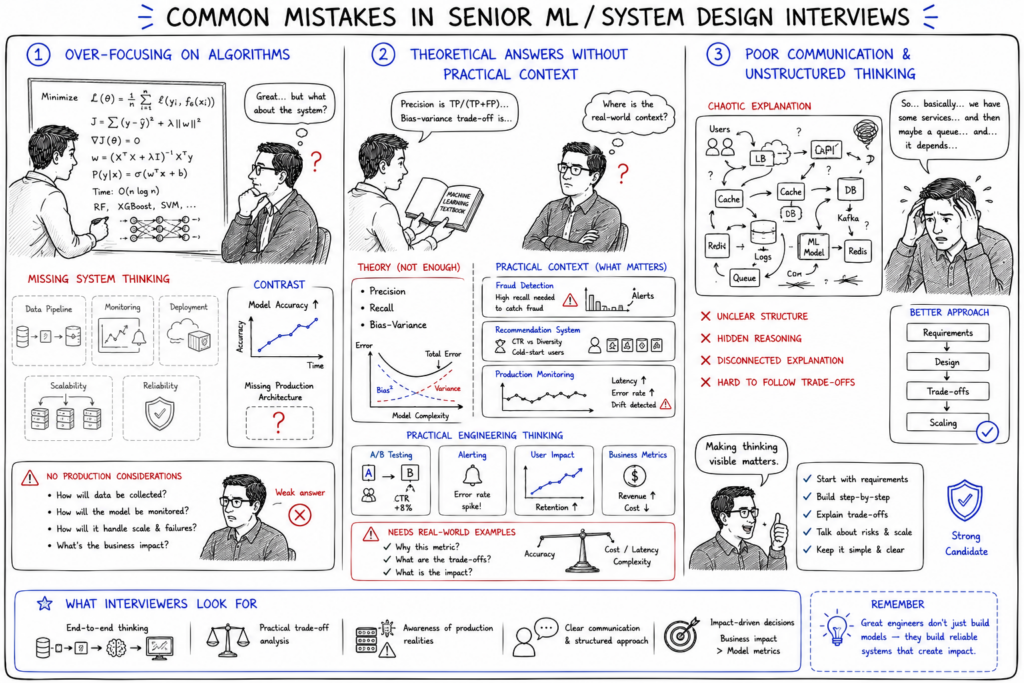

Common mistakes candidates make

One common mistake is focusing only on algorithms. While technical knowledge is important, senior roles require system-level thinking. Ignoring this aspect can make your answers feel incomplete.

Another mistake is giving theoretical answers without practical context. Interviewers expect real-world examples and trade-offs. Purely academic responses often fail to demonstrate experience.

Finally, many candidates struggle with communication. Even strong technical answers can fall short if they are not structured clearly. Making your thinking visible is critical.

How to prepare effectively for senior ML interviews

- Strengthen system design knowledge

- Practice real-world ML problems

- Focus on explaining decisions

Strengthening system design knowledge helps you understand how ML systems operate at scale. This includes learning about distributed systems, data pipelines, and deployment strategies.

Practicing real-world problems allows you to apply concepts in context. Focus on designing systems and explaining trade-offs rather than solving isolated tasks.

Focusing on explaining decisions improves communication. Practice articulating your reasoning clearly and logically.

How to think during the interview

During the interview, structure your answers carefully. Start by clarifying requirements and outlining your approach. Then dive into details while explaining your reasoning.

Communication is key. Explain trade-offs and justify decisions clearly. If the interviewer asks follow-up questions, treat them as opportunities to refine your answer.

Stay flexible and open to feedback. Strong candidates adapt their thinking based on new information. This demonstrates real-world engineering skills.

Final words

Preparing for senior ML engineer interview questions requires more than technical knowledge. It requires understanding systems, making trade-offs, and communicating effectively.

If you focus on real-world problem-solving and structured thinking, you will be well-prepared for senior-level interviews. The goal is not just to answer questions, but to demonstrate how you think as an engineer.

Happy learning!