Embedded system design explained: from fundamentals to real-world systems

If you look around you right now, you are probably surrounded by embedded systems without even noticing them. Your smartwatch tracks your heart rate and displays notifications, your car constantly monitors engine conditions and braking behavior, and your washing machine adjusts water cycles based on internal sensors. These devices do not behave like laptops or smartphones with broad, flexible computing purposes. Instead, they are built to perform specific functions reliably, efficiently, and often under strict physical and timing constraints.

That is what makes embedded system design such an important engineering discipline. It sits at the intersection of hardware, software, and system-level decision-making. You are not just writing code or assembling circuits in isolation. You are building a complete system where processor choice, memory limits, power consumption, timing behavior, and software architecture all influence one another. The result is a device that has to work predictably in the real world, not just in a development environment.

What makes embedded systems different from general computing

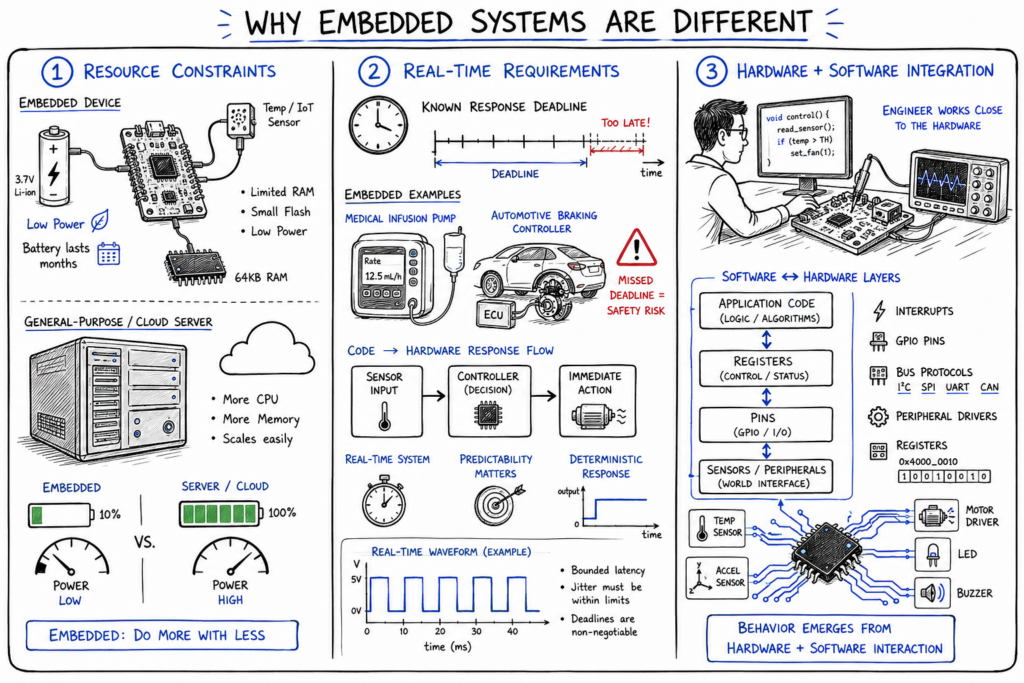

The first major difference is that embedded systems live under tighter constraints than general-purpose computers. A desktop or cloud server can usually absorb inefficiencies by adding more memory, more processing power, or more storage. An embedded device often cannot. A low-cost sensor node, for example, may run on a tiny microcontroller with limited RAM, a small flash footprint, and a battery that is expected to last months or even years. In that environment, every design choice matters because there is far less room for waste.

Another difference is that many embedded systems have real-time requirements. This does not always mean they are “fast” in the everyday sense. It means they must respond within a known time limit. In a medical infusion pump, missing a timing deadline can create a safety issue. In an automotive braking controller, delayed response is not just inconvenient; it can be dangerous. This requirement changes the way software is written because timing becomes part of correctness: a right answer that arrives too late still counts as a failure.

The third difference is the tight integration between hardware and software. In general computing, the operating system often abstracts hardware details away from the programmer. In embedded systems, that abstraction is usually thinner or sometimes absent altogether. Software engineers frequently need to understand pins, registers, interrupts, bus protocols, and peripheral behavior. This is why embedded work requires a more system-oriented mindset. You are not just building software that runs on hardware; you are designing behavior that emerges from their interaction.
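To make "understanding registers and pins" a little more concrete, here is a minimal sketch of the bit manipulation that sits underneath most pin control. It assumes a hypothetical 32-bit GPIO output register and an LED on pin 5. On real hardware the register would live at a fixed address taken from the datasheet; here it is an ordinary variable so the sketch can run on a host machine.

```c
#include <stdint.h>
#include <stdbool.h>

#define LED_PIN 5u  /* assumed pin number, for illustration only */

/* Stand-in for a memory-mapped GPIO output register (hypothetical). */
volatile uint32_t fake_gpio_out = 0;

/* Drive one pin high without disturbing the other bits. */
void pin_set(volatile uint32_t *reg, unsigned pin)
{
    *reg |= (UINT32_C(1) << pin);
}

/* Drive one pin low without disturbing the other bits. */
void pin_clear(volatile uint32_t *reg, unsigned pin)
{
    *reg &= ~(UINT32_C(1) << pin);
}

/* Report whether a pin's bit is currently set. */
bool pin_is_set(const volatile uint32_t *reg, unsigned pin)
{
    return ((*reg >> pin) & 1u) != 0u;
}
```

The `volatile` qualifier matters here: it tells the compiler that every read and write must really happen, which is what you want when the "variable" is actually a piece of hardware.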

Understanding embedded system design as a discipline

When people first learn about embedded systems, they often begin with the question, “What is an embedded system?” That is a useful starting point, but it only gets you so far. A more meaningful question is, “How do engineers design these systems so they actually work under real-world conditions?” That is where the discipline becomes interesting. You move from naming components to understanding trade-offs, timing, reliability, and system behavior.

At its core, embedded engineering is about making coordinated decisions across multiple layers. You have to choose hardware that fits the workload, write software that respects resource limits, and ensure that the final system behaves correctly under noise, power fluctuations, and unpredictable user interaction. A thermostat is not just a sensor plus a screen plus a microcontroller. It is a system that reads temperature data, filters noise, reacts to user settings, drives output hardware, and does so continuously with low power and high reliability.

This is why system-level thinking matters so much. You cannot make good embedded decisions by looking at isolated parts. A processor might look powerful on paper but consume too much energy. A communication protocol might be easy to implement but introduce too much latency. A flexible software architecture might be elegant but too heavy for the device. Good embedded engineers constantly think about the interaction between constraints and goals, because that interaction defines the final product.

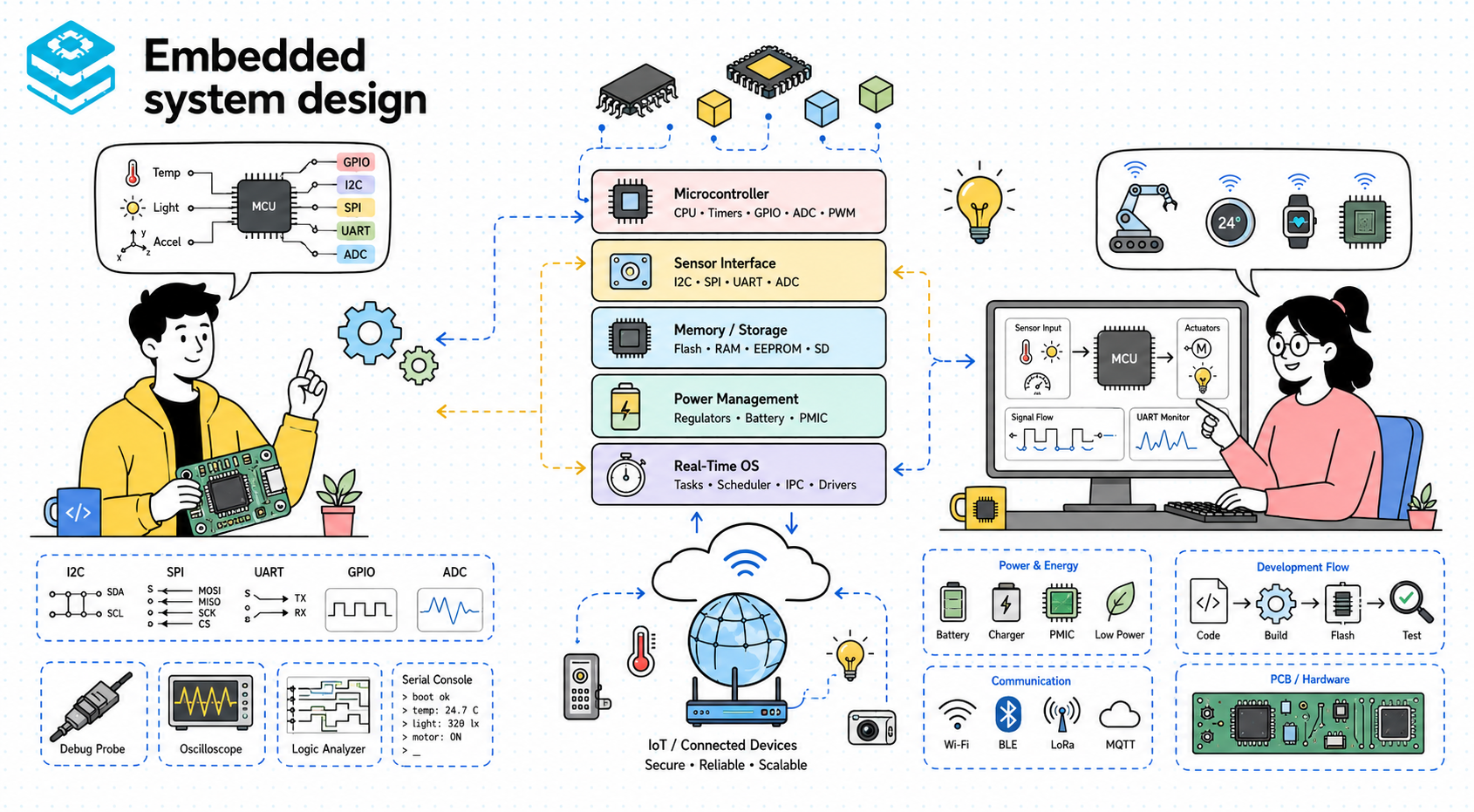

Core components of an embedded system

Most embedded systems are built around a processing unit, typically a microcontroller or a microprocessor. The distinction matters because it affects the design space significantly. A microcontroller usually combines CPU, memory, and peripherals on a single chip, making it ideal for compact, low-power systems such as wearables, home appliances, and small IoT devices. A microprocessor, on the other hand, is often part of a larger system with external memory and more complex software stacks, which makes it better suited for richer platforms like infotainment systems or advanced industrial controllers.

Memory is another core part of the design, but in embedded systems it is never just “there in the background.” Flash memory often stores firmware, while RAM supports runtime data structures, buffers, and stack usage. The amount of each directly shapes what software architecture is possible. If your RAM budget is tiny, you cannot casually allocate large buffers or use memory-heavy frameworks. Engineers often have to think carefully about stack depth, buffer sizes, and persistent storage strategies because memory limits are not theoretical—they are immediate.
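One common way to keep RAM usage fixed and visible is a statically sized ring buffer. The sketch below is one possible version, assuming a capacity of 8 bytes and hypothetical `rb_` names. Nothing is allocated at runtime, so the memory cost is known when the firmware is built.

```c
#include <stdint.h>
#include <stdbool.h>
#include <stddef.h>

#define RB_CAPACITY 8u  /* assumed size; must be a power of two here */

typedef struct {
    uint8_t data[RB_CAPACITY];  /* storage lives inside the struct: no heap */
    size_t  head;               /* total bytes written so far */
    size_t  tail;               /* total bytes read so far */
} ring_buffer_t;

/* Number of bytes currently stored. */
size_t rb_count(const ring_buffer_t *rb)
{
    return rb->head - rb->tail;
}

/* Append one byte; returns false (and drops the byte) when full. */
bool rb_push(ring_buffer_t *rb, uint8_t byte)
{
    if (rb_count(rb) == RB_CAPACITY) {
        return false;
    }
    rb->data[rb->head % RB_CAPACITY] = byte;
    rb->head++;
    return true;
}

/* Remove the oldest byte; returns false when the buffer is empty. */
bool rb_pop(ring_buffer_t *rb, uint8_t *out)
{
    if (rb_count(rb) == 0u) {
        return false;
    }
    *out = rb->data[rb->tail % RB_CAPACITY];
    rb->tail++;
    return true;
}
```

The design choice worth noticing is that a full buffer is an explicit, testable condition. The caller has to decide what to do when `rb_push` returns false, instead of the system quietly running out of memory later.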

Sensors and actuators complete the picture by connecting the embedded system to the physical world. Sensors bring in information such as temperature, motion, pressure, or light. Actuators create outputs such as motor control, relay switching, screen updates, or sound generation. The important point is not just that these components exist, but that they are part of a flow: the processor reads input, software interprets it, and the system responds through output devices. In a smart irrigation controller, for example, soil moisture readings influence a decision algorithm, which then drives a valve actuator. That entire loop is what the system is really designed around.
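The irrigation loop described above could be sketched roughly like this. The thresholds, the filter weights, and the names are all assumptions made for illustration; a real controller would tune them for its sensor and soil.

```c
#include <stdint.h>
#include <stdbool.h>

/* Assumed thresholds, in percent soil moisture. */
#define MOISTURE_OPEN_BELOW   30u  /* open the valve when drier than this */
#define MOISTURE_CLOSE_ABOVE  45u  /* close it once wetter than this */

typedef struct {
    uint16_t filtered;    /* smoothed moisture reading, in percent */
    bool     valve_open;  /* current actuator command */
} irrigation_state_t;

/* One pass of the loop: take a raw reading, smooth it, decide. */
bool irrigation_step(irrigation_state_t *s, uint16_t raw_percent)
{
    /* Sense and interpret: low-pass filter, new = (3 * old + raw) / 4. */
    s->filtered = (uint16_t)((3u * s->filtered + raw_percent) / 4u);

    /* Decide with hysteresis so the valve does not chatter near a threshold. */
    if (s->filtered < MOISTURE_OPEN_BELOW) {
        s->valve_open = true;
    } else if (s->filtered > MOISTURE_CLOSE_ABOVE) {
        s->valve_open = false;
    }

    /* Actuate: the caller would drive the valve output with this value. */
    return s->valve_open;
}
```

The gap between the two thresholds is deliberate. With a single threshold, a noisy reading hovering around it would switch the valve on and off repeatedly.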

Software design in embedded systems

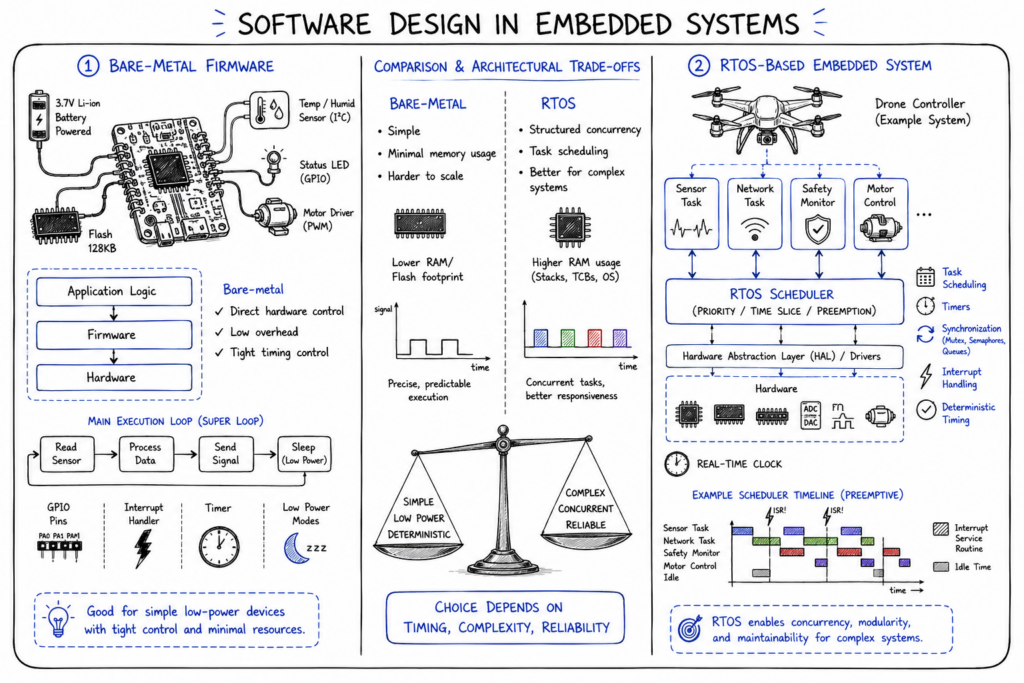

Embedded software usually begins with firmware, which is the low-level software responsible for controlling the device. Firmware initializes hardware, configures peripherals, handles interrupts, and implements the application’s core behavior. In small systems, that firmware may run directly on the hardware without any operating system at all. This is often called bare-metal programming. It gives the engineer tight control over timing and hardware interaction, which is useful when resources are extremely limited or timing constraints are strict.
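A typical bare-metal structure is a main loop that reacts to flags set by interrupt handlers. The sketch below shows that pattern under a few assumptions: `adc_isr` is a hypothetical handler name, the interrupt is simulated by calling it as a normal function, and the "processing" is just a counter.

```c
#include <stdint.h>
#include <stdbool.h>

/* Shared between the interrupt handler and the main loop.
 * volatile stops the compiler from caching these in registers. */
static volatile bool     sample_ready  = false;
static volatile uint16_t latest_sample = 0;

static uint32_t samples_processed    = 0;
static uint16_t last_processed_value = 0;

/* Hypothetical ADC interrupt handler: keep it short, record and flag. */
void adc_isr(uint16_t value)
{
    latest_sample = value;
    sample_ready  = true;
}

/* One iteration of the main loop; firmware would call this in while (1). */
void main_loop_iteration(void)
{
    if (sample_ready) {
        sample_ready = false;                  /* acknowledge first */
        last_processed_value = latest_sample;  /* stand-in for real work */
        samples_processed++;
    }
    /* Nothing pending: a real device might enter a sleep mode here. */
}
```

Keeping the handler tiny and doing the real work in the loop is a common way to keep interrupt latency low and timing easy to reason about.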

As systems grow more complex, an RTOS often becomes valuable. A real-time operating system provides task scheduling, synchronization primitives, timers, and structured ways to manage concurrency while still preserving timing guarantees. This is useful in devices where multiple activities need to make progress concurrently, such as reading sensors, servicing communication links, and controlling outputs. In an industrial controller, for instance, one task might monitor safety inputs while another handles network communication and another updates control outputs. An RTOS helps organize that behavior more reliably than a large bare-metal loop would.
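To get a feel for the idea of several activities running at different rates, here is a deliberately simplified sketch. It is not an RTOS and uses no RTOS API: it is a cooperative, tick-driven scheduler with no preemption, and the task names and periods are assumptions chosen to mirror the industrial controller example.

```c
#include <stdint.h>
#include <stddef.h>

typedef void (*task_fn_t)(void);

typedef struct {
    task_fn_t run;        /* function to call */
    uint32_t  period_ms;  /* how often to call it */
    uint32_t  next_due;   /* tick time of the next release */
} task_t;

/* Hypothetical task bodies that only count their invocations. */
static unsigned safety_runs, control_runs, network_runs;
static void safety_task(void)  { safety_runs++; }
static void control_task(void) { control_runs++; }
static void network_task(void) { network_runs++; }

/* Listed in priority order: earlier entries run first within a tick. */
static task_t tasks[] = {
    { safety_task,   1u, 0u },
    { control_task,  5u, 0u },
    { network_task, 20u, 0u },
};

/* Call once per 1 ms tick; runs every task whose release time has arrived. */
void scheduler_tick(uint32_t now_ms)
{
    for (size_t i = 0; i < sizeof tasks / sizeof tasks[0]; i++) {
        /* Signed difference stays correct when the tick counter wraps. */
        if ((int32_t)(now_ms - tasks[i].next_due) >= 0) {
            tasks[i].run();
            tasks[i].next_due += tasks[i].period_ms;
        }
    }
}
```

What a real RTOS adds on top of this is preemption and synchronization: a long-running network task here would delay the safety task, whereas a preemptive scheduler can interrupt it.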

The right choice between bare-metal and RTOS depends on system needs, not ideology. A simple battery-powered sensor that wakes up, measures data, transmits, and sleeps may not need the overhead of an RTOS. A drone flight controller or automotive subsystem almost certainly benefits from a more structured execution model. The key is understanding that software architecture in embedded systems is shaped by timing, complexity, and reliability requirements. It is not just about programmer convenience.

Hardware-software interaction

One of the defining parts of embedded engineering is how software directly controls hardware. This usually happens through communication protocols and peripheral interfaces such as I2C, SPI, and UART. These protocols allow the processor to talk to sensors, displays, memory chips, and communication modules. To someone coming from pure software, this can feel very different because you are not just calling abstract APIs. You are dealing with timing, signal ordering, bus behavior, and sometimes electrical realities.

Take I2C as an example. It is commonly used for connecting multiple low-speed peripherals like sensors and real-time clocks. SPI is often used when higher speed is needed, such as for displays or external flash memory. UART remains a common choice for serial communication, debugging, and talking to external modules such as GPS receivers or Bluetooth controllers. The point is not to memorize protocol names. It is to understand that software is constantly orchestrating physical interactions, and each protocol comes with constraints around speed, complexity, and bus topology.
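Two small calculations show how protocol details reach into software. The helper names below are hypothetical, but the rules they encode are standard: an I2C transfer with 7-bit addressing starts with the address in the upper seven bits and a read/write flag in bit 0, and a UART configured as 8N1 sends 10 bits on the wire for every data byte.

```c
#include <stdint.h>

/* First byte of an I2C transfer with 7-bit addressing:
 * address in bits 7..1, R/W flag in bit 0 (0 = write, 1 = read). */
uint8_t i2c_address_byte(uint8_t addr7, int is_read)
{
    return (uint8_t)(((addr7 & 0x7Fu) << 1) | (is_read ? 1u : 0u));
}

/* UART 8N1 framing: start bit + 8 data bits + stop bit = 10 bits per byte.
 * Returns the time to send num_bytes, in microseconds (rounded down). */
uint32_t uart_8n1_time_us(uint32_t num_bytes, uint32_t baud)
{
    return (uint32_t)(((uint64_t)num_bytes * 10u * 1000000u) / baud);
}
```

With an example device address of 0x68, the write and read address bytes come out as 0xD0 and 0xD1. Sending 100 bytes at 115200 baud takes roughly 8.7 ms, which is the kind of number that matters when you are budgeting time inside a control loop.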

This interaction is where many embedded bugs become difficult. A device might fail not because the algorithm is wrong, but because the initialization sequence for a sensor was slightly off, or because timing between transactions is inconsistent, or because an interrupt is interfering with a communication sequence. Engineers need to think beyond “does the code compile?” and ask whether the code is driving hardware correctly under real conditions. That is one of the biggest shifts in embedded work: correctness depends on the behavior of the whole system, not just the logic inside the source file.

Comparing embedded and general-purpose systems

| System type | Resource constraints | Performance requirements | System complexity | Real-time behavior |
|---|---|---|---|---|
| Embedded systems | Typically strict and fixed | Focused on efficiency and predictability | Often tightly integrated with hardware | Frequently important or essential |
| General-purpose computing systems | Usually more flexible and expandable | Focused on throughput, flexibility, and multitasking | Broader software ecosystems and abstractions | Often less deterministic |

This comparison matters because it explains why embedded engineering demands a different mindset. In general-purpose systems, you often optimize for user flexibility, broad compatibility, and rich functionality. In embedded systems, you optimize for purpose. The system is usually built to do a specific job, and it has to do that job within strict limits of cost, power, memory, and timing. That means engineering decisions are often more constrained but also more tightly connected to physical behavior.

It also explains why design choices that feel normal in desktop or cloud software may not translate well into embedded work. Dynamic allocation, large abstractions, background services, and broad software dependencies may be acceptable in general computing, but they can become liabilities in a resource-constrained controller. Embedded development requires engineers to think more explicitly about what every component costs and how every delay affects the system. That is not because embedded systems are less sophisticated. It is because they are sophisticated in a more tightly bounded environment.

Designing for constraints and reliability

A major part of embedded system design is learning to treat constraints as first-class design inputs rather than as annoying limitations. Limited memory and processing power are obvious examples, but power consumption is just as important in many devices. A remote environmental sensor running on battery or solar energy cannot behave like a plugged-in desktop system. It may need aggressive sleep modes, careful wake-up logic, and highly efficient communication patterns. In those systems, software decisions directly influence battery life, which means code quality affects hardware viability.
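The link between sleep behavior and battery life can be estimated with simple duty-cycle arithmetic. The sketch below is an idealized model under stated assumptions: it ignores battery self-discharge, temperature effects, and regulator losses, and the function names are made up for this example.

```c
/* Average current of a device that is active for active_s seconds out of
 * every period_s seconds and asleep for the rest. Currents are in mA. */
double average_current_ma(double active_ma, double sleep_ma,
                          double active_s, double period_s)
{
    double duty = active_s / period_s;  /* fraction of time spent awake */
    return active_ma * duty + sleep_ma * (1.0 - duty);
}

/* Idealized battery life in days for a given capacity and average draw. */
double battery_life_days(double capacity_mah, double avg_ma)
{
    return capacity_mah / avg_ma / 24.0;
}
```

As an illustration with assumed numbers: a sensor that draws 20 mA for 1 second every 10 minutes and 0.01 mA while asleep averages roughly 0.043 mA. On a 2000 mAh battery, this simple model gives a little over five years. Keeping the device awake for 10 seconds per cycle instead would cut that estimate to well under a year, which is why wake-up logic deserves as much attention as the algorithm itself.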

Reliability is equally important, especially in safety-critical environments such as automotive, aerospace, and medical devices. A consumer gadget can often tolerate an occasional glitch better than an infusion pump or engine controller can. That means engineers have to think about watchdog timers, fail-safe states, redundancy, validation logic, and fault handling much earlier in the design process. Reliability is not just a testing concern at the end. It has to be designed into the architecture from the beginning.
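The watchdog idea can be sketched in software to show the logic. Real watchdogs are usually hardware peripherals that reset the microcontroller; this simulation, with hypothetical names and an assumed timeout of three ticks, only models the behavior: healthy code must check in regularly, and if it stops, the system latches into a fail-safe state.

```c
#include <stdint.h>
#include <stdbool.h>

#define WDT_TIMEOUT_TICKS 3u  /* assumed timeout, in timer ticks */

typedef struct {
    uint32_t remaining;  /* ticks left before the watchdog fires */
    bool     failsafe;   /* latched once the watchdog has fired */
} watchdog_t;

void wdt_init(watchdog_t *w)
{
    w->remaining = WDT_TIMEOUT_TICKS;
    w->failsafe  = false;
}

/* Healthy code calls this regularly to prove it is still running. */
void wdt_kick(watchdog_t *w)
{
    if (!w->failsafe) {
        w->remaining = WDT_TIMEOUT_TICKS;
    }
}

/* Called from a periodic timer, independent of the main application. */
void wdt_tick(watchdog_t *w)
{
    if (w->failsafe) {
        return;  /* already latched; stays that way until re-initialized */
    }
    if (w->remaining > 0u) {
        w->remaining--;
    }
    if (w->remaining == 0u) {
        w->failsafe = true;  /* e.g. switch outputs off, then reset */
    }
}
```

Note that the fail-safe state is latched. Once the watchdog has fired, a late kick does not bring the system back, because a design that silently recovers from a hang can hide the fault that caused it.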

Real-world examples make this concrete. In an automotive electronic control unit, software may need to respond to sensor input while also detecting communication faults and ensuring safe fallback behavior if something goes wrong. In a medical monitor, sensor noise, timing errors, or display lag are not minor bugs—they can compromise trust and safety. This is why embedded engineering is fundamentally about balancing functionality with robust, predictable behavior under constrained conditions.

Real-time considerations in embedded systems

Real-time behavior is one of the most important ideas in embedded work, and also one of the most misunderstood. Real-time does not simply mean “fast.” It means the system must respond within a known deadline. In some systems, missing that deadline occasionally is acceptable but undesirable. In others, missing it even once is considered failure. This difference is what leads to soft real-time and hard real-time requirements.

A practical example is a digital audio processing system. If sound data is not processed on time, the user hears glitches or dropouts. That is a soft real-time failure because the system still runs, but quality is degraded. Compare that with an airbag control unit or anti-lock braking system, where delayed response could compromise safety. That is a hard real-time situation, and the design must guarantee timing in a much stricter way. These systems often avoid sources of unpredictability because deterministic execution matters more than feature richness.
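For the audio case, the deadline falls straight out of the buffer size and sample rate. This is a small sketch with hypothetical function names; the buffer size and sample rate used in the note below are just example values.

```c
#include <stdint.h>
#include <stdbool.h>

/* Time available to process one buffer before the next one arrives,
 * in microseconds (rounded down). */
uint32_t buffer_deadline_us(uint32_t frames, uint32_t sample_rate_hz)
{
    return (uint32_t)(((uint64_t)frames * 1000000u) / sample_rate_hz);
}

/* True when the given processing time still fits inside the deadline. */
bool fits_deadline(uint32_t processing_us, uint32_t deadline_us)
{
    return processing_us <= deadline_us;
}
```

A 256-frame buffer at 48 kHz leaves about 5.3 ms per buffer. Processing that takes 4 ms fits; processing that occasionally takes 6 ms produces an audible dropout each time it happens.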

Timing constraints influence both hardware and software decisions. Interrupt design, scheduler behavior, protocol latency, and execution time analysis all become part of system architecture. Engineers must think carefully about worst-case execution time, not just average speed. That is a major shift for people coming from general software backgrounds, where average-case performance is often the main focus. In embedded systems, missing a rare deadline can matter more than having a high average throughput.
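The gap between average and worst case is easy to see once you record both. The sketch below, using hypothetical names, accumulates measured execution times and keeps the maximum alongside the mean. One caveat: a measured maximum only shows the worst case observed so far, so it is a lower bound on the true worst-case execution time, not a guarantee.

```c
#include <stdint.h>

typedef struct {
    uint32_t count;     /* number of measurements recorded */
    uint64_t total_us;  /* sum of all measurements */
    uint32_t max_us;    /* largest single measurement seen so far */
} exec_stats_t;

/* Record one measured execution time, in microseconds. */
void exec_stats_record(exec_stats_t *s, uint32_t elapsed_us)
{
    s->count++;
    s->total_us += elapsed_us;
    if (elapsed_us > s->max_us) {
        s->max_us = elapsed_us;
    }
}

/* Mean execution time, or 0 when nothing has been recorded yet. */
uint32_t exec_stats_average_us(const exec_stats_t *s)
{
    return s->count ? (uint32_t)(s->total_us / s->count) : 0u;
}
```

Suppose a task usually takes 100 µs but one run in four takes 900 µs. The average is 300 µs, which looks comfortable against a 500 µs deadline, yet the task misses that deadline a quarter of the time.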

Trade-offs in embedded system design

Every embedded system is built through trade-offs. Cost versus performance is one of the most common ones. A more powerful processor may make software development easier and enable richer functionality, but it can increase unit cost beyond what the market allows. In consumer electronics, even small cost increases matter when multiplied across large production volumes. As a result, engineers often have to find the minimum hardware that can still meet performance and reliability goals.

Power versus processing capability is another classic trade-off. A battery-powered wearable may benefit from a low-power microcontroller, but that choice may limit signal processing sophistication or wireless throughput. On the other hand, a more capable processor may improve responsiveness while draining the battery too quickly. The “best” choice depends entirely on the product’s priorities. This is why embedded decisions are rarely absolute. They are always tied to context.

A simple narrative example is a smart door lock. If you choose a highly capable processor, you may gain advanced encryption and richer logging, but you may also shorten battery life and raise manufacturing cost. If you choose a simpler controller, you preserve power and cost targets, but you may need to simplify features or optimize firmware aggressively. Good design is not about finding a perfect option. It is about choosing the trade-off that matches the product’s real goals.

Common challenges in embedded system design

One of the hardest parts of embedded engineering is debugging problems that sit between hardware and software. A fault may appear as bad data in software, but the real issue could be electrical noise, timing mismatch, protocol misconfiguration, or a race condition in interrupt handling. These problems are often difficult because they do not show up consistently. A system may work in the lab and fail in the field, or fail only under heat, vibration, or low battery conditions. That makes observability and disciplined testing extremely important.

Another challenge is simply working within constraints without losing maintainability. Engineers often optimize aggressively to fit memory or timing limits, but those optimizations can make the code harder to understand and extend. This creates a tension between efficiency and clarity. In production systems, both matter. A firmware image that fits perfectly into flash but is impossible to maintain will become costly later, especially when the product needs updates or feature changes.

Ensuring reliability over time is another major difficulty. Embedded systems often live in the field for years, and many of them operate in environments that are noisy, hot, remote, or otherwise hostile. This means validation has to go beyond basic functional testing. Engineers need to think about environmental stress, boundary conditions, power cycling, communication interruptions, and long-term degradation. Real-world embedded systems succeed not because they are elegant on paper, but because they continue to work after all of those realities show up.

Practical principles for designing embedded systems

- Design for constraints

- Keep systems simple and efficient

- Test thoroughly under real conditions

Designing for constraints means accepting early that memory, timing, power, and cost are not side concerns. They are the conditions under which the whole system must exist. When you treat them seriously from the start, you make better processor choices, cleaner software architecture decisions, and more realistic feature trade-offs. Ignoring constraints early usually means paying for them later through redesigns and failures.

Keeping systems simple and efficient does not mean stripping out every useful abstraction. It means avoiding unnecessary complexity that the device cannot afford. In embedded work, complexity is expensive because it affects timing, memory, debugging difficulty, and long-term maintainability. A simple, well-structured system is usually easier to validate and more reliable in the field than a complicated one that tries to do too much.

Testing under real conditions is critical because embedded systems interact with the physical world, not just simulated inputs. A design that works on a bench may fail with temperature variation, noisy power, unexpected user behavior, or real signal interference. That is why testing has to reflect deployment reality as closely as possible. In embedded engineering, the environment is part of the system, and your validation strategy has to respect that.

Conclusion

At its core, embedded system design is about balancing constraints, functionality, and reliability in systems that interact directly with the physical world. It is not just a combination of hardware parts and firmware modules. It is a disciplined way of thinking about how software, electronics, timing, power, and product goals all come together in one engineered system.

The more you explore embedded work, the more you realize that success comes from understanding interactions rather than memorizing isolated concepts. A good design is one that fits its environment, respects its limits, and behaves predictably under real conditions. If you keep approaching embedded systems with that mindset, you will move beyond definitions and start thinking like an engineer who can design products that actually work.

Happy learning!

- Updated 1 day ago

- Fahim
