System Design concepts: A complete guide for System Design interviews

System Design concepts are the foundational ideas that explain why systems behave the way they do. They are not diagrams, tools, or reference architectures. Concepts like statelessness, caching, consistency, and fault tolerance apply across every system you design, regardless of scale or domain.

Interviewers care about these concepts because they reveal how you think. A candidate who understands concepts can design unfamiliar systems confidently. A candidate who memorizes architectures struggles when requirements change.

Strong System Design answers are built from concepts upward. You start with principles, apply them to constraints, and then derive an architecture. This is the opposite of memorization.

Why concepts outlast tools and technologies

Technologies evolve quickly. Concepts do not. The same ideas that apply to modern cloud systems applied to distributed systems decades ago. Load balancing, replication, and failure handling are not new problems.

Interviewers, therefore, intentionally avoid testing tool knowledge. They want to see whether you can reason without leaning on vendor-specific solutions. Conceptual clarity is what allows you to design systems you have never seen before.

How concepts help you handle ambiguity

System Design interviews are intentionally ambiguous. Interviewers rarely give full requirements because they want to see how you reason under uncertainty. Concepts give you a stable foundation when details are missing.

When you understand concepts deeply, you can say things like:

- “This depends on consistency requirements.”

- “This bottleneck appears as traffic grows.”

- “This trade-off favors availability over correctness.”

Those statements signal senior-level thinking.

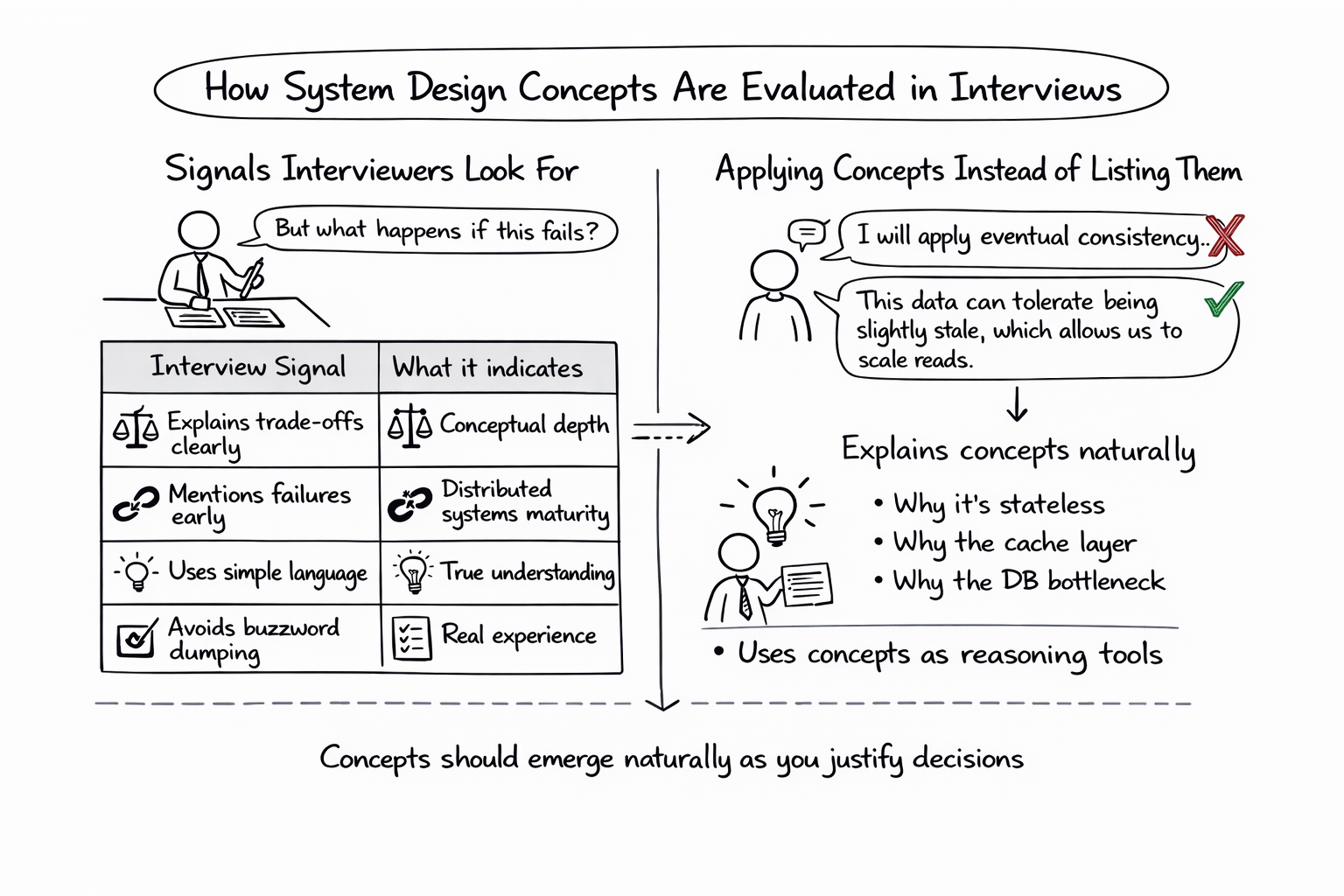

How System Design concepts are evaluated in interviews

System Design interviews are interactive discussions. Interviewers are not checking off whether you mentioned caching or load balancers. They are evaluating how and when you introduce concepts and whether you can explain their impact.

Concepts should emerge naturally as you justify decisions. For example, you don’t say “I will apply eventual consistency.” You say, “This data can tolerate being slightly stale, which allows us to scale reads.”

Signals interviewers look for

Interviewers listen for clear thinking, not fancy terminology. They pay attention to how you respond to follow-up questions and how you adjust your design when constraints change.

| Interview signal | What it indicates |
| --- | --- |
| Explains trade-offs clearly | Conceptual depth |
| Mentions failures early | Distributed systems maturity |
| Uses simple language | True understanding |
| Avoids buzzword dumping | Real experience |

These signals often matter more than the final diagram.

Applying concepts instead of listing them

A common mistake is listing concepts explicitly, as if they were checklist items. Strong candidates weave concepts into the design narrative. They explain why a system is stateless, why a cache is placed at a certain layer, or why a database becomes a bottleneck.

Interviewers reward candidates who treat concepts as reasoning tools, not vocabulary words.

System boundaries and separation of concerns

Every System Design begins with boundaries. Boundaries define where responsibilities start and end. They separate clients from services, services from data stores, and internal systems from external dependencies.

Poorly defined boundaries lead to tightly coupled systems that are hard to scale and harder to change. Interviewers often identify weak candidates by how quickly their designs become tangled.

Separation of concerns reduces complexity

Separation of concerns allows each part of the system to evolve independently. Clients should not know about database schemas. Services should not assume how other services store data. External dependencies should be isolated so their failures do not cascade.

This separation simplifies reasoning. When something fails, you know where to look. When something needs to scale, you know which boundary to extend.

Boundaries and failure isolation

Clear boundaries also limit the blast radius. If a recommendation service fails, it should not take down checkout. If a cache becomes unavailable, the system should fall back to a slower but correct path.

Interviewers often probe failure scenarios to see whether your boundaries hold under stress.
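A minimal sketch of this kind of isolation follows. The names `fetch_recommendations` and `render_checkout` are hypothetical, and the recommendation call is hard-coded to fail so the fallback path is visible. The point is only that the optional dependency is wrapped at the boundary, so its failure does not reach checkout.

```python
def fetch_recommendations(user_id: str) -> list[str]:
    """Hypothetical call to a recommendation service; here it always fails."""
    raise ConnectionError("recommendation service unavailable")


def render_checkout(user_id: str, cart: list[str]) -> dict:
    """Build the checkout page. Recommendations are optional, the cart is not."""
    try:
        recommendations = fetch_recommendations(user_id)
    except ConnectionError:
        # The failure stops at this boundary: checkout still works,
        # just without the optional recommendations panel.
        recommendations = []
    return {"user": user_id, "cart": cart, "recommendations": recommendations}


page = render_checkout("u1", ["book"])
```

In an interview, the equivalent statement is simply: "If recommendations are down, checkout renders without them."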

| Boundary | What it protects |
| --- | --- |
| Client ↔ Service | Internal complexity |
| Service ↔ Database | Data integrity |
| Service ↔ External API | Cascading failures |

Strong candidates define boundaries early and rely on them throughout the design.

Stateless vs stateful systems

Stateless systems do not store long-lived data between requests. Each request can be handled independently by any instance. This property enables horizontal scaling, easy recovery, and simple deployment.

Interviewers expect candidates to instinctively prefer stateless services unless there is a compelling reason not to.

Why state introduces complexity

State requires coordination. Once a service stores state, it must handle consistency, recovery, replication, and concurrency. State makes failures harder to reason about and scaling more complex.

This does not mean state is bad. It means state must be handled deliberately.

Externalizing state for scalability

Modern System Design pushes state into specialized systems such as databases, caches, or object storage. This allows application services to remain stateless while relying on stateful systems designed for durability and consistency.

You should be comfortable explaining why state belongs in these systems and what trade-offs that choice introduces.
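One way to picture externalized state is the sketch below. `SessionStore` and `CounterService` are illustrative names, and the in-memory dictionary is a stand-in for a real cache or database. Two service instances share one store, so either can continue a user's session.

```python
class SessionStore:
    """Stand-in for an external store such as a cache or database (hypothetical)."""

    def __init__(self) -> None:
        self._data: dict[str, int] = {}

    def increment(self, key: str) -> int:
        self._data[key] = self._data.get(key, 0) + 1
        return self._data[key]


class CounterService:
    """Stateless service instance: it keeps no per-user data of its own."""

    def __init__(self, store: SessionStore) -> None:
        self.store = store

    def handle(self, user_id: str) -> int:
        # All long-lived state lives in the shared store, so any instance
        # can serve any request.
        return self.store.increment(user_id)


store = SessionStore()
instance_a = CounterService(store)
instance_b = CounterService(store)

first = instance_a.handle("alice")
second = instance_b.handle("alice")  # a different instance continues the count
```

The cost is visible in `handle`: every request now depends on a call to the store, which in a real deployment would be a network hop.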

Trade-offs between stateless and stateful designs

Stateless designs simplify scaling but can increase latency due to external calls. Stateful designs can be faster locally but are harder to scale and recover.

| Design choice | Key benefit | Key cost |
| --- | --- | --- |
| Stateless service | Easy scaling | External dependency |
| Stateful service | Lower latency | Coordination complexity |

Interviewers want to see that you recognize these trade-offs and choose intentionally rather than by default.

Scalability concepts: handling growth predictably

In System Design, scalability does not mean “supporting more users.” It means maintaining acceptable performance as load increases, without linear increases in cost or operational complexity. Interviewers want to see that you can reason about how a system behaves as it grows, not just whether it works at a small scale.

A system that works perfectly for a thousand users but collapses at ten thousand is not scalable, even if it technically supports more traffic.

Horizontal vs vertical scaling

Vertical scaling increases the power of a single machine. It is simple and often the first step in a system’s life. Horizontal scaling adds more machines and distributes work across them. It is more complex but provides long-term growth.

Interviewers expect candidates to understand why most large systems favor horizontal scaling despite the added coordination and operational overhead.

| Scaling type | Primary advantage | Primary limitation |
| --- | --- | --- |
| Vertical | Simplicity | Hard upper limits |
| Horizontal | Long-term growth | Coordination complexity |

What prevents systems from scaling

Most scalability limits come from state and coordination. Shared mutable state, synchronous dependencies, and global locks restrict how far a system can scale. Stateless services, partitioned data, and asynchronous workflows remove these bottlenecks.

Strong candidates identify why a system cannot scale before proposing solutions.
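To make "partitioned data" concrete, here is a small sketch that routes each key to one of several partitions with a stable hash. The partition count, the `put`/`get` helpers, and the use of SHA-256 are assumptions chosen for illustration, not a recommendation for any particular system.

```python
import hashlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition using a stable hash (not Python's salted hash())."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


# Each dict stands in for an independent shard with its own storage.
partitions = [dict() for _ in range(4)]


def put(key: str, value) -> None:
    partitions[partition_for(key, len(partitions))][key] = value


def get(key: str):
    return partitions[partition_for(key, len(partitions))].get(key)


put("user:42", {"plan": "pro"})
found = get("user:42")
```

Because each key lives on exactly one partition, writes to different keys need no shared lock. Simple modulo hashing like this remaps most keys when the partition count changes, which is a limitation worth naming if an interviewer asks about resharding.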

Scalability as a design mindset

Interviewers look for candidates who think ahead. Even if a system does not need to scale immediately, you should be able to explain how it would scale and which components would become bottlenecks first.

This forward-looking mindset signals senior-level thinking.

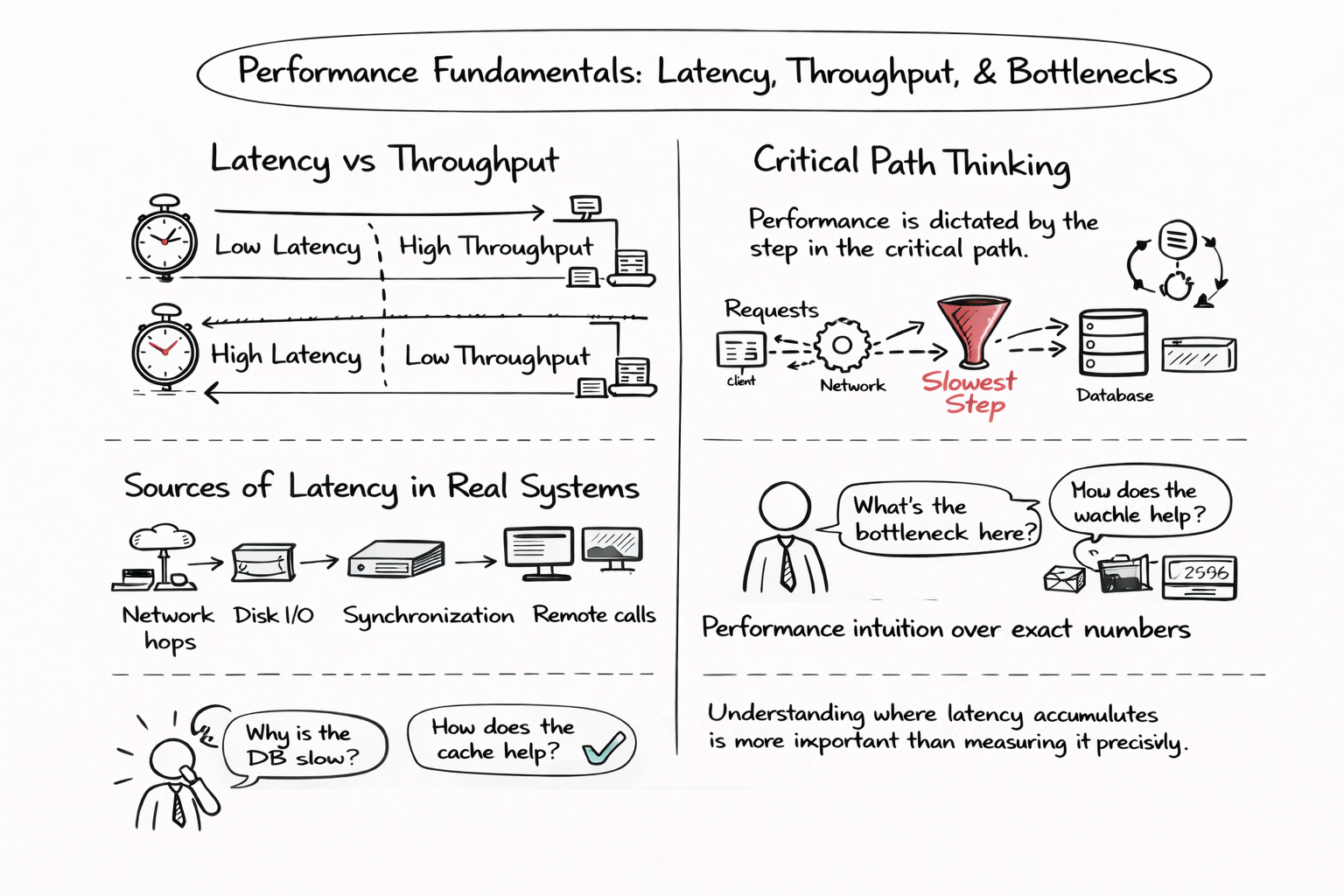

Performance fundamentals: latency, throughput, and bottlenecks

Latency measures how long a single request takes. Throughput measures how many requests a system can handle over time. Optimizing one often hurts the other. Interviewers want to see that you understand this tension.

A system with low latency but low throughput may collapse under load. A system with high throughput but high latency may feel slow to users.
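A rough way to connect the two numbers is Little's law: requests in flight equal throughput multiplied by latency. The sketch below rearranges it under a simplifying assumption (each worker serves one request at a time, with no queueing), so treat the result as an upper bound rather than a prediction. The function name and the sample figures are illustrative.

```python
def max_throughput_rps(workers: int, latency_ms: float) -> float:
    """Upper bound on requests per second when each worker serves one request at a time."""
    # Little's law rearranged: throughput = concurrency / latency.
    return workers * 1000 / latency_ms


baseline = max_throughput_rps(workers=8, latency_ms=50)
slower = max_throughput_rps(workers=8, latency_ms=100)
```

With the same eight workers, doubling latency from 50 ms to 100 ms halves the ceiling from 160 to 80 requests per second.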

Critical path thinking

Throughput is capped by the slowest step in the critical path, and latency is the sum of the steps along it. Adding more hardware does not help if requests are serialized through a single bottleneck.

Interviewers often ask, “What is the bottleneck here?” to test whether you can reason about performance structurally.
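The bottleneck question can be answered with very little arithmetic. In this sketch, the stage names and capacity figures are made up. End-to-end throughput is taken as the minimum capacity across stages, which assumes every request passes through all of them.

```python
def find_bottleneck(capacity_rps: dict[str, int]) -> tuple[str, int]:
    """Return the stage with the lowest capacity and the throughput it allows."""
    stage = min(capacity_rps, key=capacity_rps.get)
    return stage, capacity_rps[stage]


stages = {"load_balancer": 10_000, "app_servers": 2_000, "database": 500}
before = find_bottleneck(stages)

# Doubling a stage that is not the bottleneck changes nothing end to end.
stages["app_servers"] = 4_000
after = find_bottleneck(stages)
```

Both calls report the database at 500 requests per second, which is why scaling the application tier alone would not help here.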

Sources of latency in real systems

Latency comes from many places: network hops, disk I/O, synchronization, and remote calls. Strong candidates explain performance issues in terms of system structure rather than micro-optimizations.

Understanding where latency accumulates is more important than measuring it precisely.

Performance intuition over exact numbers

Interviewers rarely expect calculations. They expect intuition. Can you explain why a database slows down under load? Can you predict how caching changes the critical path?

If you can reason clearly, the lack of numbers is not a problem.

Caching as a System Design concept

Caching exists to reduce latency and offload expensive operations. It works because many systems exhibit repeated access patterns. However, caching introduces correctness risks, which is why interviewers probe it deeply.

A strong answer treats caching as an optimization layered on top of a correct system, not as a core dependency.

Where caching is applied

Caching can exist at multiple layers: client, edge, application, or database. Each layer offers different benefits and introduces different risks.

| Cache layer | Main benefit | Main risk |
| --- | --- | --- |
| Client | Lowest latency | Stale data |
| CDN | Global scale | Invalidation complexity |
| Application | Reduced DB load | Consistency bugs |

Interviewers expect candidates to justify why a cache exists at a specific layer.

Cache invalidation and staleness

Cache invalidation is one of the hardest problems in System Design because it ties performance to correctness. Strong candidates explain how stale data is tolerated, detected, or corrected.

Saying “we invalidate the cache” without explaining how or when is a red flag.
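Two common answers to "how and when" are a time-to-live, which bounds how stale an entry can get, and explicit invalidation after a write. The `TTLCache` class below is a hypothetical sketch of both. The injectable `clock` parameter is an assumption added so the example runs without sleeping.

```python
import time


class TTLCache:
    """Tiny in-memory cache with expiry and explicit invalidation (illustrative only)."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic) -> None:
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._entries: dict[str, tuple[object, float]] = {}  # key -> (value, expires_at)

    def get(self, key: str):
        entry = self._entries.get(key)
        if entry is None:
            return None  # miss
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._entries[key]  # too old: treat as a miss
            return None
        return value

    def put(self, key: str, value) -> None:
        self._entries[key] = (value, self.clock() + self.ttl_seconds)

    def invalidate(self, key: str) -> None:
        self._entries.pop(key, None)


now = [0.0]  # fake clock so the example runs without sleeping
cache = TTLCache(ttl_seconds=30, clock=lambda: now[0])

cache.put("profile:1", "Ada")
fresh = cache.get("profile:1")      # within the TTL

now[0] = 31.0
expired = cache.get("profile:1")    # past the TTL, treated as a miss

cache.put("profile:1", "Ada")
cache.invalidate("profile:1")       # explicit invalidation after a write
after_write = cache.get("profile:1")
```

The TTL states the staleness the system tolerates (here, up to 30 seconds), while `invalidate` covers data that must not be served stale after an update.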

Failure scenarios involving caches

Caches fail. When they do, systems must fall back to a slower but correct path. Interviewers look for candidates who design cache-aside patterns rather than cache-dependent systems.

Understanding cache failure modes signals production experience.
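A cache-aside read with a fallback can be sketched as follows. `FlakyCache`, `Database`, and `cache_aside_read` are hypothetical names, and both stores are in-memory stand-ins. What matters is the control flow: a cache error is treated like a miss, never like a request failure.

```python
class FlakyCache:
    """Hypothetical cache client whose calls fail while `available` is False."""

    def __init__(self) -> None:
        self.data: dict[str, str] = {}
        self.available = True

    def get(self, key: str):
        if not self.available:
            raise ConnectionError("cache unavailable")
        return self.data.get(key)

    def set(self, key: str, value: str) -> None:
        if not self.available:
            raise ConnectionError("cache unavailable")
        self.data[key] = value


class Database:
    """Stand-in for the source of truth; counts reads so the offload is visible."""

    def __init__(self, rows: dict[str, str]) -> None:
        self.rows = rows
        self.reads = 0

    def get(self, key: str):
        self.reads += 1
        return self.rows.get(key)


def cache_aside_read(cache: FlakyCache, db: Database, key: str):
    try:
        cached = cache.get(key)
        if cached is not None:
            return cached
    except ConnectionError:
        pass  # cache is down: fall through to the slower but correct path
    value = db.get(key)
    if value is not None:
        try:
            cache.set(key, value)  # best effort; a failed fill is not an error
        except ConnectionError:
            pass
    return value


cache = FlakyCache()
db = Database({"user:1": "Ada"})

first = cache_aside_read(cache, db, "user:1")   # miss, filled from the database
second = cache_aside_read(cache, db, "user:1")  # hit, no database read
reads_while_healthy = db.reads

cache.available = False
third = cache_aside_read(cache, db, "user:1")   # cache down, database answers
```

The design choice to discuss is capacity: if the cache disappears, the database receives every read, so it has to survive that load at least briefly.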

Data modeling and data access patterns

Data modeling decisions determine how systems scale, perform, and fail. Many System Design problems are data problems in disguise. Interviewers, therefore, probe data models early and deeply.

Strong candidates design systems around data access patterns rather than features.

Read-heavy vs write-heavy workloads

Understanding whether a system is read-heavy or write-heavy informs nearly every design decision. Read-heavy systems benefit from denormalization and caching. Write-heavy systems benefit from normalization and batching.

| Workload type | Typical design choice |
| --- | --- |
| Read-heavy | Denormalized views |
| Write-heavy | Normalized schema |
| Mixed | CQRS-style separation |

Normalization vs denormalization

Normalization reduces duplication and simplifies updates. Denormalization improves read performance but introduces consistency challenges. Interviewers want to see that you understand both sides of this trade-off.

There is no universally correct choice. The right decision depends on access patterns and consistency requirements.
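The trade-off is easy to see with a toy data set. In the sketch below, the `users` and `posts` structures and the helper names are invented for illustration. The normalized read needs a second lookup, while the denormalized copy makes a rename touch every post.

```python
# Normalized: the author's name is stored once, posts reference it by id.
users = {1: {"name": "Ada"}}
posts = [
    {"id": 10, "author_id": 1, "title": "Hello"},
    {"id": 11, "author_id": 1, "title": "Again"},
]

# Denormalized: each post carries its own copy of the author's name.
posts_denormalized = [
    {"id": 10, "author_id": 1, "author_name": "Ada", "title": "Hello"},
    {"id": 11, "author_id": 1, "author_name": "Ada", "title": "Again"},
]


def read_normalized(post: dict) -> dict:
    # The read needs a second lookup (a join) to resolve the author.
    return {"title": post["title"], "author": users[post["author_id"]]["name"]}


def rename_user(user_id: int, new_name: str) -> int:
    """Apply a rename to both models; return how many denormalized copies changed."""
    users[user_id]["name"] = new_name  # normalized model: a single write
    touched = 0
    for post in posts_denormalized:    # denormalized model: one write per copy
        if post["author_id"] == user_id:
            post["author_name"] = new_name
            touched += 1
    return touched


before = read_normalized(posts[0])
copies_updated = rename_user(1, "Grace")
```

If the loop in `rename_user` were interrupted halfway, the denormalized posts would disagree with each other, which is the consistency challenge mentioned above.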

Data ownership and service boundaries

Each piece of data should have a single owner responsible for correctness. Sharing writable data across services leads to tight coupling and inconsistent state.

Interviewers reward candidates who clearly define ownership and enforce access through APIs rather than direct database access.

Storage and database concepts

In System Design interviews, storage choices are evaluated by guarantees, not brand names. Interviewers want to hear why a storage system fits the workload: what it guarantees about consistency, durability, latency, and scalability.

A strong answer starts with access patterns and correctness requirements, then derives the storage model. Weak answers start with a database name and work backward.

Relational vs non-relational models

Relational databases excel at strong consistency, transactions, and complex relationships. They shine when correctness is paramount and schemas are well-defined. Non-relational systems trade some of those guarantees for scalability, flexibility, or performance.

Interviewers look for candidates who can explain these trade-offs without framing one as “better.”

Replication, durability, and read paths

Replication improves availability and read scalability but introduces lag and coordination complexity. Writes often go through a primary path; reads may be served from replicas with different freshness guarantees.

A key signal of maturity is explaining where reads come from and what freshness they guarantee, especially under failure.
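The sketch below models replication lag in the simplest possible way: writes land on a primary and reach a single replica only when `replicate()` is called. The class, its `require_fresh` flag, and the manual replication step are all assumptions made to keep the example deterministic.

```python
class ReplicatedStore:
    """Toy primary/replica pair with explicit, delayed replication (illustrative)."""

    def __init__(self) -> None:
        self.primary: dict[str, int] = {}
        self.replica: dict[str, int] = {}
        self._pending: list[tuple[str, int]] = []  # writes not yet on the replica

    def write(self, key: str, value: int) -> None:
        self.primary[key] = value
        self._pending.append((key, value))

    def replicate(self) -> None:
        """Apply pending writes to the replica, in write order."""
        for key, value in self._pending:
            self.replica[key] = value
        self._pending.clear()

    def read(self, key: str, require_fresh: bool = False):
        # Fresh reads pay the cost of going to the primary;
        # other reads accept whatever the replica has.
        source = self.primary if require_fresh else self.replica
        return source.get(key)


store = ReplicatedStore()
store.write("balance", 100)

stale = store.read("balance")                       # replica has not caught up
fresh = store.read("balance", require_fresh=True)   # served by the primary

store.replicate()
caught_up = store.read("balance")
```

A typical use of the flag is reading your own writes: route a user's reads to the primary right after they update something, and to replicas otherwise.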

Indexing and query trade-offs

Indexes speed up reads but slow down writes and increase storage cost. Interviewers probe whether candidates understand that every index is a trade-off, not a free optimization.

Strong candidates explain which queries matter most and index accordingly.
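As a rough illustration of both sides of that trade-off, the hypothetical `UserTable` below keeps one secondary index on email. Lookups through the index examine a single entry instead of scanning, but every insert performs an extra index update and the index takes extra memory.

```python
class UserTable:
    """Toy table with one secondary index on email (illustrative only)."""

    def __init__(self) -> None:
        self.rows: list[dict] = []
        self.email_index: dict[str, int] = {}  # email -> position in rows
        self.index_updates = 0

    def insert(self, name: str, email: str) -> None:
        self.rows.append({"name": name, "email": email})
        # The write-side cost of the index: one extra update per insert.
        self.email_index[email] = len(self.rows) - 1
        self.index_updates += 1

    def find_by_scan(self, email: str):
        """Return (row, rows examined) using a full scan."""
        for examined, row in enumerate(self.rows, start=1):
            if row["email"] == email:
                return row, examined
        return None, len(self.rows)

    def find_by_index(self, email: str):
        """Return (row, lookups performed) using the index."""
        position = self.email_index.get(email)
        if position is None:
            return None, 1
        return self.rows[position], 1


table = UserTable()
table.insert("Ada", "ada@example.com")
table.insert("Grace", "grace@example.com")
table.insert("Linus", "linus@example.com")

scan_row, scan_cost = table.find_by_scan("linus@example.com")
index_row, index_cost = table.find_by_index("linus@example.com")
```

If no query ever filters by email, the index is pure overhead, which is why the queries that matter should drive the choice.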

Distributed systems fundamentals

Distribution introduces new failure modes that do not exist in single-node systems. Networks are unreliable, clocks are imperfect, and components fail independently. Interviewers want to see that you design with these realities in mind.

The defining challenge is that partial failure is normal. Some parts work while others don’t.

Coordination and its hidden costs

Coordination is expensive. Leader election, consensus, and locks add latency and reduce throughput. Many scalability problems arise because systems coordinate more than necessary.

Strong candidates actively minimize coordination and explain why.

Time, ordering, and reality

Distributed systems cannot rely on a single notion of time. Ordering across machines is difficult and costly. Most systems settle for local ordering (per partition, per key) rather than global ordering.

Interviewers expect you to avoid promises of global order unless you explicitly justify the cost.
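Per-key ordering can be sketched with nothing more than a sequence counter per key. The `PartitionedLog` class is hypothetical. It guarantees order within a key and deliberately says nothing about order across keys.

```python
from collections import defaultdict


class PartitionedLog:
    """Assigns sequence numbers per key; no ordering is defined across keys."""

    def __init__(self) -> None:
        self._next_seq: dict[str, int] = defaultdict(int)
        self.events: dict[str, list[tuple[int, str]]] = defaultdict(list)

    def append(self, key: str, payload: str) -> int:
        seq = self._next_seq[key]
        self._next_seq[key] += 1
        self.events[key].append((seq, payload))
        return seq


log = PartitionedLog()
a0 = log.append("order-1", "created")
b0 = log.append("order-2", "created")
a1 = log.append("order-1", "paid")
```

Both orders start at sequence 0. Consumers can rely on "created" preceding "paid" for `order-1`, but the log offers no answer to whether `order-2` was created before `order-1` was paid.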

Designing for partitions

Network partitions are inevitable. Systems must choose how to behave when components cannot communicate. Interviewers care less about theory and more about what users experience during partitions.

Consistency, availability, and failure handling

Consistency is not binary. Systems can offer strong consistency, eventual consistency, or guarantees in between. The right choice depends on user expectations and business risk.

Strong candidates explain what consistency users see, not just what the database provides.

Availability trade-offs and user experience

High availability often requires tolerating stale data or partial functionality. Interviewers look for candidates who can explain how systems degrade gracefully rather than fail completely.

Graceful degradation is often a better answer than strict correctness everywhere.

Failure-handling mechanisms that work together

Retries without timeouts make failures worse. Timeouts without retries reduce availability. Idempotency enables safe retries. These mechanisms must be designed as a coordinated system.

| Mechanism | Purpose | Common pitfall |
| --- | --- | --- |
| Timeouts | Bound waiting | Too aggressive |
| Retries | Recover from transient failures | Retry storms |
| Idempotency | Safe retries | Poor key design |
| Circuit breakers | Protect dependencies | Over-triggering |

Interviewers reward candidates who explain how these pieces interact.
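Here is one way the first three mechanisms fit together, as a sketch under stated assumptions. `PaymentService` is a hypothetical dependency that performs the charge but loses its first response, the timeout is simulated by raising `TimeoutError`, and the `sleep` parameter is injectable so the example does not actually wait.

```python
import time


class PaymentService:
    """Hypothetical dependency: applies the charge, may lose the response, dedupes by key."""

    def __init__(self, lost_responses: int) -> None:
        self.lost_responses = lost_responses
        self.results: dict[str, str] = {}
        self.charges = 0

    def charge(self, idempotency_key: str, amount: int) -> str:
        if idempotency_key not in self.results:
            self.charges += 1  # the side effect happens at most once per key
            self.results[idempotency_key] = f"charged {amount}"
        if self.lost_responses > 0:
            self.lost_responses -= 1
            raise TimeoutError("no response within the deadline")
        return self.results[idempotency_key]


def call_with_retries(operation, max_attempts: int = 3,
                      base_delay: float = 0.01, sleep=time.sleep):
    """Retry on timeout with exponential backoff; give up after max_attempts."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TimeoutError:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))


service = PaymentService(lost_responses=1)
delays: list[float] = []  # record the backoff instead of sleeping

result = call_with_retries(
    lambda: service.charge("order-42", 100),
    sleep=delays.append,
)
```

The first attempt times out after the charge was already applied. The retry is safe only because the same idempotency key is reused, so the customer is charged once. Production retry loops usually add random jitter to the delay so that many clients do not retry in lockstep.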

Designing for the unhappy path

Strong designs explain what happens when dependencies fail, not just when they succeed. Interviewers often ask follow-ups specifically to explore failure paths.

Proactively discussing failures signals real-world experience.
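A circuit breaker is one concrete unhappy-path mechanism you can walk through. The sketch below is a simplified, hypothetical version: it opens after a fixed number of consecutive failures, fails fast while open, and allows one trial call after a cooldown. The fake clock and the always-failing dependency are there only to make the behavior observable.

```python
class CircuitBreaker:
    """Simplified breaker: open after N consecutive failures, retry after a cooldown."""

    def __init__(self, failure_threshold: int, reset_after_s: float, clock) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, operation, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback()  # open: fail fast, do not touch the dependency
            self.opened_at = None  # cooldown over: allow one trial call
        try:
            result = operation()
        except ConnectionError:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0
        return result


now = [0.0]  # fake clock
attempts: list[float] = []


def flaky_dependency():
    attempts.append(now[0])
    raise ConnectionError("dependency down")


breaker = CircuitBreaker(failure_threshold=2, reset_after_s=30, clock=lambda: now[0])
results = [breaker.call(flaky_dependency, lambda: "fallback") for _ in range(3)]
calls_while_open = len(attempts)

now[0] = 31.0
breaker.call(flaky_dependency, lambda: "fallback")
calls_after_reset = len(attempts)
```

Three requests produce only two calls to the failing dependency, because the third is short-circuited. After the cooldown, a single trial call goes through and, since it fails, the circuit opens again.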

How to apply System Design concepts effectively in interviews

In interviews, concepts should appear as part of your reasoning, not as declarations. You introduce statelessness when scaling is discussed. You introduce consistency when correctness is questioned.

This makes your answer feel grounded and thoughtful rather than rehearsed.

Structuring answers for clarity

A clear structure helps interviewers follow your thinking. Start with requirements, move to a high-level design, then dive into critical areas like data, scaling, and failures.

Concepts anchor each transition and justify each decision.

Adapting depth based on signals

Interviewers will guide the depth with their questions. Strong candidates adjust in real time, going deeper where probed and staying high-level elsewhere.

This adaptability is often the deciding factor between “good” and “excellent.”

Communicating trade-offs confidently

Every design decision has downsides. Interviewers trust candidates who acknowledge trade-offs and explain why they chose one side anyway.

Confidence comes from clarity, not certainty.

Using structured prep resources effectively

Use Grokking the System Design Interview on Educative to learn curated patterns and practice full System Design problems step by step. It’s one of the most effective resources for building repeatable System Design intuition.


Final thoughts

System Design concepts are the mental tools that make complex systems understandable. Once internalized, they allow you to approach any System Design problem with confidence, even ones you have never seen before.

Interviews reward clarity of thought, not complexity of solutions. Candidates who reason from concepts can adapt, justify, and explain. Candidates who memorize architectures struggle when assumptions change.

If you focus on mastering these concepts (boundaries, statelessness, scalability, performance, data, consistency, and failure handling), System Design interviews stop feeling like puzzles and start feeling like conversations you are prepared to lead.

- Updated 3 months ago

- Fahim

- 13 min read