Back of the Envelope Calculation: A Practical Guide for System Design Interviews

When you’re asked to do a back-of-the-envelope calculation in a System Design interview, the interviewer is not testing whether you remember formulas. They are watching how you think under uncertainty. System Design interviews are deliberately vague, and estimation questions amplify that ambiguity. You’re expected to make reasonable assumptions, explain them clearly, and move forward without freezing.

What interviewers care about most is whether you can reason about scale. They want to see if you understand how systems behave when users, traffic, and data grow by orders of magnitude. Back-of-the-envelope calculation becomes the quickest way for them to assess whether your intuition matches reality.

If you can estimate confidently, you signal that you’ve built or at least deeply thought about real systems. If you struggle, it often suggests your experience is mostly theoretical.

Why “Roughly Correct” Beats “Precisely Wrong”

In real-world engineering, perfect numbers almost never exist early in the design process. The same principle applies in interviews. A rough estimate that lands in the correct order of magnitude is far more valuable than a detailed calculation based on shaky assumptions.

Interviewers actively prefer candidates who say, “Let me sanity-check this number,” over candidates who rush into precise math without context. Back-of-the-envelope calculation shows that you understand uncertainty and can still make progress. That mindset is essential when designing distributed systems under real constraints.

How This Skill Separates Strong Candidates From Average Ones

At junior levels, interviewers might forgive weak estimation if your architecture is solid. As you move into mid-level and senior roles, estimation becomes non-negotiable. Strong candidates naturally anchor their designs in numbers. They talk about traffic before databases, capacity before sharding, and growth before optimization.

When you consistently use back-of-the-envelope calculations, your System Designs feel grounded instead of speculative. Interviewers notice that immediately, even if they never explicitly say it.

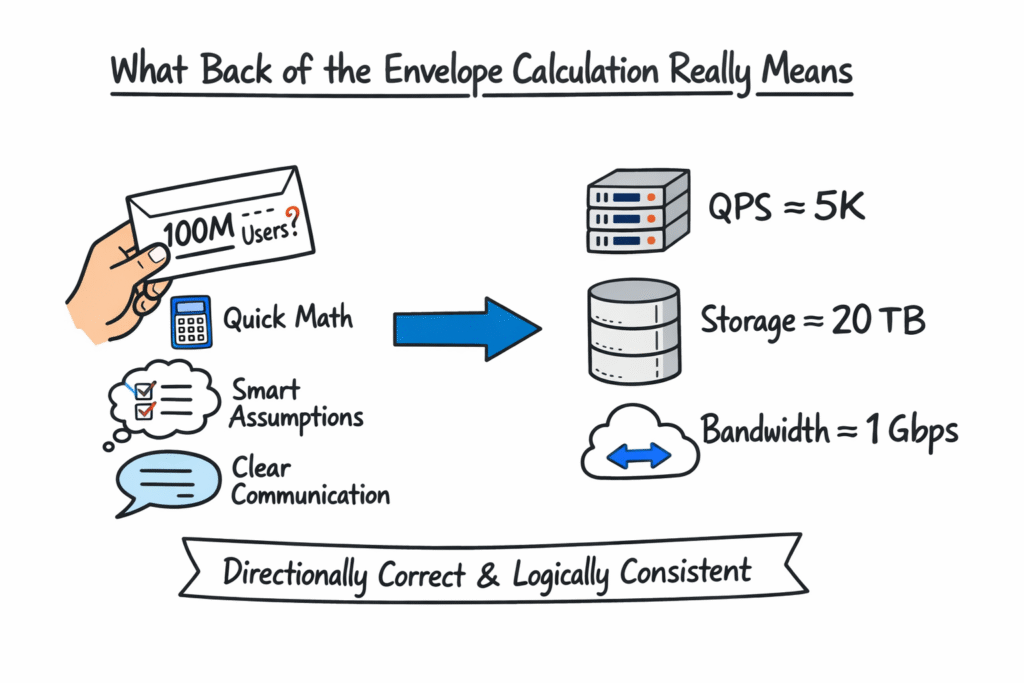

What Back-Of-The-Envelope Calculation Really Means

Back-of-the-envelope calculation is the art of making fast, reasonable estimates using simple math, realistic assumptions, and clear communication. In System Design interviews, it’s your way of translating abstract ideas like “millions of users” into concrete numbers like QPS, storage size, and bandwidth.

You’re not expected to be exact. You are expected to be directionally correct and logically consistent.

What It Is Not About

Back-of-the-envelope calculation is not about mental math tricks or memorizing constants. It’s not a test of how fast you can multiply large numbers in your head. Interviewers don’t care whether you call a figure one million or 1.2 million. They care whether your reasoning makes sense.

It’s also not about optimizing prematurely. Estimation comes before optimization. If you jump straight to complex solutions without understanding scale, your design often collapses under scrutiny.

Why Interviewers Ask Estimation Questions Early

Interviewers often introduce back-of-the-envelope calculations early in the System Design interview because it sets the foundation for everything that follows. Traffic estimates influence architecture. Storage estimates influence database choices. Bandwidth estimates influence caching and replication strategies.

If your numbers are wildly off early on, every downstream decision becomes harder to defend. That’s why interviewers want to see you establish scale upfront, even if the estimates are rough.

The Mental Model Interviewers Expect You To Follow

One of the most important habits you need to develop is thinking in orders of magnitude rather than exact values. Whether something is ten thousand, one million, or one billion makes a massive architectural difference. Whether it’s 1.1 million or 1.3 million usually does not.

Interviewers expect you to round aggressively and focus on scale boundaries. This allows you to move faster and reason more clearly about bottlenecks.
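To make "round aggressively" concrete, here is a minimal Python sketch with two hypothetical helpers. One snaps an estimate to the nearest power of ten on a log scale, and the other keeps a single significant figure. Neither is a standard library function. They only illustrate the habit.

```python
import math


def round_to_magnitude(x: float) -> float:
    """Snap a positive estimate to the nearest power of ten (on a log scale)."""
    if x <= 0:
        raise ValueError("estimates must be positive")
    return 10 ** round(math.log10(x))


def round_to_one_sig_fig(x: float) -> float:
    """Keep one significant figure, e.g. 2,314.8 -> 2,000.

    Python's round() rounds exact halves to even, which is fine at this precision.
    """
    if x <= 0:
        raise ValueError("estimates must be positive")
    exponent = math.floor(math.log10(x))
    return round(x / 10 ** exponent) * 10 ** exponent


# 1.1 million and 1.3 million land in the same order of magnitude...
print(round_to_magnitude(1_100_000), round_to_magnitude(1_300_000))
# ...while ten thousand and one billion are five orders apart.
print(round_to_magnitude(10_000), round_to_magnitude(1_000_000_000))
```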

Using Assumptions As A Strength

Many candidates hesitate to make assumptions because they fear being wrong. In reality, assumptions are unavoidable, and interviewers expect them. What matters is whether your assumptions are reasonable and whether you state them clearly.

When you say, “I’ll assume 20 percent of users are active daily,” you’re not locking yourself into a mistake. You’re giving the interviewer a chance to agree, adjust, or guide you. This turns estimation into a collaborative discussion rather than a guessing game.

Communicating Your Thought Process Clearly

Back-of-the-envelope calculation is as much about communication as it is about numbers. Interviewers want to follow your reasoning step by step. When you explain how you move from users to requests to storage, you demonstrate structured thinking.

Clear communication also makes it easier to recover if an assumption needs adjustment. A transparent thought process signals maturity and confidence, both of which matter heavily in System Design interviews.

Core Units You Must Be Fluent With

Strong candidates don’t pause when converting seconds to days or megabytes to gigabytes. These numbers form the basic vocabulary of System Design conversations. When you’re fluent with them, estimation feels natural instead of forced.

Interviewers rarely test obscure constants. They focus on whether you understand scale, growth, and constraints.

Commonly Used Time And Traffic Units

You will constantly move between time units and request rates during back-of-the-envelope calculations. Being comfortable with these conversions helps you maintain flow during the interview.

| Concept | Approximate Value |
| --- | --- |
| Seconds In A Minute | 60 |
| Minutes In An Hour | 60 |
| Hours In A Day | 24 |
| Seconds In A Day | ~86,400 |
| Seconds In A Year | ~31.5 Million |
| Peak-To-Average Traffic Ratio | 3x–10x |

These approximations are more than sufficient for interview-level estimation.
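If you want to double-check the time conversions in the table, a few lines of Python are enough. The ~100,000-seconds-per-day figure below is a common interview shortcut rather than part of the table. It makes division easy at the cost of understating average rates by roughly 14 percent.

```python
SECONDS_PER_MINUTE = 60
MINUTES_PER_HOUR = 60
HOURS_PER_DAY = 24

SECONDS_PER_DAY = SECONDS_PER_MINUTE * MINUTES_PER_HOUR * HOURS_PER_DAY  # 86,400
SECONDS_PER_YEAR = SECONDS_PER_DAY * 365                                 # 31,536,000 (~31.5 million)

# Shortcut: treat a day as ~100,000 seconds for quick mental division.
SECONDS_PER_DAY_SHORTCUT = 100_000


def per_day_to_per_second(events_per_day: float, exact: bool = True) -> float:
    """Convert a daily count into an average per-second rate."""
    divisor = SECONDS_PER_DAY if exact else SECONDS_PER_DAY_SHORTCUT
    return events_per_day / divisor


print(per_day_to_per_second(1_000_000))               # ~11.6 events/s
print(per_day_to_per_second(1_000_000, exact=False))  # 10.0 events/s
```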

Data Size And Storage Units You Should Instinctively Know

Storage estimation appears in nearly every System Design interview. Whether you’re designing a feed, a chat system, or a logging pipeline, you need to reason about data size quickly.

| Unit | Approximate Size |
| --- | --- |
| 1 KB | 1,000 Bytes |
| 1 MB | 1 Million Bytes |
| 1 GB | 1 Billion Bytes |
| 1 TB | 1 Trillion Bytes |
| Text Record | 100–500 Bytes |
| Image | 100 KB–1 MB |
| Video Minute | 5–10 MB |

You don’t need perfect accuracy here. You need consistency and realism.
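A small formatter keeps storage arithmetic honest. This sketch uses the decimal (powers of 1,000) units from the table. Binary units such as KiB (1,024 bytes) run about 2.4 percent larger at the kilobyte level and about 10 percent larger at the terabyte level, which rarely matters at interview precision. The image count in the usage line is a hypothetical example.

```python
KB = 1_000
MB = 1_000 * KB
GB = 1_000 * MB
TB = 1_000 * GB


def human_bytes(n: float) -> str:
    """Format a byte count with decimal (SI) units, largest unit first."""
    for unit, size in (("TB", TB), ("GB", GB), ("MB", MB), ("KB", KB)):
        if n >= size:
            return f"{n / size:.1f} {unit}"
    return f"{n:.0f} B"


# Hypothetical: one million images at ~500 KB each.
print(human_bytes(1_000_000 * 500 * KB))  # 500.0 GB
```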

Memory And Disk Intuition Interviewers Expect

Interviewers also expect you to understand rough memory and disk capabilities without looking them up. You should know that memory is faster and more expensive than disk, and that caching only works if your working set fits reasonably in memory.

These intuitions guide later decisions about caching, replication, and system bottlenecks, all of which build directly on your back-of-the-envelope calculations.

A Step-By-Step Framework For Back-Of-The-Envelope Calculation

In System Design interviews, the biggest mistake you can make is treating a back-of-the-envelope calculation as a one-off math exercise. Strong candidates don’t improvise wildly from question to question. They follow a repeatable mental framework that keeps them calm, structured, and clear.

Interviewers recognize this immediately. When your estimation follows a predictable flow, it signals experience. It shows that you’ve done this before and that you’re not guessing under pressure.

Step One: Clarify What You Are Estimating

Before you touch any numbers, you need to be crystal clear about the goal of the calculation. Are you estimating traffic, storage, bandwidth, or memory? Many candidates jump straight into math without aligning on scope, which leads to confusion later.

You should explicitly state what you are trying to estimate and why it matters to the system. This anchors the discussion and gives the interviewer confidence that your calculation is purposeful, not random.

Step Two: State Assumptions Out Loud

Assumptions are the backbone of back-of-the-envelope calculation. You should never hide them. When you state assumptions clearly, you make your reasoning easy to follow and easy to correct.

This is also where interviews become collaborative. Interviewers often respond with small adjustments that guide you toward more realistic numbers. That interaction is a positive signal, not a failure.

Step Three: Break The Problem Into Smaller Pieces

Large estimation problems become manageable when you decompose them. Instead of estimating everything at once, you move step by step. Users turn into actions. Actions turn into requests. Requests turn into data and traffic.

This decomposition mirrors how real systems are designed, which is exactly why interviewers value it.

Step Four: Estimate Each Component Independently

Once the problem is broken down, you estimate each part using simple math and rounded numbers. You avoid unnecessary precision and focus on directionally correct values.

At this stage, speed matters more than precision. You want to keep the conversation moving while maintaining logical consistency.

Step Five: Combine Results And Sanity Check

The final step is combining your estimates and doing a sanity check. If the final number feels wildly off, you pause and reassess. Interviewers love it when candidates self-correct instead of blindly pushing forward.

Sanity checks demonstrate intuition, which is one of the hardest skills to fake in System Design interviews.
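One way to make the sanity check mechanical is to derive the same quantity twice: once bottom-up from user behavior, and once from an independent anchor such as a figure the interviewer gives you or a known comparable system. You then flag any disagreement larger than an order of magnitude. The helper names and the one-order tolerance below are assumptions for illustration, not a standard rule.

```python
import math


def orders_apart(a: float, b: float) -> float:
    """Number of orders of magnitude separating two positive estimates."""
    if a <= 0 or b <= 0:
        raise ValueError("estimates must be positive")
    return abs(math.log10(a) - math.log10(b))


def sanity_check(bottom_up: float, independent: float, tolerance_orders: float = 1.0) -> bool:
    """True if two independently derived estimates agree within the tolerance."""
    return orders_apart(bottom_up, independent) <= tolerance_orders


# Bottom-up peak QPS of ~11,500 versus two hypothetical independent anchors.
print(sanity_check(11_500, 20_000))     # True: same ballpark
print(sanity_check(11_500, 2_000_000))  # False: pause and revisit assumptions
```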

Traffic Estimation: Users, Requests, And QPS

Traffic estimation almost always begins with users. Before you think about servers, databases, or load balancers, you need to understand how many people are using the system and how often.

You typically start with total users, then narrow down to daily active users, and finally focus on peak usage patterns. This layered approach keeps estimates realistic and defensible.

Translating User Behavior Into Requests

Users do not generate traffic just by existing. They generate traffic by performing actions. Each action usually maps to one or more backend requests.

You should explicitly describe what a typical user does and how frequently they do it. This step transforms abstract user counts into concrete request volumes, which interviewers care about far more.

Calculating Average QPS And Peak QPS

Once you have total daily requests, converting them into queries per second is straightforward. You divide by the number of seconds in a day to get average QPS.

However, interviews rarely care about averages alone. Systems must handle peaks. You should always estimate peak QPS by applying a reasonable multiplier to the average. This shows awareness of real-world usage patterns.

| Metric | Example Value |
| --- | --- |
| Daily Active Users | 10 Million |
| Requests Per User Per Day | 20 |
| Total Daily Requests | 200 Million |
| Average QPS | ~2,300 |
| Peak QPS (5x) | ~11,500 |

The exact numbers matter less than the reasoning behind them.
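The table's arithmetic can be reproduced with a short function. The 5x peak multiplier mirrors the table and sits inside the 3x to 10x range mentioned earlier. Treat it as an assumption to confirm with the interviewer. Unrounded, the outputs come to about 2,315 and 11,574 QPS, which the table rounds to ~2,300 and ~11,500.

```python
SECONDS_PER_DAY = 86_400


def traffic_estimate(dau: int, requests_per_user_per_day: float, peak_multiplier: float = 5.0):
    """Return (total daily requests, average QPS, peak QPS)."""
    total_daily = dau * requests_per_user_per_day
    avg_qps = total_daily / SECONDS_PER_DAY
    peak_qps = avg_qps * peak_multiplier
    return total_daily, avg_qps, peak_qps


total, avg, peak = traffic_estimate(dau=10_000_000, requests_per_user_per_day=20)
print(f"daily requests: {total:,.0f}")  # 200,000,000
print(f"average QPS:    {avg:,.0f}")    # 2,315
print(f"peak QPS (5x):  {peak:,.0f}")   # 11,574
```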

Common Traffic Estimation Mistakes Interviewers Notice

One frequent mistake is underestimating peak traffic. Another is assuming uniform usage throughout the day. Interviewers immediately notice when candidates ignore traffic spikes, time zones, or bursty behavior.

Avoiding these mistakes instantly strengthens your credibility.

Storage Estimation: Data Models, Growth, And Retention

Storage estimation influences nearly every architectural decision. Whether you choose a relational database, a NoSQL store, or object storage depends heavily on how much data you expect to store and how fast it grows.

Interviewers expect you to estimate storage early because it prevents unrealistic designs from forming.

Estimating Per-Record Size

Storage estimation usually starts at the record level. You estimate how much space a single record occupies, including metadata and identifiers. You do not aim for byte-perfect accuracy. You aim for a reasonable range.

Once you have a per-record estimate, scaling becomes simple multiplication.

Calculating Total Storage Requirements

After estimating record size, you multiply by the number of records generated per day and then extend the calculation across months or years. This gives you a sense of long-term growth, which interviewers value highly.

| Component | Approximate Size |
| --- | --- |
| User ID | 8 Bytes |
| Timestamp | 8 Bytes |
| Text Content | 200 Bytes |
| Metadata | 100 Bytes |
| Total Per Record | ~316 Bytes |

In practice, you can round the total to about 300 bytes. Rounded numbers keep the later multiplication clean and explainable.
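Here is a sketch of the per-record sum and the growth projection. The field sizes come from the table. The write volume of 10 million new records per day is a hypothetical assumption, and the per-record size is rounded down to 300 bytes to keep the multiplication clean.

```python
# Per-record component sizes in bytes, taken from the table above.
RECORD_FIELDS = {
    "user_id": 8,
    "timestamp": 8,
    "text_content": 200,
    "metadata": 100,
}
record_bytes = sum(RECORD_FIELDS.values())  # 316

ROUNDED_RECORD_BYTES = 300        # rounded for easy mental math
RECORDS_PER_DAY = 10_000_000      # hypothetical write volume

daily_bytes = RECORDS_PER_DAY * ROUNDED_RECORD_BYTES  # 3 GB per day
yearly_bytes = daily_bytes * 365                       # ~1.1 TB per year
five_year_bytes = yearly_bytes * 5                     # ~5.5 TB over five years

for label, n in (("1 day", daily_bytes), ("1 year", yearly_bytes), ("5 years", five_year_bytes)):
    print(f"{label}: {n / 1e9:,.0f} GB")
```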

Accounting For Replication And Retention

Raw storage numbers are never the final answer. You must account for replication, backups, and retention policies. A system that stores one terabyte of raw data may require three or more terabytes in practice; three-way replication alone triples the footprint before backups are counted.

Calling this out explicitly shows maturity and real-world awareness.
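The replication and retention point can be sketched as a multiplier on raw data. The replication factor of 3, one backup copy, 20 percent free-space headroom, and 90-day retention used here are illustrative assumptions, not recommendations. With replication alone, one terabyte of raw data becomes three.

```python
def retained_raw_bytes(daily_bytes: float, retention_days: int) -> float:
    """Raw data kept on disk under a fixed retention window."""
    return daily_bytes * retention_days


def provisioned_bytes(raw_bytes: float, replication_factor: int = 3,
                      backup_copies: int = 1, headroom: float = 0.2) -> float:
    """Raw data times (replicas + backups), plus free-space headroom."""
    copies = replication_factor + backup_copies
    return raw_bytes * copies * (1 + headroom)


TB = 10**12

# Replication only: 1 TB raw -> 3 TB stored.
print(provisioned_bytes(1 * TB, backup_copies=0, headroom=0.0) / TB)  # 3.0

# Hypothetical: 3 GB/day kept for 90 days (270 GB raw), with the default multipliers.
raw = retained_raw_bytes(3 * 10**9, retention_days=90)
print(f"{provisioned_bytes(raw) / 1e9:,.0f} GB")  # 1,296 GB
```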

Bandwidth And Throughput Estimation

Many candidates focus heavily on storage and forget bandwidth. In real systems, bandwidth constraints frequently surface before storage limits, especially for read-heavy applications.

Interviewers appreciate candidates who think about data movement, not just data volume.

Estimating Read And Write Traffic Separately

Bandwidth estimation becomes much clearer when you separate reads from writes. Reads often dominate traffic, especially in content-heavy systems.

You should estimate how much data is transferred per request and multiply it by the request volume. This gives you a rough bandwidth requirement that informs caching and replication decisions.
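Splitting reads from writes takes one multiplication per direction. The 10:1 read-to-write ratio, 50 KB read responses, and 2 KB write payloads below are hypothetical numbers for a read-heavy system. Swap in whatever the interviewer agrees to.

```python
def bandwidth_bytes_per_sec(qps: float, bytes_per_request: float) -> float:
    """Data moved per second for one direction of traffic."""
    return qps * bytes_per_request


def split_reads_writes(total_qps: float, reads_per_write: float):
    """Split total QPS into (read QPS, write QPS) given a read:write ratio."""
    write_qps = total_qps / (reads_per_write + 1)
    return total_qps - write_qps, write_qps


read_qps, write_qps = split_reads_writes(total_qps=11_000, reads_per_write=10)
read_bw = bandwidth_bytes_per_sec(read_qps, 50_000)   # 10,000 reads/s x 50 KB
write_bw = bandwidth_bytes_per_sec(write_qps, 2_000)  # 1,000 writes/s x 2 KB
print(f"reads:  {read_bw / 1e6:,.0f} MB/s")   # 500 MB/s
print(f"writes: {write_bw / 1e6:,.0f} MB/s")  # 2 MB/s
```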

Translating Data Size Into Bandwidth

Once you know how much data is transferred per second, converting that into bandwidth is straightforward. You estimate bytes per second and then translate into megabytes or gigabytes per second.

| Metric | Example Value |
| --- | --- |
| Average Response Size | 50 KB |
| Peak QPS | 10,000 |
| Data Per Second | ~500 MB/s |
| Bandwidth Requirement | ~4 Gbps |

Again, the logic matters more than the exact value.
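The step people most often skip is converting bytes to bits, because network links are rated in bits per second. This sketch reproduces the table: 50 KB per response at 10,000 peak QPS.

```python
def bytes_per_sec_to_gbps(bytes_per_second: float) -> float:
    """Network capacity is quoted in bits, so multiply bytes by 8."""
    return bytes_per_second * 8 / 1e9


AVG_RESPONSE_BYTES = 50_000  # 50 KB, from the table
PEAK_QPS = 10_000            # from the table

data_per_second = AVG_RESPONSE_BYTES * PEAK_QPS    # 500,000,000 B/s = 500 MB/s
gbps = bytes_per_sec_to_gbps(data_per_second)
print(f"{data_per_second / 1e6:,.0f} MB/s ~= {gbps:.1f} Gbps")  # 500 MB/s ~= 4.0 Gbps
```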

How Bandwidth Influences Design Decisions

Bandwidth estimates naturally lead into discussions about caching, CDNs, compression, and data locality. When your numbers clearly justify these choices, your System Design feels intentional rather than generic.

Interviewers often follow up bandwidth estimates with questions about optimization. Strong estimates make those conversations much easier.

Memory Estimation And Caching Considerations

Memory estimation is where back-of-the-envelope calculation starts influencing performance decisions rather than just capacity planning. In System Design interviews, memory is often the scarcest and most expensive resource. When you reason clearly about what belongs in memory and what does not, your design immediately feels more realistic.

Interviewers pay close attention to whether you treat memory as infinite or constrained. Strong candidates always acknowledge limits and make intentional tradeoffs.

Identifying The Working Set

Not all data needs to live in memory. The key question interviewers want you to answer is what portion of your data is accessed frequently enough to justify caching.

You should describe the working set in terms of user behavior. Frequently accessed profiles, popular content, or recent activity are natural cache candidates. Rarely accessed historical data is not.

This distinction shows that your caching strategy is driven by usage patterns rather than guesswork.

Estimating Cache Size And Hit Ratio

Once you define the working set, estimating cache size becomes manageable. You estimate the number of hot records and multiply by the per-record size. The result does not need to be exact. It needs to be defensible.

| Metric | Example Estimate |
| --- | --- |
| Daily Active Users | 10 Million |
| Cached Records Per User | 5 |
| Record Size | 300 Bytes |
| Total Cache Size | ~15 GB |

You should also discuss the cache hit ratio conceptually. Interviewers expect you to understand that higher hit ratios reduce backend load and improve latency. You do not need precise percentages, but you should show awareness of their impact.
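The cache-size row is a single product, but it is worth pairing with a check against available memory. The 64 GB node size and 75 percent usable fraction are hypothetical. The optional overhead parameter stands in for the per-key bookkeeping that real cache servers add on top of raw values.

```python
import math


def cache_size_bytes(dau: int, records_per_user: int, record_bytes: int,
                     overhead: float = 0.0) -> float:
    """Hot records times record size, optionally inflated for per-key overhead."""
    return dau * records_per_user * record_bytes * (1 + overhead)


def cache_nodes_needed(total_bytes: float, node_ram_bytes: float,
                       usable_fraction: float = 0.75) -> int:
    """Nodes required if only part of each node's RAM holds cached data."""
    return math.ceil(total_bytes / (node_ram_bytes * usable_fraction))


size = cache_size_bytes(dau=10_000_000, records_per_user=5, record_bytes=300)
print(f"{size / 1e9:.0f} GB")                         # 15 GB, matching the table
print(cache_nodes_needed(size, node_ram_bytes=64e9))  # 1
```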

How Caching Affects Downstream Systems

When caching is introduced, traffic patterns change. Backend QPS drops. Database load decreases. Latency improves. These cascading effects are exactly what interviewers want you to recognize.

By tying memory estimation back to overall system behavior, you demonstrate systems thinking rather than isolated calculation.
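The cascading effect of caching can be put into numbers: only misses reach the backend, and average latency is a weighted blend of cache and database latency. The 10,000 read QPS and the 1 ms and 10 ms latencies are hypothetical round numbers chosen for illustration, and the model ignores the extra writes needed to fill the cache after a miss.

```python
def backend_qps(read_qps: float, hit_ratio: float) -> float:
    """Only cache misses fall through to the database (cache-fill writes ignored)."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return read_qps * (1.0 - hit_ratio)


def avg_latency_ms(hit_ratio: float, cache_ms: float, db_ms: float) -> float:
    """Expected latency when hits are served from cache and misses from the database."""
    return hit_ratio * cache_ms + (1.0 - hit_ratio) * db_ms


for hit in (0.0, 0.8, 0.95):
    print(f"hit ratio {hit:.0%}: backend ~{backend_qps(10_000, hit):,.0f} QPS, "
          f"avg latency ~{avg_latency_ms(hit, cache_ms=1.0, db_ms=10.0):.2f} ms")
```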

Latency, Availability, And Capacity Tradeoffs

Latency rarely appears as a raw number in back-of-the-envelope calculations, but estimation still informs it indirectly. When you estimate traffic, storage, and bandwidth, you start to see where delays might occur.

High QPS suggests contention. Large payloads suggest network latency. Cache misses suggest slower responses. Interviewers expect you to connect these dots without being prompted.

Capacity Planning At Peak Load

Capacity planning is where many candidates underperform. They design systems that work at average load but collapse under peak traffic.

Back-of-the-envelope calculation helps you reason about worst-case scenarios. You estimate peak QPS, add headroom and redundancy, and confirm that the system can absorb failures without significant degradation. This signals senior-level thinking.
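A common way to turn peak QPS into a fleet size is to divide by per-server capacity at a target utilization, then add spare capacity so that one failure does not overload the rest. The 1,000 QPS per server, 70 percent target utilization, and single spare (N+1) are assumptions for this sketch. Real per-server throughput has to come from benchmarks.

```python
import math


def servers_needed(peak_qps: float, qps_per_server: float,
                   target_utilization: float = 0.7, spare: int = 1) -> int:
    """Servers to carry peak load below a target utilization, plus N+spare redundancy."""
    if not 0.0 < target_utilization <= 1.0:
        raise ValueError("target_utilization must be in (0, 1]")
    base = math.ceil(peak_qps / (qps_per_server * target_utilization))
    return base + spare


# Sizing for the average (~2,300 QPS) versus the 5x peak (~11,500 QPS).
print(servers_needed(2_300, qps_per_server=1_000))   # 5
print(servers_needed(11_500, qps_per_server=1_000))  # 18
```

Sizing for the average would leave the fleet at roughly a quarter of what the peak requires, which is exactly the collapse described above.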

Understanding Redundancy And Replication Costs

High availability is not free. Replication increases storage. Redundant services increase compute. Backups consume bandwidth.

Interviewers want to see that you understand these costs and factor them into your estimates. When you acknowledge tradeoffs explicitly, your design feels grounded and credible.

Balancing Performance, Cost, And Reliability

At its core, System Design is about tradeoffs. Back-of-the-envelope calculation gives you a quantitative way to justify those tradeoffs instead of relying on vague statements.

When you say, “This design increases cost but reduces latency under peak load,” and your numbers support it, interviewers trust your judgment.

End-To-End Worked Examples Interviewers Love

Theory explains what a back-of-the-envelope calculation is. Worked examples show that you can actually use it under interview pressure.

Interviewers often test estimation using familiar systems because they want to observe your process, not trick you with obscure domains.

Example One: Estimating A URL Shortener

When designing a URL shortener, estimation usually begins with daily URL creations and redirect traffic. You estimate how many new URLs are created per day, how often they are accessed, and how long they are retained.

From there, you estimate storage per URL, traffic per redirect, and peak QPS. The numbers are simple, but the reasoning is powerful.
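A compact version of the URL shortener estimate might look like the following. Every input is a hypothetical assumption you would state out loud and let the interviewer adjust: 10 million new URLs per day, 100 redirects per URL created, 500 bytes per stored mapping, five-year retention, and a 5x peak.

```python
SECONDS_PER_DAY = 86_400

# Hypothetical inputs, stated as assumptions.
NEW_URLS_PER_DAY = 10_000_000
REDIRECTS_PER_URL = 100        # read:write ratio
BYTES_PER_URL = 500            # short code + long URL + metadata
RETENTION_YEARS = 5
PEAK_MULTIPLIER = 5

write_qps = NEW_URLS_PER_DAY / SECONDS_PER_DAY   # ~116 creates/s
read_qps = write_qps * REDIRECTS_PER_URL          # ~11,574 redirects/s
peak_read_qps = read_qps * PEAK_MULTIPLIER        # ~57,870 redirects/s

total_urls = NEW_URLS_PER_DAY * 365 * RETENTION_YEARS  # 18.25 billion URLs
storage_bytes = total_urls * BYTES_PER_URL             # ~9.1 TB before replication

print(f"write QPS ~{write_qps:,.0f}, read QPS ~{read_qps:,.0f}, peak read QPS ~{peak_read_qps:,.0f}")
print(f"{total_urls:,} URLs, ~{storage_bytes / 1e12:.1f} TB raw")
```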

Example Two: Estimating A Messaging System

Messaging systems emphasize write-heavy workloads and real-time delivery. You estimate messages sent per user per day, average message size, and peak activity periods.

This naturally leads to discussions about storage growth, bandwidth spikes, and caching recent conversations.
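For a messaging system, the same chain runs from users to messages to writes and storage. The 50 million DAU, 40 messages per user per day, 200 bytes stored per message (text plus IDs and a timestamp), and 5x peak are hypothetical assumptions.

```python
SECONDS_PER_DAY = 86_400

# Hypothetical inputs.
DAU = 50_000_000
MESSAGES_PER_USER_PER_DAY = 40
BYTES_PER_MESSAGE = 200          # text plus sender/recipient IDs and timestamp
PEAK_MULTIPLIER = 5

messages_per_day = DAU * MESSAGES_PER_USER_PER_DAY     # 2 billion
avg_write_qps = messages_per_day / SECONDS_PER_DAY     # ~23,148 writes/s
peak_write_qps = avg_write_qps * PEAK_MULTIPLIER       # ~115,741 writes/s

daily_storage = messages_per_day * BYTES_PER_MESSAGE   # 400 GB per day
yearly_storage = daily_storage * 365                   # 146 TB per year, before replication

print(f"avg ~{avg_write_qps:,.0f} msg/s, peak ~{peak_write_qps:,.0f} msg/s")
print(f"{daily_storage / 1e9:,.0f} GB/day, {yearly_storage / 1e12:,.0f} TB/year")
```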

Example Three: Estimating A News Feed

News feed estimation focuses heavily on reads. You estimate how often users refresh, how many posts are loaded per request, and how large each post is.

Interviewers love this example because it exposes whether candidates understand fan-out, caching, and read amplification.
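A news feed sketch shows read amplification, where one feed request reads many posts, and, for a fan-out-on-write design, write amplification, where one post is copied into many followers' feeds. The 100 million DAU, 10 refreshes per day, 20 posts per refresh, 1 KB per post, 10 million new posts per day, and 200 followers per poster are hypothetical assumptions.

```python
SECONDS_PER_DAY = 86_400

# Hypothetical inputs.
DAU = 100_000_000
REFRESHES_PER_USER_PER_DAY = 10
POSTS_PER_REFRESH = 20
BYTES_PER_POST = 1_000
NEW_POSTS_PER_DAY = 10_000_000
AVG_FOLLOWERS = 200

feed_requests_per_day = DAU * REFRESHES_PER_USER_PER_DAY          # 1 billion
feed_qps = feed_requests_per_day / SECONDS_PER_DAY                # ~11,574 requests/s

# Read amplification: each request loads many posts.
posts_read_per_day = feed_requests_per_day * POSTS_PER_REFRESH    # 20 billion
egress_bytes_per_day = posts_read_per_day * BYTES_PER_POST        # 20 TB per day

# Write amplification under fan-out-on-write: one insert per follower feed.
fanout_writes_per_day = NEW_POSTS_PER_DAY * AVG_FOLLOWERS         # 2 billion

print(f"feed QPS ~{feed_qps:,.0f}")
print(f"egress ~{egress_bytes_per_day / 1e12:.0f} TB/day "
      f"(~{egress_bytes_per_day * 8 / SECONDS_PER_DAY / 1e9:.2f} Gbps average)")
print(f"fan-out writes ~{fanout_writes_per_day / SECONDS_PER_DAY:,.0f} per second")
```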

Across all examples, the pattern stays the same: clear assumptions, simple math, and constant sanity checks.

How To Practice And Master Back-of-the-Envelope Calculation

Back-of-the-envelope calculation is a skill you build through repetition, not memorization. The more systems you estimate, the more natural the numbers feel.

You start recognizing patterns. Social apps look similar. Content platforms share traffic profiles. Over time, estimation becomes instinctive.

Practicing Under Interview Constraints

Practicing estimation silently is not enough. You need to practice explaining your thought process out loud. Interviews reward clarity as much as correctness.

When you rehearse, focus on pacing. You want to estimate efficiently without rushing. Confidence comes from familiarity, not speed.

Recognizing Red Flags Interviewers Notice Instantly

Interviewers quickly notice when candidates avoid numbers, hesitate excessively, or hide assumptions. They also notice when candidates blindly push forward without sanity checks.

Practicing with intentional pauses and self-correction helps you avoid these pitfalls.

How This Skill Compounds Across Interviews

Once you master back-of-the-envelope calculations, every System Design interview becomes easier. You start every design with clarity. Your decisions feel justified. Your explanations feel coherent.

This skill compounds across companies, roles, and experience levels.

Using Structured Prep Resources Effectively

Use Grokking the System Design Interview on Educative to learn curated patterns and practice full System Design problems step by step. It’s one of the most effective resources for building repeatable System Design intuition.

Final Thoughts

Back-of-the-envelope calculation is not just an interview trick. It reflects how experienced engineers think about systems long before code is written. When you estimate confidently, you show that you understand scale, constraints, and tradeoffs at a deep level.

In System Design interviews, this skill often determines whether the interviewer trusts your architecture. Candidates who can reason quantitatively feel safer to hire because their decisions are grounded in reality.

If you invest time in mastering back-of-the-envelope calculation, you are not just preparing for interviews. You are training yourself to think like a System Designer. That mindset pays dividends far beyond the interview room.

- Updated 2 months ago

- Fahim
