Caching In System Design Explained For System Design Interviews

Caching appears in almost every serious System Design interview, regardless of the problem domain. Whether you are designing a social media feed, an e-commerce platform, a search engine, or a logging system, interviewers expect you to reason about caching naturally. This is because caching directly impacts performance, scalability, cost, and user experience.

Caching in System Design is not about memorizing cache eviction policies or naming specific tools. Interviewers care more about whether you understand why caching is needed, where it should be placed, and what trade-offs it introduces. A strong answer shows awareness of access patterns, data freshness requirements, and failure scenarios.

This article explains caching in System Design from an interview preparation perspective. It focuses on concepts, decision-making, and trade-offs rather than implementation details, helping you articulate caching strategies clearly and confidently during interviews.

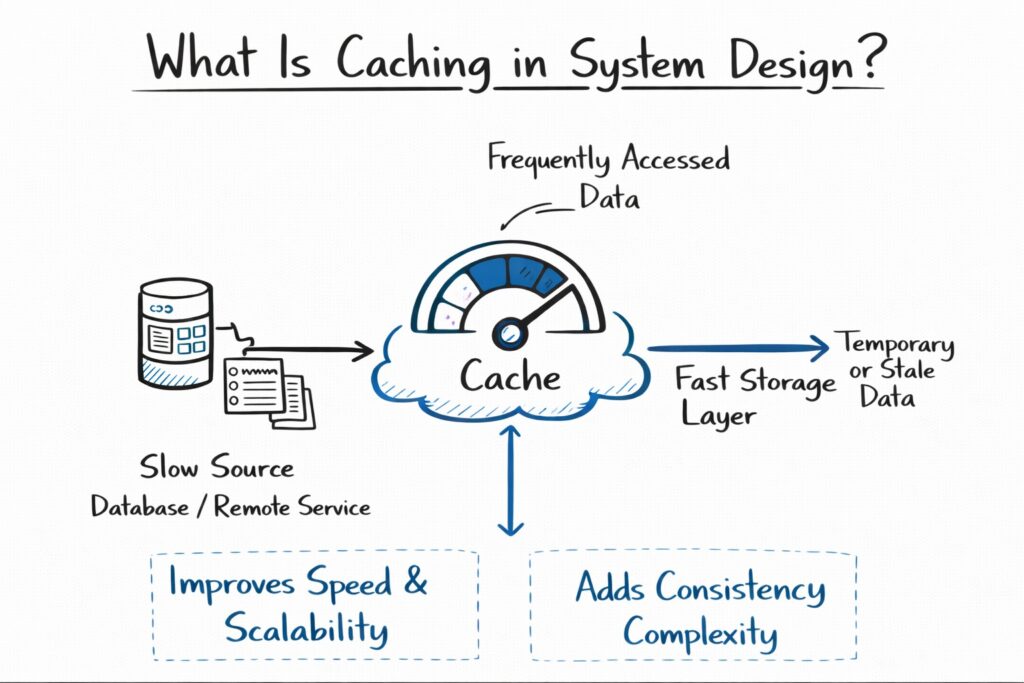

What Is Caching In System Design?

Caching is the practice of storing frequently accessed data in a faster storage layer so future requests can be served more quickly. Instead of fetching data from a slower or more expensive source, such as a database or remote service, the system retrieves it from the cache.

In System Design interviews, caching is treated as a performance optimization layer rather than a primary source of truth. Cached data is usually derived from another system and may be temporary or slightly stale.

Understanding this distinction is essential because caching improves speed and scalability but introduces complexity around consistency and invalidation.

Why Caching Is Needed In Distributed Systems

Distributed systems rely on multiple network calls, storage layers, and services. Each of these introduces latency and cost. Caching reduces the number of times the system must perform expensive operations.

From an interview perspective, caching addresses three major concerns: reducing latency for users, lowering load on backend systems, and improving overall system throughput.
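The latency benefit can be made concrete with a quick back-of-the-envelope calculation. The sketch below is illustrative only: the function name `effective_latency_ms` and the numbers (a 1 ms cache lookup, a 50 ms database read) are assumptions chosen for the example, not measurements from any real system.

```python
def effective_latency_ms(hit_ratio: float, cache_ms: float, backend_ms: float) -> float:
    """Average read latency when a miss pays for the cache lookup plus the backend call."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    miss_ratio = 1.0 - hit_ratio
    return hit_ratio * cache_ms + miss_ratio * (cache_ms + backend_ms)


# Illustrative numbers only: 1 ms cache lookup, 50 ms database read.
for ratio in (0.0, 0.5, 0.9, 0.99):
    latency = effective_latency_ms(ratio, cache_ms=1.0, backend_ms=50.0)
    print(f"hit ratio {ratio:.2f}: {latency:.1f} ms on average")
```

Under these assumed numbers, a 90% hit ratio brings the average read from 51 ms down to about 6 ms. Being able to state this kind of estimate out loud is usually enough in an interview.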

Caching decisions should follow from access patterns rather than precede them. Interviewers expect you to identify which data is read frequently, which data changes infrequently, and where caching provides the most benefit.

Where Caching Fits In A System Architecture

Caching can exist at multiple layers of a system. Each layer serves a different purpose and comes with different trade-offs.

| Cache Layer | Purpose |
|---|---|
| Client-Side Cache | Reduce repeated network calls |
| CDN Cache | Serve static or semi-static content |
| Application Cache | Speed up backend processing |
| Database Cache | Reduce database read load |

In interviews, you do not need to mention every layer. Instead, you should introduce caching at the layer that best addresses the bottleneck you identify.

Client-Side And Edge Caching

Client-side caching stores data on the user’s device or browser. This reduces repeated requests to the server and improves perceived performance.

Edge caching, typically implemented through content delivery networks, caches responses closer to users geographically. This significantly reduces latency for static assets and read-heavy content.

In System Design interviews, edge caching is especially relevant for globally distributed systems such as media platforms or content-heavy applications.
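Browsers and CDNs are typically told what they may cache through the HTTP `Cache-Control` response header. The sketch below shows one plausible mapping from response type to header value. The helper `cache_control_for`, the category names, and the specific lifetimes are assumptions made for illustration. The directives themselves (`public`, `private`, `max-age`, `s-maxage`, `immutable`, `no-store`) are standard HTTP.

```python
def cache_control_for(kind: str) -> dict[str, str]:
    """Return illustrative HTTP caching headers for a response category."""
    policies = {
        # Fingerprinted static assets: safe to cache for a long time everywhere.
        "static_asset": "public, max-age=31536000, immutable",
        # Shared, semi-static pages: short browser TTL, longer TTL at the CDN.
        "public_page": "public, max-age=60, s-maxage=300",
        # Per-user data: the browser may cache briefly, shared caches must not.
        "user_specific": "private, max-age=30",
        # Sensitive responses: never stored by any cache.
        "sensitive": "no-store",
    }
    if kind not in policies:
        raise ValueError(f"unknown response kind: {kind}")
    return {"Cache-Control": policies[kind]}


print(cache_control_for("public_page"))
```

The exact lifetimes matter less than the reasoning: content shared by all users can live at the edge, while per-user or sensitive responses should stay out of shared caches.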

Application-Level Caching

Application-level caching stores data in memory within or alongside backend services. This type of caching is often used for frequently accessed objects, configuration data, or precomputed results.

Interviewers often expect candidates to introduce application-level caching when database reads become a bottleneck. This demonstrates an understanding of how to protect core storage systems from excessive load.

| Aspect | Application Cache Impact |
|---|---|
| Latency | Significantly reduced |
| Database Load | Lowered |
| Consistency | Must be managed |
| Complexity | Increased |

Discussing these trade-offs shows thoughtful design reasoning.

Database Caching And Query Optimization

Some databases provide internal caching mechanisms that store frequently accessed rows or query results. While this improves performance, it does not eliminate the need for external caches.

In interviews, it is usually better to treat database caching as a complementary optimization rather than the primary strategy. Relying solely on database caching limits scalability and flexibility.

Mentioning this distinction shows maturity, and keeping the main caching layer outside the database avoids overloading it with responsibilities.

Cache Access Patterns In System Design

Understanding access patterns is critical to designing an effective caching strategy. Interviewers often probe this area by asking which requests benefit most from caching.

Read-heavy workloads benefit significantly from caching, while write-heavy workloads require careful consideration to avoid excessive invalidation.

| Workload Type | Caching Effectiveness |
|---|---|
| Read-Heavy | Very high |
| Write-Heavy | Limited |
| Read-Mostly | High |
| Random Access | Variable |

Explaining how workload characteristics influence caching decisions is a strong interview signal.

Cache Invalidation And Data Freshness

Cache invalidation is one of the most challenging aspects of caching in System Design. Cached data may become outdated when the underlying data changes.

Interviewers often test whether candidates recognize this challenge and can articulate basic strategies for managing it.

Common approaches include time-based expiration, write-through updates, and explicit invalidation when data changes. Rather than naming these approaches mechanically, it is more important to explain how freshness requirements influence cache behavior.

For example, slightly stale data may be acceptable for a news feed but unacceptable for financial transactions.
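Two of the approaches mentioned above, time-based expiration and explicit invalidation, can be combined in a small sketch. The `TTLCache` class below is a hypothetical illustration rather than any particular library's API, and the 60-second TTL is an assumed value. The clock is injectable so that expiry can be exercised without sleeping.

```python
import time
from typing import Any, Callable, Optional


class TTLCache:
    """Entries expire after ttl_seconds; writers can also invalidate explicitly."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: dict[str, tuple[float, Any]] = {}

    def get(self, key: str) -> Optional[Any]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # too old: treat it as a miss
            return None
        return value

    def set(self, key: str, value: Any) -> None:
        self._entries[key] = (self._clock() + self._ttl, value)

    def invalidate(self, key: str) -> None:
        """Explicit invalidation, e.g. called right after the source data changes."""
        self._entries.pop(key, None)


# Freshness requirements drive the TTL: a feed might tolerate a minute of staleness.
feed_cache = TTLCache(ttl_seconds=60)
feed_cache.set("feed:42", ["post-1", "post-2"])
feed_cache.invalidate("feed:42")  # the feed changed, so drop the cached copy
print(feed_cache.get("feed:42"))  # prints None: the next read must go to the source
```

For data such as account balances, the same reasoning might lead to a TTL of zero, meaning the value is simply not cached.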

Consistency Trade-Offs In Caching

Caching introduces a trade-off between performance and consistency. Strong consistency guarantees are harder to maintain when cached data is involved.

In interviews, candidates should explicitly discuss whether eventual consistency is acceptable and how inconsistencies affect user experience.

Framing consistency decisions in terms of product impact rather than technical purity is particularly effective.

Cache Eviction And Capacity Management

Caches have limited storage, so data must eventually be evicted. While interviewers rarely expect detailed eviction algorithms, they do expect you to acknowledge that caches cannot grow indefinitely.

Eviction policies influence which data remains cached and how effective the cache is over time.

| Eviction Concern | Design Implication |
|---|---|
| Limited Memory | Requires eviction |
| Access Patterns | Influence effectiveness |
| Hot Data | Prioritized |
| Cold Data | Removed |

This level of discussion is usually sufficient for interviews.
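If an interviewer does ask how hot data stays cached while cold data is removed, least-recently-used (LRU) eviction is a common policy to reach for. The sketch below is one minimal way to express it with Python's `collections.OrderedDict`. The `LRUCache` class and its capacity of 2 are assumptions for the example, and LRU is only one of several possible policies.

```python
from collections import OrderedDict
from typing import Any, Optional


class LRUCache:
    """Bounded cache that evicts the least recently used entry when full."""

    def __init__(self, capacity: int):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._capacity = capacity
        self._items: OrderedDict[str, Any] = OrderedDict()

    def get(self, key: str) -> Optional[Any]:
        if key not in self._items:
            return None
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key: str, value: Any) -> None:
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self._capacity:
            self._items.popitem(last=False)  # drop the least recently used entry


lru = LRUCache(capacity=2)
lru.put("a", 1)
lru.put("b", 2)
lru.get("a")     # "a" is now hotter than "b"
lru.put("c", 3)  # cache is full, so the coldest key ("b") is evicted
print(lru.get("b"), lru.get("a"), lru.get("c"))  # prints: None 1 3
```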

Failure Scenarios And Cache Reliability

Caches can fail just like any other system component. When a cache becomes unavailable, the system should degrade gracefully rather than fail completely.

In System Design interviews, you should explain what happens during a cache miss or cache outage. A common expectation is that the system falls back to the primary data source, even if performance degrades.

This demonstrates resilience thinking, which interviewers value highly.
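The fallback behavior described above can be sketched as follows. Everything here is a stand-in: `FlakyCache` simulates a cache client that can be switched into an outage, `CacheUnavailable` is a hypothetical exception type, and `read_from_database` is a placeholder for the slower primary read.

```python
import logging
from typing import Optional

logger = logging.getLogger("cache_fallback")


class CacheUnavailable(Exception):
    """Raised by the hypothetical cache client when the cache cannot be reached."""


class FlakyCache:
    """Stand-in cache client that can be switched into an outage."""

    def __init__(self) -> None:
        self.healthy = True
        self._data: dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        if not self.healthy:
            raise CacheUnavailable("cache unreachable")
        return self._data.get(key)

    def set(self, key: str, value: str) -> None:
        if not self.healthy:
            raise CacheUnavailable("cache unreachable")
        self._data[key] = value


def read_from_database(key: str) -> str:
    return f"value-for-{key}"  # placeholder for the slower primary read


def read(cache: FlakyCache, key: str) -> str:
    """Serve from the cache when possible; never fail just because the cache is down."""
    try:
        cached = cache.get(key)
        if cached is not None:
            return cached
    except CacheUnavailable:
        logger.warning("cache unavailable, serving %s from the database", key)
        return read_from_database(key)

    value = read_from_database(key)  # ordinary cache miss
    try:
        cache.set(key, value)
    except CacheUnavailable:
        logger.warning("could not populate cache for %s", key)
    return value


flaky = FlakyCache()
print(read(flaky, "a"))  # miss: read from the database, then cached
flaky.healthy = False
print(read(flaky, "b"))  # outage: still answered, just more slowly
```

The design choice worth stating in an interview is that a cache error is treated like a miss: the request is slower, but it still succeeds.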

Caching And Scalability

Caching plays a critical role in scaling systems. By reducing load on databases and backend services, caching allows systems to handle more traffic without linear increases in infrastructure cost.

From a product perspective, caching supports growth by keeping response times stable as usage increases.

In interviews, linking caching decisions to scalability goals strengthens your answer.
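A rough way to link caching to scalability is to estimate how much traffic still reaches the database at a given hit ratio. The sketch below assumes a hypothetical helper `backend_qps` and an illustrative 95% hit ratio. Real hit ratios depend heavily on the workload.

```python
def backend_qps(total_qps: float, hit_ratio: float) -> float:
    """Requests per second that still reach the database after the cache absorbs hits."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return total_qps * (1.0 - hit_ratio)


# Illustrative traffic levels with an assumed 95% hit ratio.
for total in (1_000, 10_000, 100_000):
    print(f"{total} req/s total -> about {round(backend_qps(total, 0.95))} req/s at the database")
```

With these assumed numbers, a hundredfold increase in traffic leaves the database handling roughly 5,000 requests per second rather than 100,000, which is the kind of sub-linear growth the section describes.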

Caching And Cost Optimization

Caching is not just about performance. It also reduces infrastructure costs by lowering database usage, network bandwidth, and compute requirements.

This angle is especially relevant in senior-level System Design interviews, where cost awareness is an important evaluation criterion.

Discussing caching as a cost optimization strategy shows business-aligned thinking.

Common Caching Mistakes In System Design Interviews

One common mistake is introducing caching without identifying a clear bottleneck. Interviewers may question why caching is needed if performance is not yet a concern.

Another mistake is ignoring invalidation and consistency issues. Simply stating that data is cached without addressing freshness suggests a shallow understanding.

Strong candidates introduce caching deliberately and discuss its implications openly.

How Interviewers Evaluate Caching Discussions

Interviewers evaluate caching in System Design interviews based on clarity, relevance, and trade-off awareness.

| Evaluation Area | What Interviewers Look For |
|---|---|
| Placement | Cache introduced at the right layer |
| Justification | Clear performance reasoning |
| Trade-Offs | Awareness of consistency issues |
| Failure Handling | Graceful degradation |

You do not need to cover every detail, but you should demonstrate balanced reasoning.

Applying Caching To Common System Design Questions

Caching appears naturally in many interview scenarios. For example, a feed system benefits from caching precomputed results, while a search system benefits from caching popular queries.

The key is to align caching decisions with access patterns and user expectations rather than forcing caching into every design.

This adaptability is often what distinguishes strong candidates.
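As a small illustration of caching popular search queries, the sketch below memoizes a search function with Python's standard `functools.lru_cache`. The `run_search_backend` function is a hypothetical stand-in for an expensive search call, and the cache size of 1024 is an arbitrary assumption. This cache lives inside one process, so a multi-server design would more likely use a shared cache.

```python
from functools import lru_cache

backend_calls = 0  # counts how often the expensive path runs


def run_search_backend(query: str) -> tuple[str, ...]:
    """Hypothetical stand-in for an expensive search over an index."""
    global backend_calls
    backend_calls += 1
    return tuple(f"{query}-result-{i}" for i in range(3))


@lru_cache(maxsize=1024)
def search(query: str) -> tuple[str, ...]:
    """Repeated queries are answered from memory instead of hitting the backend."""
    return run_search_backend(query)


for q in ["cats", "dogs", "cats", "cats"]:
    search(q)

print(search.cache_info())  # 2 hits and 2 misses for the four queries above
print(backend_calls)        # prints 2: only the first "cats" and "dogs" reached the backend
```

The results are returned as tuples so that callers cannot accidentally mutate a cached value.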

Preparing For Caching Questions In System Design Interviews

Effective preparation involves practicing how to explain caching decisions clearly and concisely. Rather than memorizing strategies, focus on understanding why caching is useful and when it becomes problematic.

Studying real-world systems and reflecting on where caching fits into them builds intuition that transfers well to interviews.

Conclusion

Caching in System Design is not a checkbox or an optimization you add automatically. It is a strategic tool that improves performance, scalability, and cost efficiency when used thoughtfully.

In System Design interviews, demonstrating a clear understanding of caching shows that you can reason about real-world system constraints and trade-offs. When you explain why caching is needed, where it fits, and what risks it introduces, you signal maturity and practical engineering judgment.

By treating caching as a design decision rather than a technical trick, you will deliver stronger, more convincing System Design answers and approach interviews with greater confidence.

- Updated 3 weeks ago

- Fahim
