Google Translate System Design: How To Design A Global Language Translation System

Google Translate System Design shows up in interviews because it sits at the intersection of distributed systems and machine learning, which is exactly where many modern production systems live. When interviewers ask you to design Google Translate, they are not testing whether you understand linguistics. They are testing whether you can reason about large-scale inference systems that serve millions of users across the globe.

Translation systems introduce complexity that traditional CRUD systems do not. Requests are compute-heavy, latency-sensitive, and dependent on machine learning models that evolve over time. This forces you to think carefully about performance, model deployment, caching, and fault tolerance, all while maintaining acceptable quality.

Another reason this problem is popular is that it allows interviewers to probe in depth selectively. At a high level, the system looks simple. A user sends text and receives a translation. As you go deeper, issues like language detection accuracy, inference latency, and model versioning quickly surface. Strong candidates uncover these layers naturally.

This question also helps interviewers distinguish between candidates who memorize architectures and those who understand system behavior. There is no single correct design for the Google Translate System Design. What matters is how you structure the problem, articulate trade-offs, and adapt when constraints change.

Most importantly, translation is a global product. That forces you to reason about regional deployment, traffic locality, and availability in a way few other interview questions do.

Defining The Problem And Core Requirements

Before discussing architecture, you need to clearly define what you are building. Google Translate System Design is fundamentally about converting input text from one language to another accurately, reliably, and at scale.

At its simplest, the system accepts text input and returns translated output. In an interview context, you should explicitly narrow the scope early. Focus on text-based translation rather than speech or image translation unless the interviewer asks otherwise. This clarity prevents unnecessary complexity.

The system must support a wide range of languages, handle variable input sizes, and respond within acceptable latency bounds. While perfect accuracy is impossible, the system should aim for consistently high-quality translations.

Just as important is stating what the system does not do. Google Translate System Design is not responsible for conversational turn-taking, real-time voice processing, or downstream decision-making. Drawing this boundary helps interviewers understand your assumptions.

Functional Requirements In Google Translate System Design

The functional requirements describe what the system must accomplish from a user perspective. Users should be able to submit text, have the system automatically detect the source language when necessary, and receive a translated response. The system must support multiple language pairs and handle both short phrases and longer passages.

While these requirements sound straightforward, they introduce challenges around inference speed, language coverage, and quality consistency.

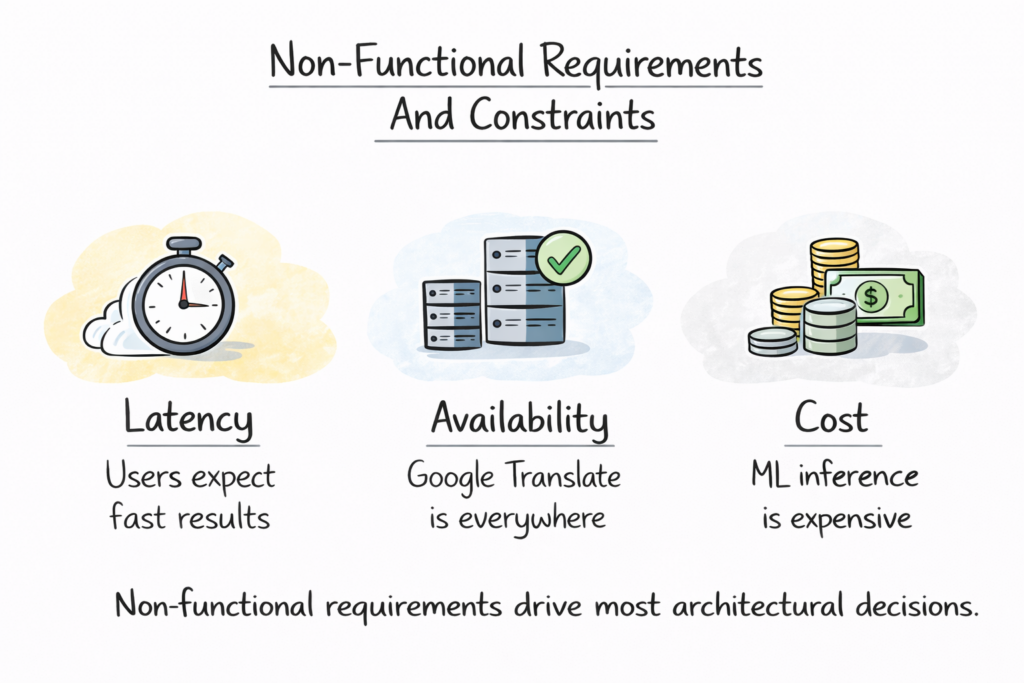

Non-Functional Requirements And Constraints

Non-functional requirements drive most architectural decisions. Latency matters because users expect translations quickly. Availability matters because the service is often embedded into other products. Cost matters because machine learning inference is expensive at scale.

The table below summarizes how interviewers typically think about these constraints.

| Requirement Type | Why It Matters |

|---|---|

| Low Latency | Translation must feel instant |

| High Availability | Global users depend on uptime |

| Scalability | Millions of concurrent requests |

| Quality Consistency | Trust in translations |

| Cost Efficiency | ML inference is expensive |

By clearly stating these requirements, you show that you are designing for real-world usage rather than an academic exercise.

High-Level Architecture Of Google Translate System Design

Once the problem is defined, you can introduce a high-level architecture. This is where you demonstrate system-level thinking without diving too early into implementation details.

At a high level, Google Translate System Design consists of a request-handling layer, a preprocessing layer, a translation inference layer, and a response generation layer. Each layer has a distinct responsibility and can scale independently.

A typical request begins when a user submits text through a client application. The request is routed to an API gateway that handles authentication, throttling, and routing. From there, the text passes through preprocessing steps before being sent to a translation model for inference.

After inference completes, the translated output is post-processed and returned to the user. This entire flow must complete within a tight latency budget, especially for interactive use cases.

Separation Of Responsibilities Across Layers

One of the strongest signals you can give in an interview is emphasizing the separation of responsibilities. Preprocessing logic should not live inside the model. Routing logic should not depend on model internals. This separation improves maintainability and allows independent evolution of components.

The table below illustrates how responsibilities are typically divided.

| Layer | Primary Responsibility |

|---|---|

| API Layer | Request handling and routing |

| Preprocessing Layer | Normalization and detection |

| Inference Layer | Translation generation |

| Post-Processing Layer | Output formatting |

Explaining the system in layers helps interviewers follow your reasoning and sets up deeper discussions later.

Input Handling And Language Detection

Input handling is the foundation of the Google Translate System Design. If the system misinterprets input or detects the wrong language, everything downstream suffers.

Users submit text in many forms. Some inputs are short phrases, others are paragraphs. Some include punctuation, emojis, or mixed languages. Before translation begins, the system must normalize this input into a consistent internal representation.

Text Normalization And Preprocessing

Normalization includes handling character encoding, removing unsupported symbols, and standardizing punctuation. This step ensures that translation models receive clean and predictable input, which improves output quality.

Tokenization often follows normalization. Text is split into tokens according to language-specific rules. This step prepares the input for model inference and can significantly impact translation accuracy.

Automatic Language Detection

Language detection determines which translation model to use. In Google Translate System Design, this step must be fast and highly accurate. Misclassification leads to poor translations and user frustration.

Language detection models typically operate independently of translation models. They analyze input text and assign probabilities to possible languages. The system then selects the most likely source language or asks the user for clarification if confidence is low.

The table below highlights key trade-offs in input handling.

| Design Choice | System Impact |

|---|---|

| Aggressive Normalization | Better consistency, risk of data loss |

| Lightweight Detection | Faster response, lower accuracy |

| Heavy Detection Models | Higher accuracy, increased latency |

| User Override Support | Better UX, added complexity |

By discussing these trade-offs, you demonstrate that you understand how early-stage decisions shape the entire system.

Translation Pipeline And Model Inference Flow

Once input text has been normalized and its language identified, the core of the Google Translate System Design comes into play. This is where raw text is transformed into a translated output through a structured pipeline.

The translation pipeline exists to shield models from variability while keeping latency under control. Instead of sending raw user input directly to models, the system routes requests through a controlled flow that ensures consistency, observability, and fallback handling.

At the center of this pipeline is the inference service. This service selects the appropriate translation model based on the source and target languages and executes inference to generate translated text.

Request Routing And Model Selection

Model selection is not trivial. Google Translate supports many language pairs, and not all pairs use the same models. Some languages rely on direct translation models, while others route through an intermediate language.

The system must map each request to the correct model version quickly. This mapping is usually handled by lightweight routing logic that references a model registry. The registry abstracts away model details and allows upgrades without disrupting traffic.

Managing Inference Latency

Inference is computationally expensive. To meet latency requirements, inference services are optimized for batch execution and hardware acceleration. Requests may be grouped briefly to improve throughput, as long as user-perceived latency remains acceptable.

The table below summarizes how inference flow decisions affect performance.

| Inference Decision | System Effect |

|---|---|

| Direct Model Routing | Lower latency |

| Intermediate Language Routing | Broader coverage, higher cost |

| Micro-Batching | Higher throughput, slight delay |

| Hardware Acceleration | Faster inference, higher cost |

Explaining this flow shows that you understand how ML systems are integrated into production architectures.

Neural Machine Translation Models And Training Considerations

Machine learning models are the heart of the Google Translate System Design, but interviews rarely require you to explain model internals. What matters is how models are trained, deployed, and managed within the system.

Translation models are trained offline using large multilingual datasets. Training is resource-intensive and happens separately from serving. Once trained, models are evaluated, versioned, and prepared for deployment.

Offline Training And Model Versioning

Training pipelines produce new model versions periodically. Each version must be tested for quality improvements and regressions. Versioning allows the system to roll out improvements gradually rather than replacing models instantly.

You should explain that production systems often run multiple model versions simultaneously. Traffic can be split between versions to compare performance and catch issues early.

Deploying Models For Inference

Deployment focuses on reliability and predictability. Models are packaged with their dependencies and deployed to inference clusters. These clusters are scaled independently based on demand.

The table below highlights key model lifecycle stages.

| Model Stage | Purpose |

|---|---|

| Offline Training | Improve translation quality |

| Evaluation | Validate improvements |

| Versioning | Enable safe rollout |

| Serving Deployment | Support live traffic |

By focusing on lifecycle management rather than model math, you align with what interviewers expect.

Handling Scale, Concurrency, And Latency

Google Translate System Design must handle massive global traffic. Requests arrive from users in different regions, time zones, and network conditions. The system must respond quickly regardless of location.

Concurrency is handled through stateless inference services that scale horizontally. Load balancers distribute requests across available instances, and auto-scaling adjusts capacity dynamically.

Regional Deployment And Traffic Locality

To reduce latency, inference services are deployed in multiple regions. Requests are routed to the nearest healthy region whenever possible. This improves responsiveness and reduces cross-region traffic costs.

However, not all models are available in every region. When a required model is unavailable locally, the system may route requests to a nearby region or fall back to a simpler model.

Latency Budgets And Timeouts

Latency budgets govern every stage of the request lifecycle. If a step exceeds its budget, the system must decide whether to wait, retry, or fall back. Timeouts prevent individual requests from monopolizing resources.

The table below summarizes strategies used to manage latency.

| Strategy | Benefit |

|---|---|

| Regional Inference | Faster responses |

| Horizontal Scaling | Handles spikes |

| Timeouts | Prevents overload |

| Fallback Models | Maintains availability |

This discussion shows that you design for responsiveness under real-world conditions.

Caching, Reuse, And Optimization Strategies

Caching plays a crucial role in Google Translate System Design. Many translation requests are repeated, especially for common phrases or popular language pairs. Recomputing these translations wastes resources.

Caching allows the system to reuse previously computed translations. Cache keys typically include the source text, source language, target language, and model version to ensure correctness.

Cache Placement And Invalidation

Caches are often placed close to inference services or at the API layer. This minimizes latency and reduces load on models. However, caching introduces complexity around invalidation.

When models are updated, cached translations may become stale. Systems must decide whether to invalidate caches aggressively or tolerate slightly outdated translations temporarily.

Trade-Offs In Cache Design

High cache hit rates improve performance and reduce cost. However, aggressive caching increases memory usage and complicates consistency guarantees. Strong candidates explain these trade-offs rather than assuming caching is always beneficial.

The table below highlights common caching decisions.

| Caching Decision | System Impact |

|---|---|

| Aggressive Caching | Lower cost, higher complexity |

| Conservative Caching | Simpler design, higher load |

| Versioned Keys | Safer rollouts |

| Regional Caches | Faster access, duplication cost |

By discussing caching thoughtfully, you demonstrate an understanding of both performance and operational complexity.

Quality Evaluation, Feedback, And Continuous Improvement

In Google Translate System Design, translation quality is never a solved problem. Languages evolve, usage patterns change, and models degrade if they are not continuously improved. That makes quality evaluation an ongoing system concern rather than a one-time task.

Quality is measured using a combination of automated metrics and human feedback. Automated metrics provide scale and consistency, while human feedback captures nuances that models struggle with. These signals feed back into training pipelines to improve future model versions.

Automated Evaluation And Monitoring

Automated evaluation allows the system to measure quality continuously. Metrics compare translated output against reference translations or evaluate fluency and adequacy statistically. While these metrics are imperfect, they are useful for detecting regressions and comparing model versions.

Monitoring systems track these metrics in production. Sudden drops in quality can indicate issues such as model misrouting or data drift.

User Feedback Loops

User feedback provides real-world insight. Users may rate translations or suggest alternatives. While feedback volume is lower than automated signals, its quality is high.

Feedback data must be carefully filtered and anonymized before being used for training. This ensures privacy while still enabling improvement.

The table below shows how different feedback sources contribute to improvement.

| Signal Source | Primary Value |

|---|---|

| Automated Metrics | Continuous monitoring |

| Human Review | Quality validation |

| User Feedback | Real-world correction |

| A/B Testing | Controlled comparison |

Discussing quality evaluation demonstrates that you understand translation as a living system.

Reliability, Fault Tolerance, And Global Availability

Google Translate System Design must operate reliably at a global scale. Users expect translations to work anytime and anywhere. Downtime or severe degradation undermines trust in the product.

Reliability starts with redundancy. Critical services are replicated across regions. Requests are routed to healthy instances automatically. If a service fails, traffic shifts without user involvement.

Handling Partial Failures

Partial failures are common in distributed systems. A single model cluster may go down while others remain healthy. The system must detect these failures quickly and adapt.

Fallback strategies are essential. If a high-quality model is unavailable, the system may use a simpler or older model temporarily. While quality may degrade slightly, availability is preserved.

Global Deployment And Traffic Routing

Traffic routing is optimized for both latency and reliability. Requests are usually served from the nearest region, but routing systems continuously evaluate health signals and adjust dynamically.

The table below summarizes core reliability mechanisms.

| Reliability Mechanism | System Benefit |

|---|---|

| Regional Replication | High availability |

| Health Checks | Fast failure detection |

| Fallback Models | Graceful degradation |

| Traffic Rerouting | Resilience under load |

Explaining these mechanisms shows that you design systems that users can depend on.

Trade-Offs, Bottlenecks, And Real-World Constraints

Every design decision in the Google Translate System Design involves trade-offs. Interviewers care deeply about whether you recognize and justify these choices.

Accuracy versus latency is a constant tension. Larger models produce better translations but increase inference time. Smaller models respond faster but sacrifice quality.

Cost is another major constraint. Inference infrastructure is expensive, and translation traffic is massive. Systems must optimize resource usage without harming user experience.

Privacy And Data Governance

Translation systems handle sensitive user text. Privacy constraints limit how data can be stored and reused. Anonymization, encryption, and access controls are non-negotiable in production systems.

These constraints often restrict aggressive caching or long-term retention, forcing careful design.

The table below highlights key trade-offs.

| Trade-Off | Impact |

|---|---|

| Accuracy Vs Latency | User experience |

| Model Size Vs Cost | Infrastructure spend |

| Caching Vs Freshness | Translation quality |

| Privacy Vs Reuse | Data utilization |

Calling out these constraints signals engineering maturity.

How To Approach Google Translate System Design In Interviews

Approaching Google Translate System Design in an interview requires structure and restraint. You should not dive into machine learning details unless prompted. Focus first on system flow and constraints.

Start by clarifying scope and requirements. Then present a high-level architecture. Let the interviewer delve into specific components such as inference, caching, or reliability.

Narrate your reasoning as you go. When discussing trade-offs, explain why you choose one option over another. Interviewers value clarity and judgment more than technical jargon.

Adapting To Follow-Up Questions

Interviewers often change constraints mid-discussion. They may ask you to reduce latency or cut costs. Treat these as opportunities to demonstrate flexibility rather than as corrections.

The table below shows how interviewers evaluate different stages.

| Interview Stage | What Is Evaluated |

|---|---|

| Problem Framing | Clarity and assumptions |

| Architecture | System thinking |

| Deep Dives | Technical judgment |

| Trade-Offs | Seniority and experience |

Approaching the interview as a collaborative design session improves outcomes significantly.

Using structured prep resources effectively

Use Grokking the System Design Interview on Educative to learn curated patterns and practice full System Design problems step by step. It’s one of the most effective resources for building repeatable System Design intuition.

You can also choose the best System Design study material based on your experience:

Final Thoughts

Google Translate System Design is a powerful interview problem because it combines distributed systems, machine learning, and global product constraints into a single discussion. It tests not only what you know, but how you reason under uncertainty.

If you approach this problem with clear structure, thoughtful trade-offs, and honest communication, you demonstrate exactly what interviewers are looking for. Mastering this design prepares you to reason about any large-scale, ML-powered system beyond translation.

- Updated 5 days ago

- Fahim

- 14 min read